Agile Principles for Performance Evaluation

Chapter: Performance Engineering

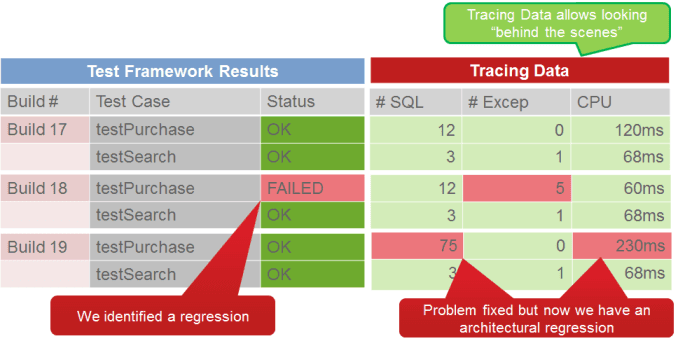

Recent advances in agile software development and best practices, such as agile continuous integration and test-driven development, make it easier to determine performance metrics at the code and component level during development. For instance, we can use a unit test to examine the metrics for any given component continually, checking execution time, number of database queries executed, or CPU usage every time there's a programmatic change. This way any problems introduced during development can be diagnosed and fixed right away, allowing us to build better software—with fewer issues making it into the load-testing phase.
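As a rough illustration of the kind of check described above, the following sketch measures execution time and the number of database queries for a single component call and fails when either exceeds a budget. All names here (`QueryCounter`, `ComponentMetrics`, `PerformanceCheck`) are hypothetical and not taken from any particular framework. It assumes the data-access layer can be instrumented to call `recordQuery()` for each query it issues. In a real project, the budget check would typically live inside a unit test run by the build.

```java
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical hook: the data-access layer calls recordQuery() per query. */
final class QueryCounter {
    private final AtomicInteger count = new AtomicInteger();

    void recordQuery() {
        count.incrementAndGet();
    }

    int count() {
        return count.get();
    }
}

/** Metrics captured for one invocation of the component under test. */
final class ComponentMetrics {
    final long elapsedNanos;
    final int queryCount;

    ComponentMetrics(long elapsedNanos, int queryCount) {
        this.elapsedNanos = elapsedNanos;
        this.queryCount = queryCount;
    }
}

final class PerformanceCheck {
    private PerformanceCheck() {
    }

    /** Runs the component once and records wall-clock time and queries issued. */
    static ComponentMetrics measure(Runnable component, QueryCounter counter) {
        int queriesBefore = counter.count();
        long start = System.nanoTime();
        component.run();
        long elapsed = System.nanoTime() - start;
        return new ComponentMetrics(elapsed, counter.count() - queriesBefore);
    }

    /** Throws AssertionError if the metrics exceed the given budget. */
    static void assertWithinBudget(ComponentMetrics metrics, long maxMillis, int maxQueries) {
        long elapsedMillis = metrics.elapsedNanos / 1_000_000;
        if (metrics.queryCount > maxQueries) {
            throw new AssertionError("Query budget exceeded: "
                    + metrics.queryCount + " > " + maxQueries);
        }
        if (elapsedMillis > maxMillis) {
            throw new AssertionError("Time budget exceeded: "
                    + elapsedMillis + " ms > " + maxMillis + " ms");
        }
    }
}
```

A test would wrap the component call in `PerformanceCheck.measure(...)` and then call `assertWithinBudget` with limits agreed on by the team. Query counts tend to be more stable than timings across machines, so a count budget is usually the less flaky of the two checks.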

Figure 3.2 shows the benefits of a unit-test framework that allows us to verify the functionality of code. For most companies, it is an easy step to add tracing data to test metrics already used for performance analysis, scalability, and component behavior. Regressions or problems with specific implementations can then be identified with the next test run.
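One way to spot a regression on the next test run is to compare the metrics of the current run against a stored baseline. The sketch below assumes metrics are kept as simple name-to-value maps and that a higher value is always worse. The class name `RegressionDetector`, the metric names, and the tolerance parameter are illustrative assumptions, not part of any specific tool.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class RegressionDetector {
    private RegressionDetector() {
    }

    /**
     * Returns the names of metrics whose current value exceeds the baseline
     * by more than the given relative tolerance (0.10 means 10 percent).
     * Metrics missing from the current run are skipped.
     */
    static List<String> findRegressions(Map<String, Double> baseline,
                                        Map<String, Double> current,
                                        double tolerance) {
        List<String> regressions = new ArrayList<>();
        // TreeMap gives a stable, alphabetical reporting order.
        for (Map.Entry<String, Double> entry : new TreeMap<>(baseline).entrySet()) {
            Double now = current.get(entry.getKey());
            if (now == null) {
                continue; // metric was not captured in this run
            }
            double allowed = entry.getValue() * (1.0 + tolerance);
            if (now > allowed) {
                regressions.add(entry.getKey());
            }
        }
        return regressions;
    }
}
```

A build step could call `findRegressions` after each test run and fail the build when the returned list is not empty. The tolerance guards against normal run-to-run noise, which matters more for timing metrics than for counts such as queries executed.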

Transition from Traditional to Continuous Performance Engineering

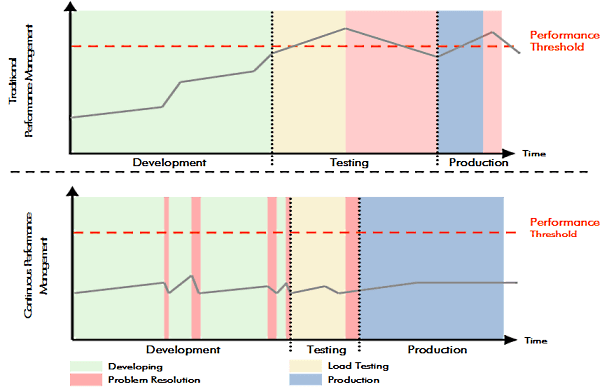

Continuously verifying architectural metrics and fixing problems as they happen in development leads to shorter throughput times in load and performance tests because we are already testing a higher-quality software product. In addition, automation in test and build management makes it possible to automate a large number of testing tasks, which can then be run regularly as development progresses.

Figure 3.3 compares the traditional approach to performance management with the continuous approach. Instead of one long testing phase with an additional long fixing phase at the end of the development cycle, we've implemented continuous performance testing during development, thus achieving a shorter testing phase (with a correspondingly shorter fixing phase before we can release the product). Integrating performance engineering into development slightly prolongs development, as it is an additional task. But we save so much on testing and are able to achieve such a consistently high-quality product that the gain far outweighs the price.

Transitioning from traditional to continuous performance engineering requires some effort, not unlike adopting agile development methodologies. I've worked with companies that made both transitions, which we can refer to as the full transition. The key benefit we saw was not simply a shorter end-of-release testing phase. In fact, intense performance and load testing is still necessary. The main advantage we experienced was consistently higher software quality and a nearly trivial fixing phase after the final performance tests, which allowed the engineering teams to plan with greater confidence.

Now let's have a closer look at the three most important methods of modern performance engineering during development: dynamic architecture validation in the development environment, small-scale performance tests in continuous integration, as well as enforcement of development best practices to avoid common performance problems. We can't say it enough times: both large-scale load testing and traditional performance testing remain essential elements of performance engineering, and are thoroughly covered in the upcoming Load Testing—Essential and Not Difficult section.
