In my last blog article, I used Activision’s 1980s blockbuster video game Pitfall to highlight the Pitfalls of Traditional Synthetic Monitoring. (Shameless self-promoting plug: if you haven’t read that article, you should do so now.) I argued that traditional synthetic monitoring can be described as “disconnected synthetic monitoring” since today these technologies overwhelmingly operate in “monitoring silos”. I also highlighted three fundamental limitations (or pitfalls) inherent in synthetic monitoring: 1. detected issues will always require external validation, 2. alerts cannot tell you the business impact of an incident, and 3. results from synthetic monitoring cannot tell you the root cause of a problem. In this follow-up blog post, I want to outline three additional pitfalls of traditional synthetic monitoring and, more importantly, offer some practical solutions to overcome them!

As we all know, web and mobile application technology has exploded over the last 10 years. Applications are no longer silos in and of themselves; they are often part of a larger ecosystem of software working together toward a single goal: delivering amazing experiences to customers. This means lots of dependencies, dozens or even hundreds of microservices, and multiple operating environments (cloud and data centers), with customers using a myriad of devices such as laptops, tablets, phones, kiosks, and even IoT-enabled gadgets. (Think about the last time you made a hotel reservation, deposited a check, or ordered food!)

Unfortunately, synthetic monitoring has not been able to keep up. There has certainly been innovation in synthetic monitoring, but several factors make scripting for every possible scenario challenging: the increased pace of app updates and infrastructure changes, the multiple entry points customers can use to reach your online services, the diverse set of devices they use to reach them, and the unique, personalized experiences those services provide. Actually, it’s impossible! Let’s look at some of these.

The right hand doesn’t always let the left hand know what it’s doing!

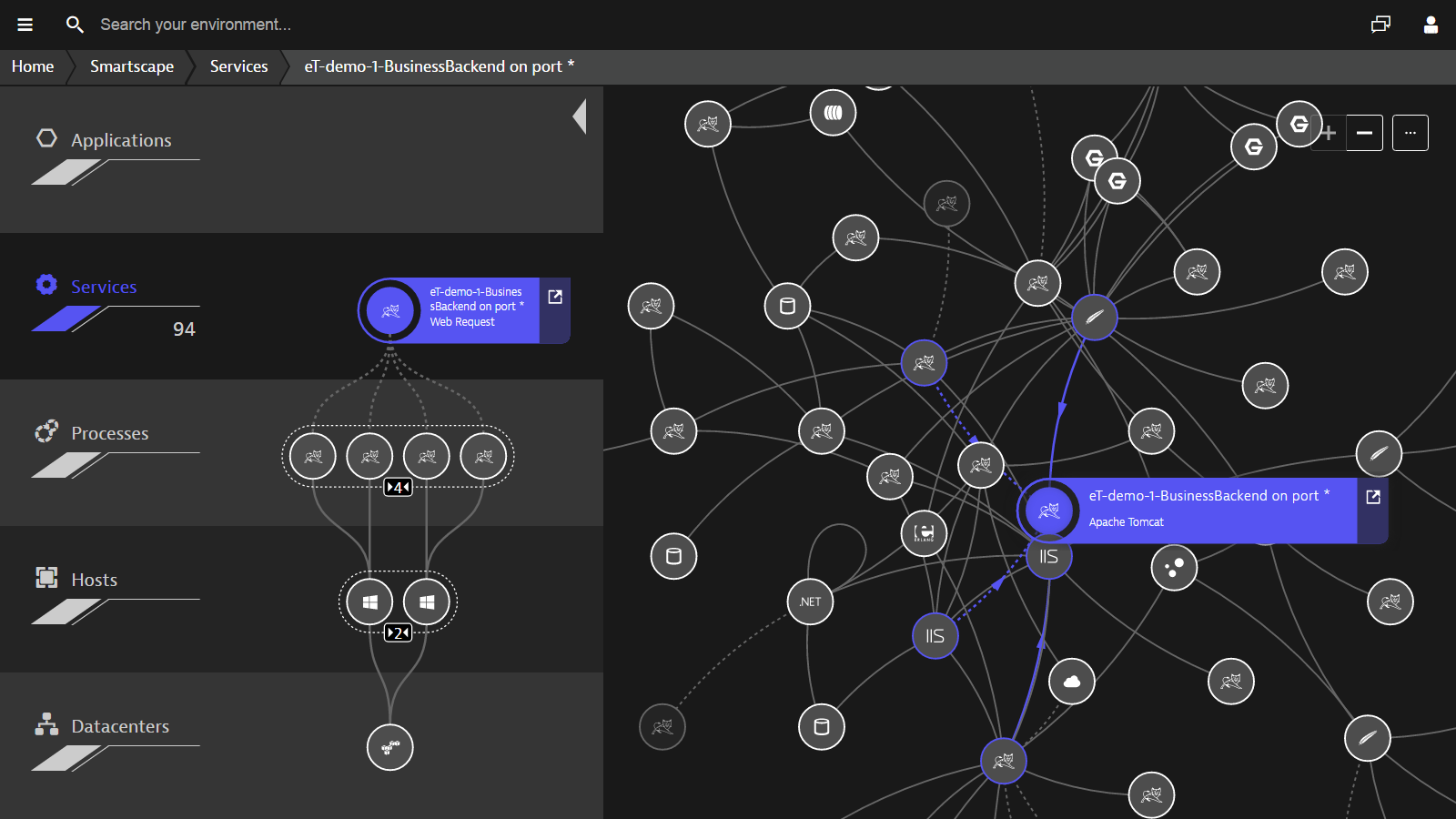

Applications and infrastructures (cloud, data center, hybrid) are getting more complex and more dynamic. Not only are DevOps teams leveraging automation to test and release code in both the cloud and managed data centers, but the code itself can spin up and shut down instances on the fly without your knowledge. How can your synthetic scripts keep up with these rapid and dynamic changes?

More importantly, when the performance and availability of an application are impacted, how can you easily isolate the root cause, much less understand how end users are affected, when synthetic monitoring is often siloed from other monitoring data? When changes occur, synthetic monitoring may become unreliable, so other tools are needed to validate issues as they arise until the synthetic scripts can be updated. There’s got to be an easier way. Pitfall #4: traditional synthetic monitoring cannot keep up with dynamic and continuously changing applications.

Mobile is now the leader of online traffic!

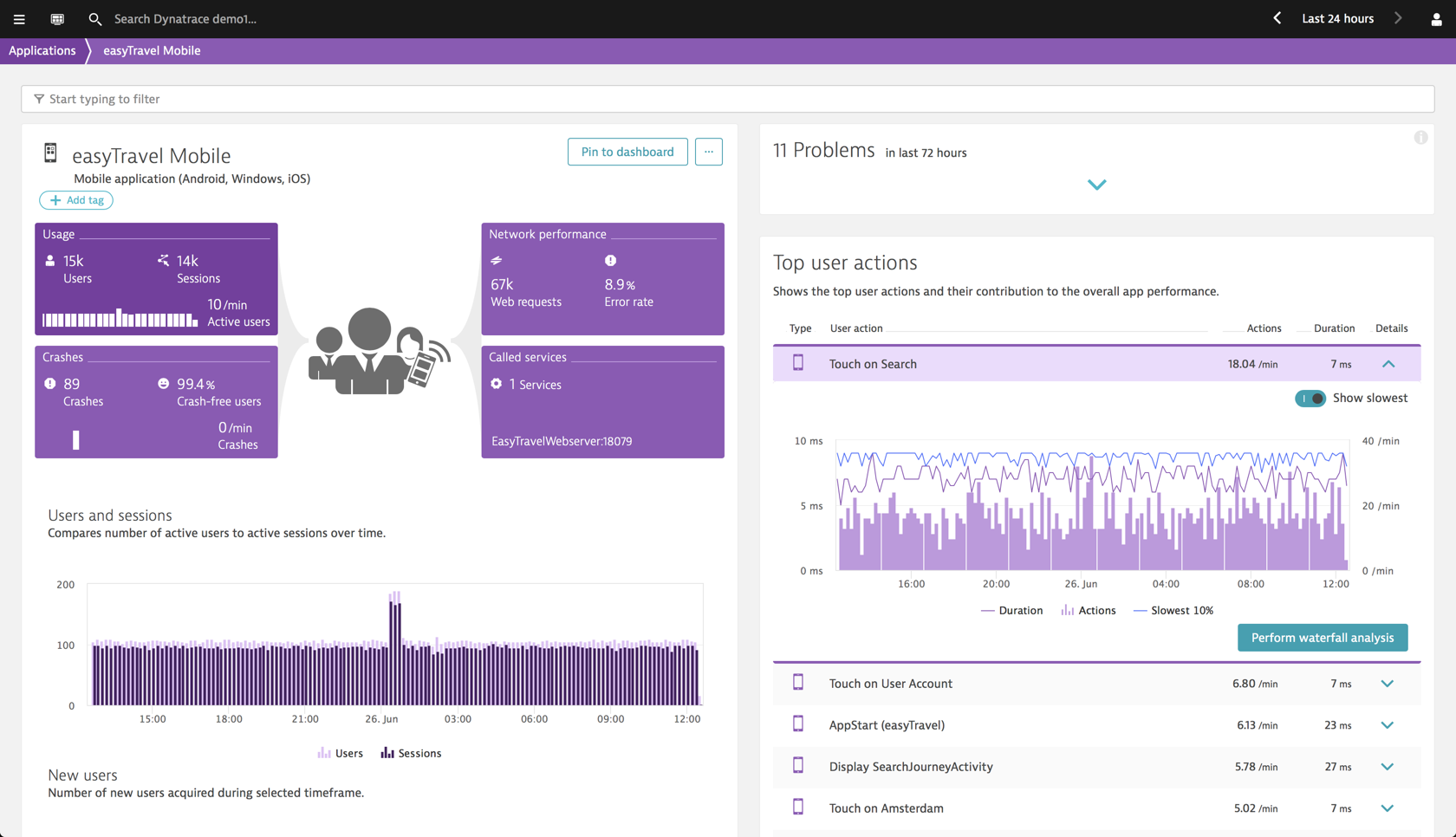

At the end of 2016, mobile devices surpassed desktop in web traffic for the first time…and that upward trend has continued since. StatCounter’s latest data show that 57% of all traffic comes from mobile and tablet devices…and for some industries, like travel and hospitality, it is even higher! This means that mobile experiences are now the leading influence on how your users perceive your brand and whether they will continue using your service or product. If monitoring your mobile visitors’ digital experience is not a key component of your monitoring strategy, you are likely missing at least half of your traffic and users.

Synthetic monitoring is great for keeping an eye on the availability of the APIs your mobile apps use. It is also good at simulating different devices and wireless speeds, but it can’t provide real insight into your mobile users’ actual experience or their over-the-air carrier performance. Any mobile monitoring strategy needs to provide holistic insight into the performance, availability, and errors (crashes, JS errors, etc.) of both mobile apps and mobile-optimized (or responsive) sites. Pitfall #5: traditional synthetic monitoring cannot provide adequate coverage of your mobile users’ experience.

User expectations are changing!

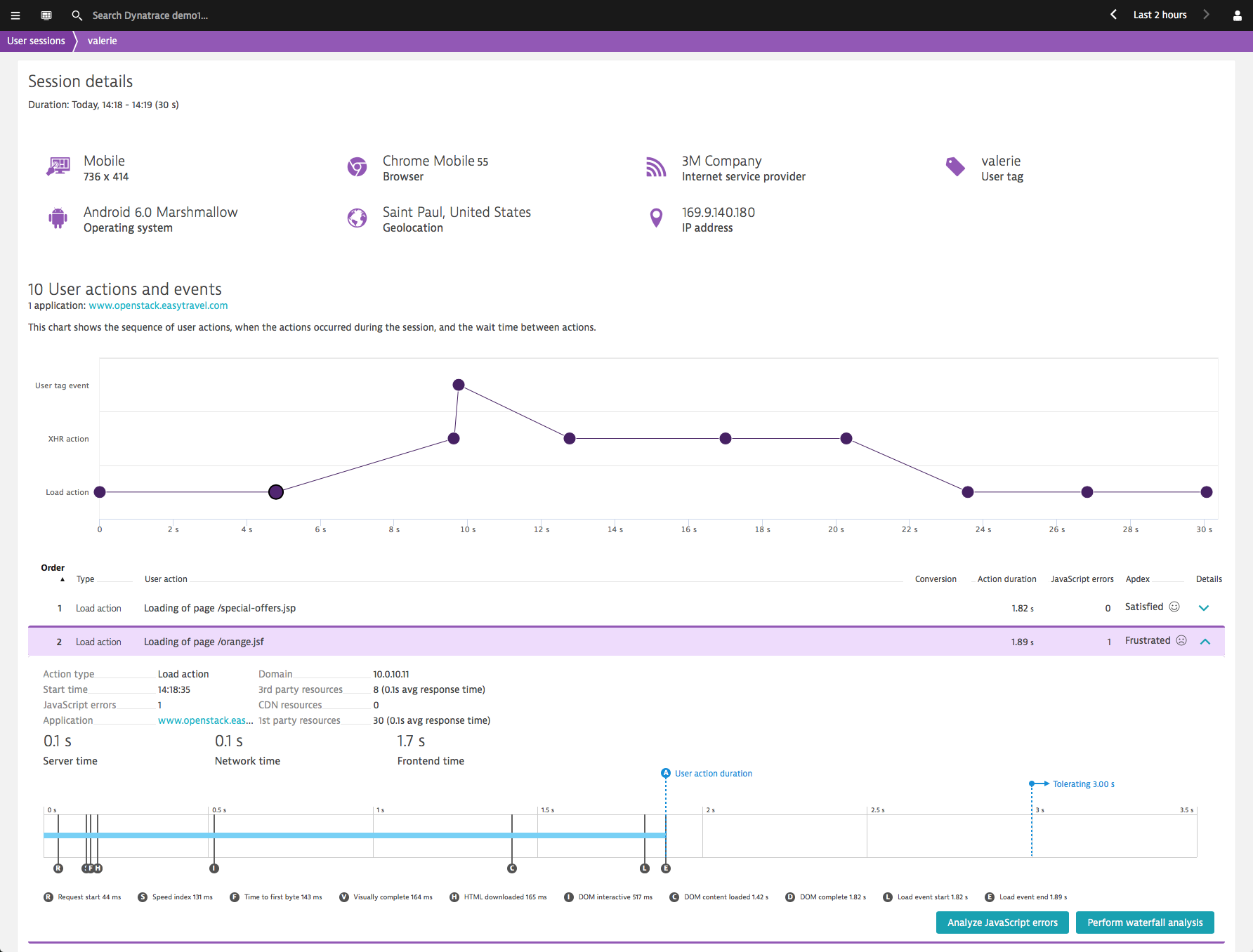

The online equivalent of customer service is no longer just a high-performing site; it now requires delivering engaging, personalized content. The latest research shows that 88% of companies find their customers expect personalized digital experiences, where an application remembers your preferences, makes intelligent recommendations, and provides a seamless experience across devices and services. To deliver these highly customized journeys, companies rely on a complex environment of different technology stacks, 3rd-party integrations, big-data analytics, and on-demand content. We’re finding that many websites have up to 2/3 of their content coming from 3rd parties, and a single web transaction can touch up to 35 different technologies!

It is next to impossible to record and simulate every step of these unique journeys…and what if your highest-value customer out of hundreds had a negative experience? Synthetic monitoring is, by definition, static and predictable, whereas dynamic applications are anything but. A modern digital experience monitoring (DEM) strategy needs to tightly integrate the capture of these dynamic experiences with the consistency of baselining key user journeys. Pitfall #6: traditional synthetic monitoring is limited to the scripted journeys you record, missing hundreds or even thousands of other important user journeys.

Real user monitoring fills in the coverage gap!

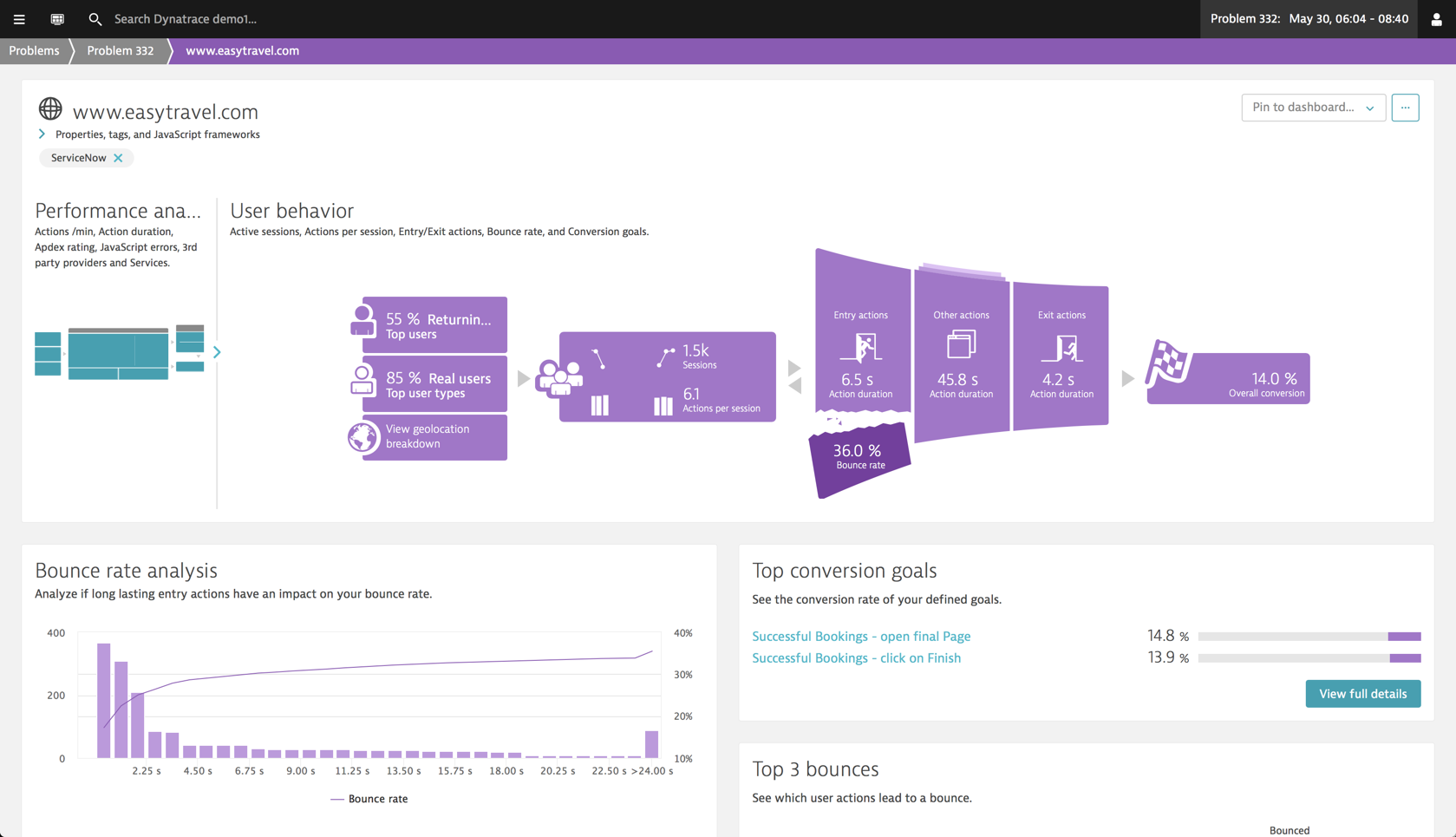

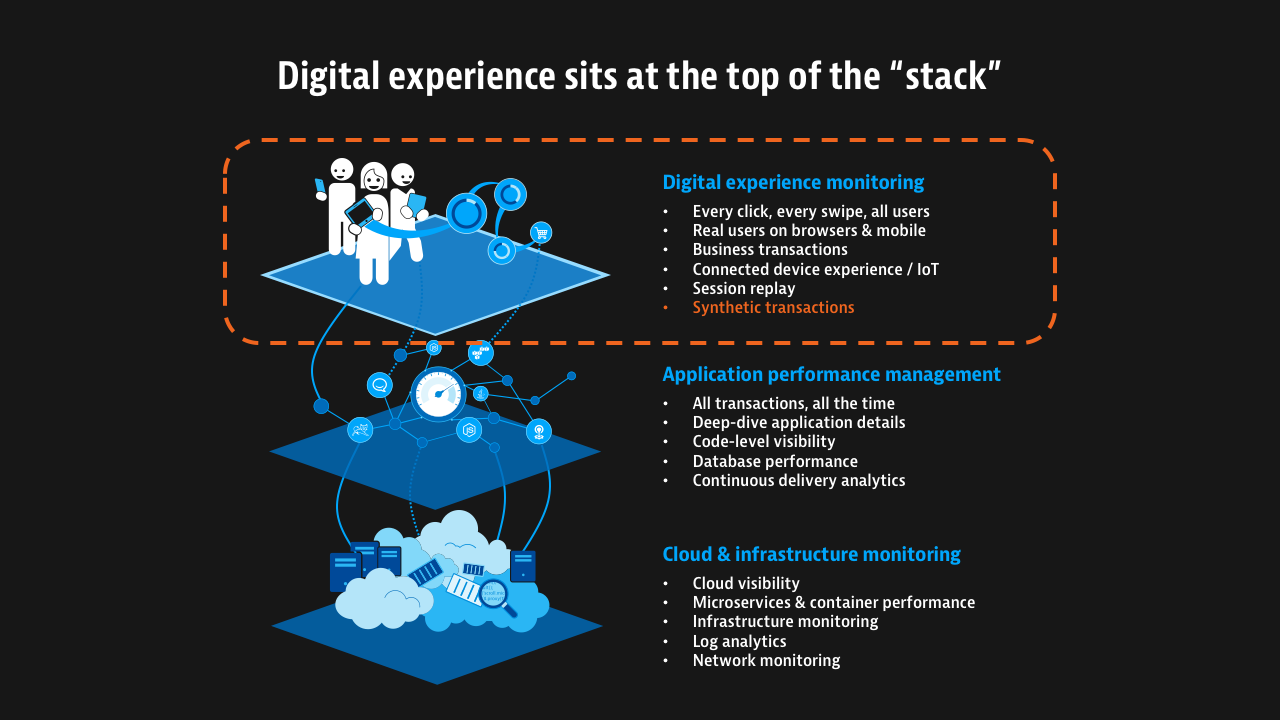

100% coverage of your apps, visitors, and journeys is possible with Real User Monitoring (RUM)! RUM has been around for years, and Dynatrace has been a leader in providing this capability with our User Experience Management (UEM) product. However, with Dynatrace’s 3rd-generation platform, RUM is tightly integrated with synthetic monitoring and our full-stack monitoring suite. In fact, as a core component of full-stack monitoring, RUM sits at the top of the stack and powers the most important part of our AI-based problem engine: determining whether users are being affected and, if so, who they are. With this intelligence in hand, you can prioritize your response (by value, scope, geography, etc.) and, more importantly, proactively engage your impacted users before they call you!

It is also possible to understand how performance issues influence your customers’ behavior (conversion drops, reduced activity, etc.) so you can take proactive action within your application or business processes. (Google has a lot to say about how performance impacts conversions.) You can also validate whether the synthetic journeys you’ve set up accurately reflect what your users do in real life. Case in point: one automotive manufacturer learned they were not monitoring the number one entry point for their car configurator, a key conversion objective they wanted to monitor! With synthetic monitoring, your visibility is only as broad as the number of scripts you can manage and afford, but making RUM a key part of your digital experience monitoring strategy can provide coverage where synthetic is blind. Bonus pitfall: traditional synthetic monitoring can leave you blind to your most important asset…your customers, but RUM can fill the gap!

This is why Dynatrace set out to redefine monitoring. Monitoring logs, processes, network health, and code-level performance is great and super important…but all of this is meaningless if you don’t put the customer at the center of your monitoring strategy. Organizations using synthetic monitoring understand this and know the importance of monitoring the customer experience.

Unfortunately, with the rapid pace of software releases and increasingly dynamic applications and infrastructures, synthetic monitoring alone cannot keep up. As more services and online activity move to mobile and IoT devices, it is becoming more difficult (dare I say impossible) for synthetic monitoring to accurately and proactively alert you to user experience problems. Finally, synthetic monitoring has always struggled with dynamic web applications, so most organizations limit their scripts to a few key user journeys, hoping that this will be enough. Combined, all of this makes it difficult to have full visibility into the health and performance of your customers’ experience when synthetic monitoring is disconnected from the rest of your monitoring ecosystem…and it is why Dynatrace has done something about it!

Our new, all-in-one monitoring solution provides 100% coverage of your applications and users so you can gain new insights into your customers.

With Dynatrace, you can use a single platform to analyze application performance throughout your application’s full stack, down to each individual transaction across all layers and technologies, saving time and money because you don’t have to jump between tools. Dynatrace’s modern approach to digital experience monitoring, tightly integrated with our application and infrastructure/cloud monitoring capabilities, means you can detect problems, understand who’s impacted, and automatically determine the root cause so you can focus on your business and customers, not the monitoring tool.

Want to see it? Check out this recorded webinar I hosted this week.

If you are new to Dynatrace, I invite you to try it for free. If you are already a synthetic customer, contact your Account Executive or Customer Success Manager who can set you up with an expanded trial. Let’s do this and never get stuck in pitfalls or jungles again! (Looks like even Pitfall Harry has been able to escape with Activision pulling the game from the iOS App Store.)
