A customer’s interaction with your online services sets the stage for their experience of doing business with you. If your digital experience is slow, unavailable, or difficult to work with, your risk of losing a customer to a competitor increases. Real user monitoring can help you catch these issues before they impact the bottom line.

Modern digital services are complex and will sometimes fail—that’s unavoidable. What you can avoid is having your customers discover those failures before you do.

What is real user monitoring?

Real user monitoring (RUM) collects detailed data about a user’s interaction with an application. Data collected on load actions can include navigation start, request start, and speed index metrics.

A user session, also known as a click path or user journey, is a sequence of actions a user takes while working with an application. User sessions can be highly varied, even within a single application. For example, one user may fill in several fields, click multiple buttons, and upload a file, while another may click several other buttons and fail to upload the file. With RUM, you can collect data on each user action within a session, including the time required to complete the action, so you can begin to identify patterns and see where to make improvements.
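
As a rough illustration of working with per-action timing data, the sketch below groups recorded actions by name and reports the median duration of each. The `UserAction` record, its field names, and the sample values are assumptions made for this example, not the data model of any particular RUM product.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class UserAction:
    session_id: str      # hypothetical identifier for one user session
    name: str            # e.g. "fill form" or "upload file"
    duration_ms: float   # time the action took to complete


def median_duration_by_action(actions):
    """Group actions by name and return the median duration of each."""
    durations = defaultdict(list)
    for action in actions:
        durations[action.name].append(action.duration_ms)
    return {name: median(values) for name, values in durations.items()}


# Made-up sample data covering three sessions.
actions = [
    UserAction("s1", "fill form", 120.0),
    UserAction("s1", "upload file", 900.0),
    UserAction("s2", "fill form", 180.0),
    UserAction("s2", "upload file", 4200.0),
    UserAction("s3", "upload file", 1100.0),
]

summary = median_duration_by_action(actions)
slowest = max(summary, key=summary.get)
print(slowest, summary[slowest])
```

The median is used here rather than the mean so that a single very slow upload does not dominate the picture; other summaries, such as percentiles, may suit your data better.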

Ideally, a solution should record all user actions to have a complete picture of a user’s experience. However, only highly scalable real user monitoring solutions can collect data on all user actions, while less scalable tools have to sample user actions and make inferences from partial data.
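
To make the blind-spot risk concrete, here is a deliberately simplified sketch that compares full capture with 1-in-10 sampling. The durations, the 3,000 ms slowness threshold, and the fixed-interval sampling scheme are all assumptions chosen so that the result is reproducible; real tools may sample differently and could catch some of these outliers.

```python
def slow_actions(durations_ms, threshold_ms=3000):
    """Return the durations that exceed the slowness threshold."""
    return [d for d in durations_ms if d > threshold_ms]


# 1,000 hypothetical action durations: mostly 200 ms, with five slow outliers.
durations = [200] * 1000
for index in (137, 333, 512, 768, 901):
    durations[index] = 5000

# Full capture keeps every action; this sampler keeps indexes 0, 10, 20, ...
full_capture = durations
sampled = durations[::10]

print(len(slow_actions(full_capture)))  # all five outliers are visible
print(len(slow_actions(sampled)))       # none fall on a sampled index
```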

How does real user monitoring work?

Real user monitoring works by injecting code into an application to capture metrics while the application is in use. Browser-based applications are monitored by injecting JavaScript code that can detect and track page loads as well as XHR requests, which change the UI without triggering a page load.

Native mobile applications are monitored by adding the monitoring library directly to your mobile application package. Monitoring data is streamed from the application to a data store where it can be queried and visualized. Some real user monitoring tools include automatic instrumentation that makes them very quick and easy to set up, whereas others require more involved manual configuration.
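
The flow from instrumented application to queryable data store can be sketched in a few lines. Everything here is hypothetical: the `Beacon` fields, the JSON payload shape, and the `InMemoryStore` class stand in for whatever a real agent and backend would use.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Beacon:
    session_id: str
    action: str          # "page_load" or "xhr" in this sketch
    url: str
    duration_ms: float


class InMemoryStore:
    """Stand-in for the data store that receives monitoring data."""

    def __init__(self):
        self._rows = []

    def ingest(self, payload: str) -> None:
        # Beacons arrive as JSON text, as they might over HTTP.
        self._rows.append(json.loads(payload))

    def query(self, action: str):
        """Return all stored rows for one action type."""
        return [row for row in self._rows if row["action"] == action]


store = InMemoryStore()
for beacon in (
    Beacon("s1", "page_load", "/catalog", 840.0),
    Beacon("s1", "xhr", "/api/cart", 95.0),
    Beacon("s2", "page_load", "/checkout", 1310.0),
):
    store.ingest(json.dumps(asdict(beacon)))

page_loads = store.query("page_load")
print([row["url"] for row in page_loads])
```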

Real user monitoring vs synthetic monitoring

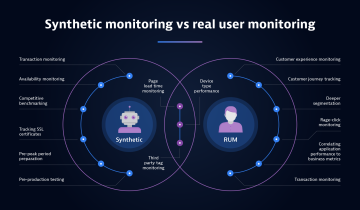

Real user monitoring is one of two kinds of user experience monitoring. The other is synthetic monitoring. Synthetic monitoring uses scripts to interact with an application instead of actual users.

While RUM can capture all the nuances of your real users, providing a true picture of their experience, synthetic monitoring is great for proactive simulation and testing of the expected user experience.

Especially useful is the ability of synthetic monitoring to collect data about a specific metric, such as page load time, at regular time intervals. IT teams can also deploy synthetic agents in different geographical regions to detect variations in application performance based on geolocation.

Synthetic monitoring is also useful for developing baselines of performance. Since the same kind of operation is performed consistently over time, you can collect sufficient data to determine an expected level of performance for a particular application and configuration.
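
One simple way to turn consistent synthetic measurements into a baseline is to take their mean and standard deviation and flag values that sit well above that range. This is a minimal sketch under stated assumptions: the sample load times are invented, and the three-standard-deviation tolerance is an illustrative choice rather than a recommended setting.

```python
from statistics import mean, stdev


def build_baseline(samples_ms):
    """Return (mean, standard deviation) of synthetic measurements."""
    return mean(samples_ms), stdev(samples_ms)


def is_regression(value_ms, baseline, tolerance=3.0):
    """Flag a value more than `tolerance` standard deviations above the mean."""
    average, spread = baseline
    return value_ms > average + tolerance * spread


# Hypothetical page load times (ms) from the same scripted check over time.
synthetic_load_times = [1180, 1220, 1195, 1210, 1205, 1190, 1200, 1215]
baseline = build_baseline(synthetic_load_times)

print(is_regression(1230, baseline))  # within the normal range
print(is_regression(1900, baseline))  # well above the baseline
```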

Synthetic monitoring has different use cases from real user monitoring, but they both play an important role in application performance monitoring (APM). Especially when used together, RUM and synthetic monitoring provide comprehensive insight into the digital experience.

What are some examples of real user monitoring?

Virtually any application with a user interface can benefit from regular real user monitoring. Some examples include:

- Monitoring a retailer’s online catalog to detect any increase in page load times

- Analyzing a clinician’s clickstream when using an electronic medical record system to improve the efficiency of data entry

- Detecting differences in performance when an application is accessed by different kinds of mobile devices

- Tracking users’ paths through the conversion funnel and using that data for attributing revenue

- Providing insight into the service latency to help developers identify poorly performing code

What are the benefits and limitations of RUM?

Real user monitoring provides substantial benefits. The primary benefit of RUM is that it provides insights into users’ actual experiences when interacting with an application. This can help to identify performance issues before too many users experience them. Teams can use data collected by RUM to verify that service level agreements (SLAs) are being met. And UX designers can use that data to better understand how users interact with an application and how developers can streamline the interface. Increasingly, AI-powered RUM platforms go further, shifting teams from reactive issue detection to proactive prediction by flagging anomalies and likely failure points before users are affected.

There are also some limitations of real user monitoring. For example, because real user actions vary widely, it is difficult to use RUM to generate baselines of performance over time. Also, RUM generates large volumes of data, so it is important to have query and visualization tools that allow RUM users to quickly identify key information latent in the data.

It is also difficult to have adequate visibility into the context of the user’s experience in the moment. RUM solutions can benefit from the ability to have a movie-like replay to see exactly what the user does and understand what’s happening behind the scenes.

RUM depends on users actively engaging with a service. When there is low usage — for example, during off-hours — there is little RUM data to work with. Similarly, if you are about to deploy a new version of a service, you won’t have RUM data on the new version until customers start working with it. This can undermine one of the top reasons to monitor services, which is to help detect problems before your customers do. This is one area where synthetic monitoring can help to fill the gaps.

What are the common use cases for RUM?

RUM supports a wide range of observability and business goals across the application lifecycle:

- Release quality validation. Confirm that new deployments perform as expected in production, catching regressions in load times, error rates, or user flows before they reach a wide audience.

- Availability and performance monitoring. Continuously track whether your application is accessible and responsive across regions, devices, and network conditions — and get alerted when it isn't.

- Troubleshooting. When issues arise, RUM data, especially when combined with session replay and distributed tracing, gives teams the full context needed to identify root cause quickly.

- User satisfaction monitoring. Correlate performance metrics with satisfaction signals such as rage clicks, session abandonment, or voice-of-customer data to understand how technical experience drives user sentiment.

- Customer complaint resolution. Replay a specific user's session to see exactly what they encountered, turning vague support tickets into clear, reproducible evidence.

- User journey and conversion analysis. Track how users move through key flows, such as onboarding, checkout, and form completion, and identify where friction or drop-off is costing you conversions.

- AI journey reliability and trust. As AI-powered features become embedded in applications, RUM extends to cover model latency, agent response times, and AI-driven UI flows, ensuring these interactions meet the same performance and reliability standards as the rest of the experience.
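
The user journey and conversion analysis use case above can be sketched as a small funnel calculation. The step names and sessions below are invented for illustration, and the rule that a step only counts once the previous steps are complete is an assumption of this sketch.

```python
FUNNEL = ["view cart", "enter shipping", "enter payment", "confirm order"]


def funnel_counts(sessions):
    """Count sessions that reached each step after completing the prior ones."""
    counts = [0] * len(FUNNEL)
    for steps in sessions:
        position = 0
        for step in steps:
            if position < len(FUNNEL) and step == FUNNEL[position]:
                counts[position] += 1
                position += 1
    return counts


def biggest_drop_off(counts):
    """Return the step name after which the most sessions were lost."""
    losses = [counts[i] - counts[i + 1] for i in range(len(counts) - 1)]
    return FUNNEL[losses.index(max(losses))]


# Hypothetical sessions, each a list of the steps the user completed.
sessions = [
    ["view cart", "enter shipping", "enter payment", "confirm order"],
    ["view cart", "enter shipping"],
    ["view cart", "enter shipping"],
    ["view cart"],
    ["view cart", "enter shipping", "enter payment"],
]

counts = funnel_counts(sessions)
print(counts)
print(biggest_drop_off(counts))
```

In this made-up data, most sessions are lost after the shipping step, which is where a team would look first for friction.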

What are the best practices for implementing RUM?

To get the greatest benefit from real user monitoring, keep several best practices in mind.

- Establish business objectives for how you use RUM. These should be quantifiable goals that you can use data to help you achieve. For example, you may want to decrease abandoned carts by 10%. Having a specific goal like this in mind can help to focus development efforts and help to determine what aspects of user behavior to look at.

- Link RUM business objectives to technical goals. Technical goals should be measurable indicators of progress toward business goals. For example, RUM is often used to measure latency, and the relationship between longer latencies and user disengagement is well documented. When using RUM to measure technical performance, keep in mind how that ties back to overall business goals.

- Measure RUM for mobile and web-based apps. Be sure to include mobile RUM as well as web-based RUM. The performance characteristics of mobile devices can vary widely. RUM can help you identify performance problems in mobile apps and devise ways to mitigate those issues.

- Integrate observability data and extend RUM to cover AI interactions. Integrating data from other observability telemetry, such as monitoring, logging, and distributed tracing, can help identify the root cause when problems do occur. And as AI-powered features become standard in applications, extend RUM to cover those interactions, too: model latency, agent response times, and AI-driven UI flows all affect the user experience.

What should you look for in a RUM solution?

When choosing a real user monitoring solution, maximize your value by looking for a solution that combines powerful RUM capabilities with an all-in-one observability platform. Observability gives you comprehensive front-to-back insight into what your users are experiencing and why it’s happening.

The optimal RUM solution is integrated with AI-powered distributed tracing that enables observability into complex, distributed cloud-native applications. Integration with backend data from monitoring and logging services lets you trace problems in the user experience back to their root cause in infrastructure or application issues. This end-to-end observability enables you to quickly take action and deliver better digital experiences. Leading platforms now support agentic workflows, where AI can automatically investigate incidents, correlate signals, and trigger remediation, reducing the time from detection to resolution.

In addition, look for solutions that capture a comprehensive view of users’ sessions. Tools that only sample data can leave you with blind spots in your users’ experiences. Without sufficient coverage, sampling can miss instances of poor performance and could delay your time to detect a significant issue. Furthermore, the ability to enhance RUM data with business context like revenue and voice-of-customer data enables you to understand the business impact of application performance and user experience so you can drive better business outcomes.

A solution that also includes session replay capabilities allows you to understand the full context of a user’s actions. Session Replay creates video-like playbacks of a recorded user session, allowing for a level of visibility into user sessions that isn’t possible when viewing metrics out of context. Session Replay should support fast deployment life cycles and protected resources. Replay can help you understand the context of the user’s experience, which in turn can guide new features or performance improvements.

Real user monitoring with Dynatrace

Real user monitoring gives you visibility into what customers are actually experiencing. The real value comes from connecting those user sessions to the services, infrastructure, and code behind them – automatically.

Dynatrace delivers full-fidelity real user monitoring without sampling and correlates every interaction with distributed traces, logs, and cloud infrastructure data. With AI-driven analysis and Session Replay, teams can detect issues, understand root cause, and prioritize fixes based on real business impact.

With Dynatrace, you can go beyond monitoring user experience: you understand it in context.

FAQs: What is real user monitoring?

What is the difference between RUM and synthetic monitoring?

RUM captures data from actual users interacting with your application in real time, reflecting the true diversity of user behavior, devices, and network conditions. Synthetic monitoring, by contrast, uses scripted bots that simulate predefined user journeys at scheduled intervals. RUM is best for understanding real-world experience; synthetic monitoring is best for proactive testing, establishing baselines, and monitoring when real traffic is low.

How does RUM affect application performance?

When implemented correctly, RUM has a negligible impact on application performance. The JavaScript agents used for browser RUM are lightweight and asynchronous, meaning they do not block page rendering or user interactions. For mobile applications, the monitoring library adds minimal overhead.

What data does RUM collect?

RUM collects a wide range of metrics including page load times, resource timing (DNS, TCP, TTFB), JavaScript errors, user actions (clicks, form submissions, navigation), session duration, geographic location, device and browser information, and custom business events. It also captures Core Web Vitals, Google's standardized UX metrics, including Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS).
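
As an illustration of how Core Web Vitals data might be evaluated, the sketch below rates a metric against Google's published "good" and "poor" thresholds, using the 75th percentile of collected samples. The sample values and helper names are assumptions for this example.

```python
from statistics import quantiles

# Published Core Web Vitals thresholds: (upper bound for "good", upper bound
# for "needs improvement"). Anything above the second value is "poor".
THRESHOLDS = {
    "LCP": (2500, 4000),   # milliseconds
    "INP": (200, 500),     # milliseconds
    "CLS": (0.1, 0.25),    # unitless layout shift score
}


def rate(metric, value):
    """Classify a metric value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def p75(values):
    """Return the 75th percentile of the collected samples."""
    return quantiles(values, n=4, method="inclusive")[2]


# Hypothetical LCP samples (ms) from five page loads.
lcp_samples = [1800, 2100, 2300, 2600, 3900]
print(rate("LCP", p75(lcp_samples)))
```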

Is RUM data private and compliant with data regulations?

Yes. Leading RUM solutions, including Dynatrace, offer robust data privacy controls to ensure compliance with regulations such as GDPR, CCPA, and HIPAA. These controls include IP address masking, content masking in session replay, data residency options, and configurable data retention policies. Organizations can choose exactly what data is captured and how it is stored.
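
IP address masking, one of the controls mentioned above, can be approximated by truncating an address to its network prefix before it is stored. This is a sketch only: the prefix lengths (24 bits for IPv4, 48 bits for IPv6) are assumptions chosen for illustration, not what any regulation requires or how any specific product implements masking.

```python
import ipaddress


def mask_ip(address: str) -> str:
    """Zero out the host portion of an IP address before storage."""
    ip = ipaddress.ip_address(address)
    # Assumed prefix lengths for this sketch: /24 for IPv4, /48 for IPv6.
    prefix = 24 if ip.version == 4 else 48
    network = ipaddress.ip_network(f"{address}/{prefix}", strict=False)
    return str(network.network_address)


print(mask_ip("203.0.113.77"))         # 203.0.113.0
print(mask_ip("2001:db8:abcd:12::1"))  # 2001:db8:abcd::
```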

Can RUM integrate with other observability tools?

Absolutely. RUM data is most powerful when combined with backend observability — including distributed tracing, log analytics, and infrastructure monitoring. Dynatrace’s platform unifies RUM with full-stack observability, AI-driven root cause analysis (Davis AI), and business analytics, giving teams a single source of truth from the browser all the way to the database.