Provisioning, updating, monitoring, and decommissioning thousands of computing endpoints, such as virtual machines (VMs), containers, and bare-metal servers, is no longer possible with manual processes. Instead, we should treat all of these components and processes as code.

We’re no longer living in an age where large companies require only physical servers, with similar and rarely changing configurations, that can be manually maintained in a single data center.

We’re currently in a technological era where we have a large variety of computing endpoints at our disposal, such as containers, Platform as a Service (PaaS), serverless, virtual machines, and APIs, with more being added continually. Today’s increasing financial, availability, performance, and innovation requirements mean that applications need to be geographically dispersed across dynamic, constantly changing environments. It is simply not possible to provision, update, monitor, and decommission them with manual processes alone.

This is even more apparent given the ever-increasing infrastructure complexity enterprises are dealing with.

At Dynatrace, we believe that monitoring and performance should be automated processes that can be treated as code without any manual intervention. Applying the “Everything as Code” principles can greatly help achieve that.

What is “Everything as Code”?

Everything as Code (EaC) can be described as a methodology or practice that extends the idea of how applications are treated as code and applies these concepts to all other IT components like operating systems, network configurations, and pipelines.

The current state of tools

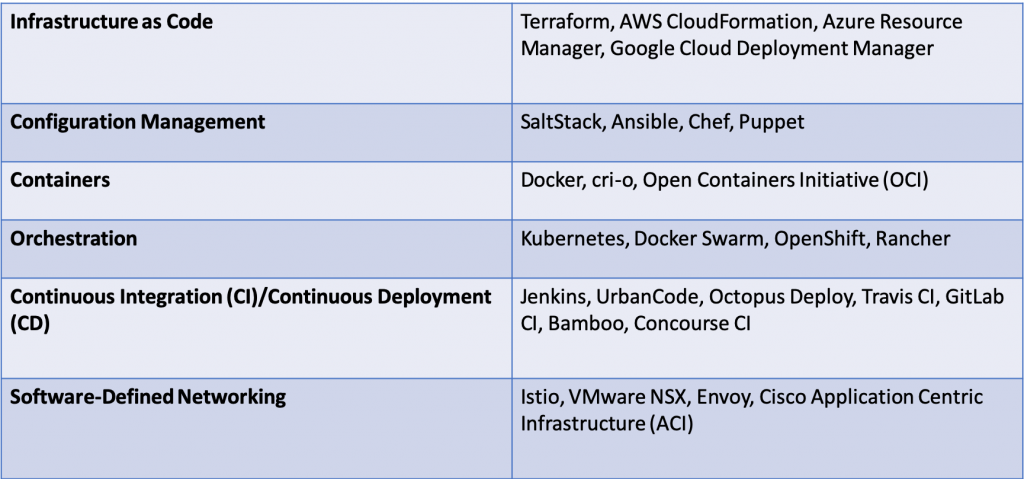

It’s important to note that the “Everything as Code” methodology spans a wide variety of tools, many of them open source, across Infrastructure as Code, configuration management, operations, security, and policies. Each of these can be leveraged in the transition to Everything as Code, and treating these processes as code helps ensure that best practices are followed. Some of the most popular tools can be grouped into these same categories.

All of these tools are available to help us transition to Everything as Code. Getting there, however, requires a cross-team cultural shift in mindset.

Once the respective teams are on board and ready to follow these principles, operations processes can be taken to the next level of efficiency. This frees teams to focus on problems that the extensive tooling already available has not yet solved.

Benefits of Everything as Code

Using Everything as Code principles has a number of benefits, including:

- Knowledge and clear understanding of the environment: Have complete insight into your infrastructure, configurations, and policies without the need to rely on manually updated documents or network diagrams.

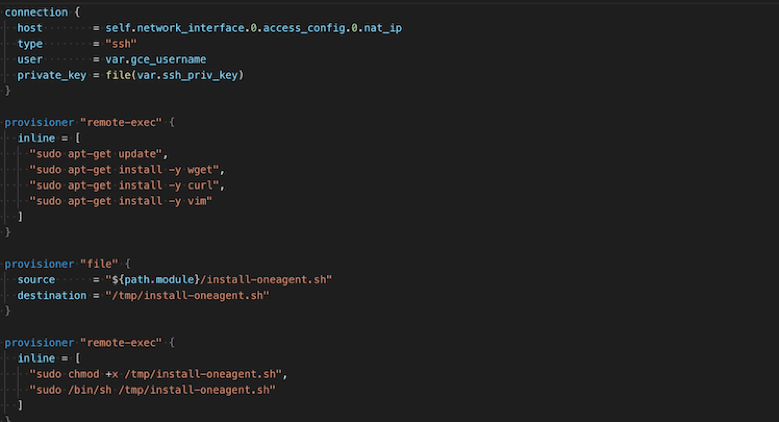

- Automated Monitoring: Applying the Everything as Code methodology to monitoring will result in the ability to automatically deploy and update components like the OneAgent and ActiveGate without the need for any manual intervention.

- On-demand infrastructure: The ability to deploy infrastructure whenever it’s required. This infrastructure can be integrated into a DevOps pipeline to dynamically build and destroy environments as the pipeline executes.

- GitOps: This model provides a bridge between development and production in which Git is the source of truth. All code can be analyzed for potential errors (linting), compared, tested, and validated, giving teams more visibility, better collaboration, and an overall better experience. Since code is now version controlled, rollbacks should be easy to apply.

- Consistency: Migrations, deployments, and configuration changes should be simple and easy to replicate. Setting up a deployment on a specific cloud provider or environment should produce the same configuration as targeting a different cloud provider or another environment.
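The on-demand, GitOps, and consistency points above all rest on the same idea: the desired state lives in version control, and tooling works out what has to change. The following is a minimal Python sketch of that idea, not any specific tool's implementation. The `Endpoint` type, its fields, and the `plan` function are hypothetical names introduced only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """A hypothetical compute endpoint described as data rather than manual steps."""
    name: str
    kind: str  # for example "vm" or "container"
    size: str


def plan(desired: list[Endpoint], actual: list[Endpoint]) -> dict[str, list[str]]:
    """Compare the version-controlled desired state with what is currently running."""
    want = {e.name: e for e in desired}
    have = {e.name: e for e in actual}
    return {
        "create": sorted(n for n in want if n not in have),
        "update": sorted(n for n in want if n in have and want[n] != have[n]),
        "delete": sorted(n for n in have if n not in want),
    }


# Desired state, as it would be committed to Git.
desired = [
    Endpoint("web-1", "vm", "large"),
    Endpoint("cache-1", "container", "small"),
]
# Actual state, as it would be reported by the environment.
actual = [
    Endpoint("web-1", "vm", "small"),
    Endpoint("old-batch", "vm", "small"),
]

changes = plan(desired, actual)
print(changes)
# {'create': ['cache-1'], 'update': ['web-1'], 'delete': ['old-batch']}
```

Because the plan is computed from data, running it against an environment that already matches the desired state yields no changes, and rolling back is a matter of planning against an earlier commit.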

“Everything as Code” methodology in Dynatrace

At Dynatrace we embrace Everything as Code, starting with our own culture and processes, in which we use a wide variety of tools that allow teams to keep as much as possible codified. We went from managing five EC2 instances in 2011 to around 1,000 in 2017. It was clear that in order to be successful in a changing digital world we needed to transition to using code for our operations instead of just doing it manually.

These tools and principles helped us build our SaaS solution in the Amazon Web Services (AWS) cloud. We believe that operations should be as automated as possible, and this approach has led to significantly lower downtime. Our teams can now put more focus on creating fires that we can prepare for, instead of putting them out after they happen.

Many of our principles are based on Autonomous Cloud Management (ACM), a methodology built around Everything as Code. It focuses on the transformation from DevOps to NoOps, on automation, and on leveraging code for automated deployment and configuration management of the OneAgent, ActiveGate, and Managed Clusters. But we don’t stop at automated monitoring. We also offer the tools to codify automated performance quality gates into your pipelines using Keptn Pitometer.
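To make the idea of a codified performance quality gate concrete, here is a minimal Python sketch. It is not the Keptn Pitometer API. The `Objective` type, the `evaluate_gate` function, and the metric names and thresholds are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    """A hypothetical objective: the metric must not exceed max_value."""
    metric: str
    max_value: float


def evaluate_gate(objectives, measurements):
    """Return (passed, failed_metrics) for one pipeline run.

    A metric fails when it is missing from the measurements or above its limit.
    """
    failed = [
        o.metric
        for o in objectives
        if measurements.get(o.metric) is None or measurements[o.metric] > o.max_value
    ]
    return (not failed, failed)


# Objectives live in the repository next to the application code.
objectives = [
    Objective("response_time_ms", 250.0),
    Objective("error_rate_pct", 1.0),
]

passed, failed = evaluate_gate(
    objectives, {"response_time_ms": 180.0, "error_rate_pct": 2.5}
)
print(passed, failed)
# False ['error_rate_pct']
```

A pipeline step could stop the promotion of a build whenever `passed` is false, so that the gate is enforced the same way on every run.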

At Dynatrace, we understand how critical monitoring can be and how changes in its configuration can cause significant problems, especially in production environments. We wanted to take this a step further and apply the Everything as Code methodology to monitoring configurations as well. For this reason, we’ve developed the Configuration API which allows Dynatrace users to manage their monitoring setup using the Dynatrace API.
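As a rough illustration of managing monitoring configuration as code, the Python sketch below compares a version-controlled configuration with the live one and builds, without sending, the HTTP request that would apply it. Everything specific here is an assumption: the base URL, the path, the setting names, and the `Api-Token` authorization scheme are placeholders to check against the API documentation, and `drifted_keys` and `build_update_request` are hypothetical helpers.

```python
import json
import urllib.request


def drifted_keys(versioned: dict, live: dict) -> list:
    """Return the settings whose live value differs from the versioned one."""
    return sorted(k for k in versioned if live.get(k) != versioned[k])


def build_update_request(base_url, path, token, config):
    """Build (but do not send) a PUT request that would apply `config`."""
    body = json.dumps(config, sort_keys=True).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/{path.lstrip('/')}",
        data=body,
        method="PUT",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Api-Token {token}",  # assumed scheme, verify in the docs
        },
    )


# Configuration as committed to version control (placeholder settings).
versioned = {"alert_threshold_ms": 500, "enabled": True}
# Configuration as currently active in the environment (placeholder values).
live = {"alert_threshold_ms": 800, "enabled": True}

drift = drifted_keys(versioned, live)
request = build_update_request(
    "https://example.invalid", "/config/example-setting", "PLACEHOLDER_TOKEN", versioned
)
print(drift)
print(request.get_method(), request.full_url)
```

Keeping the request construction separate from sending it makes the change reviewable and testable in a pipeline before anything touches a production environment.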

Interested in next steps?

There is a range of next-generation AIOps technologies that will become more popular and help manage operations, monitoring, and security more efficiently and in an even more automated fashion. Our AI engine, Davis®, makes Dynatrace a successful next-generation technology and is enhanced with advanced root-cause analysis capabilities and automated dependency detection.

Shifting to the Everything as Code methodology sooner rather than later will help your organization prepare for these newer technologies. Adopting these principles will not only bring extensive benefits to your organization, but will also allow you to stay up to date with the industry and, most importantly, provide a better experience to both your employees and customers.

If you’d like to learn more about how we apply the Everything as Code principle, check out our Autonomous Cloud Enablement. It covers the different ACM building blocks, explains how you can apply many of the principles that Everything as Code offers, and helps your organization through its digital transformation journey.
