What is a canary deployment?

A canary deployment is a release strategy in which a new software version is first rolled out to a small subset of users and then expanded gradually, so that bugs and other issues surface before the full deployment. This limits the risk of a major problem affecting all users.
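To make the "small subset of users" idea concrete, here is a minimal sketch of one common way to choose that subset: hash each user ID into one of 100 buckets and send the lowest buckets to the new version. The function names `in_canary` and `pick_version`, the `canary_percent` parameter, and the use of SHA-256 are illustrative assumptions, not part of any particular deployment tool.

```python
import hashlib


def in_canary(user_id: str, canary_percent: int) -> bool:
    """Return True if this user should be served the canary version.

    Hypothetical helper: hashes the user ID into a bucket from 0 to 99
    and compares it with the rollout percentage.
    """
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    # A stable hash keeps each user in the same bucket across requests.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < canary_percent


def pick_version(user_id: str, canary_percent: int) -> str:
    """Route a user to the 'canary' or 'stable' version (labels are assumed)."""
    return "canary" if in_canary(user_id, canary_percent) else "stable"


if __name__ == "__main__":
    # Widen the rollout step by step and watch the canary share grow.
    users = [f"user-{i}" for i in range(10_000)]
    for percent in (1, 10, 50, 100):
        share = sum(in_canary(u, percent) for u in users) / len(users)
        print(f"canary_percent={percent:3d} -> {share:.1%} of users on canary")
```

Because the bucket depends only on the user ID, a user who is in the canary group at 10% stays in it at 50%, so raising the percentage only adds users and never moves anyone back to the old version mid-rollout. Setting the percentage to 0 acts as a rollback switch in this sketch.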

Keep reading

BLOG POSTUnderstanding continuous integration and continuous delivery (CI/CD)

BLOG POSTUnderstanding continuous integration and continuous delivery (CI/CD) eBookUnpacking DevOps and platform engineering in a cloud-native world

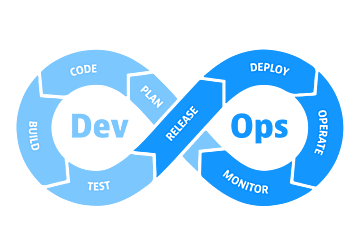

eBookUnpacking DevOps and platform engineering in a cloud-native world Blog postWhat is DevOps?

Blog postWhat is DevOps? EBOOKThe Developer’s Guide to Observability

EBOOKThe Developer’s Guide to Observability

Explore the ways observability supports secure, efficient cloud-native development.