Smart baselining automatically learns "normal" performance

As OneAgent discovers and collects metrics from every component across your entire application topology, artificial intelligence automatically learns how your application performs under load and what constitutes normal performance.

- Zero manual configuration: auto-baselining starts immediately out of the box, without any need to verify thresholds, define rules, or adjust default settings.

- Baselines auto-adapt dynamically as your environment changes.

- Smart baselining results in 90% fewer alerts while still detecting significant anomalies in less than a minute.

- Reduce alarm fatigue, "alert spamming," and manual tuning of alerting rules.

Beware of common baselining pitfalls

Traditional baselining approaches rely on standard deviations, averages, and/or transaction samples to determine normal performance.

- Averages are ineffective because they are too simplistic and one-dimensional. They mask underlying issues by "flattening" performance spikes and dips.

- Static standard deviation formulas work only when the data follows a bell curve, which is rarely the case. The result is false positives.

This is how most APM companies establish performance baselines.
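To make the standard deviation pitfall concrete, here is a minimal sketch using made-up response times. The data set, the "mean plus three sigma" rule, and all variable names are assumptions chosen for illustration, not a description of any vendor's actual algorithm.

```python
import statistics

# Hypothetical response times (ms): 95% fast requests, 5% routinely slower ones.
# This skewed shape is an assumption for illustration; it is not a bell curve.
response_times_ms = [100] * 95 + [400] * 5

mean = statistics.mean(response_times_ms)
sigma = statistics.pstdev(response_times_ms)

# A static "mean + 3 sigma" alert threshold, as a bell-curve-based rule might use.
threshold = mean + 3 * sigma

flagged = [t for t in response_times_ms if t > threshold]
flagged_share = len(flagged) / len(response_times_ms)

print(f"mean={mean:.1f} ms, sigma={sigma:.1f} ms, threshold={threshold:.1f} ms")
print(f"flagged share: {flagged_share:.1%} (a normal curve would predict about 0.1%)")
```

In this sample the routine slow requests all land above the threshold, so a rule calibrated for a bell curve would raise alerts far more often than intended, even though nothing has changed.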

The far more accurate, more dynamic, more useful approach is to use percentiles.

- Looking at percentiles (median and slowest 10%) tells you what's really going on: how most users are actually experiencing your application and site.

- Outliers don't skew baseline thresholds—so you don't get false positives.

This is how Dynatrace determines performance baselines.
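The robustness of percentiles can be sketched with a small comparison. The sample data and the helper name `median_and_p90` are hypothetical, and the 90th percentile is used here as the boundary of the "slowest 10%"; the exact calculation Dynatrace performs is not described in this text.

```python
import statistics

def median_and_p90(samples):
    """Return the median and the 90th percentile of the samples."""
    # quantiles(n=10) returns the 9 cut points between deciles;
    # the last cut point is the 90th percentile.
    deciles = statistics.quantiles(samples, n=10, method="inclusive")
    return statistics.median(samples), deciles[-1]

# Hypothetical response times (ms): 100, 101, ..., 199.
normal_day = [100 + i for i in range(100)]
# Same data, but the slowest request is replaced by a 60-second outlier.
with_outlier = normal_day[:-1] + [60000]

for label, samples in (("normal day", normal_day), ("with outlier", with_outlier)):
    median, p90 = median_and_p90(samples)
    print(f"{label}: mean={statistics.mean(samples):.2f} "
          f"median={median:.1f} p90={p90:.1f}")
```

A single extreme request shifts the mean by several hundred milliseconds, while the median and the 90th percentile stay exactly where they were.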

For further information

Learn more about why averages are not the right approach for application monitoring in this blog post from Michael Kopp

How does auto-baselining work?

Dynatrace doesn’t rely on static thresholds to identify when something isn’t the way it’s supposed to be. Instead, we use fully automatic and dynamic multidimensional baselining.

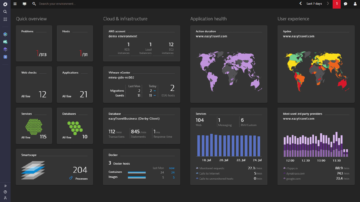

In practice, this means artificial intelligence establishes baselines for all metrics related to the performance of your applications, services, and infrastructure, from the back end through user experience at the browser level.

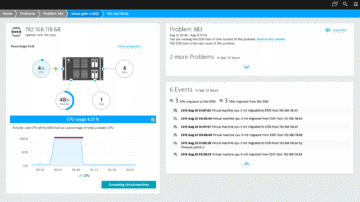

- Machine learning algorithms automatically understand statistical characteristics of response times, failure rates, and throughput.

- Advanced statistical models analyze application behavior, applying different strategies to different metrics.

- Artificial intelligence builds baseline cubes that comprise statistically meaningful combinations of over 10,000 cells of multidimensional reference values across 5 different dimensions: user action, geolocation, browser, operating system, and service method.

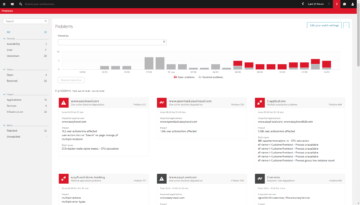

Such intelligent and automatic baselining allows Dynatrace to detect anomalies at a highly granular level and to notify you of problems in real time.
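One way to picture a baseline cube is as a lookup table keyed by the five dimensions named above, with reference values stored per cell. The sketch below is only an illustration of that idea: the `Cell` type, the `build_cube` function, the `MIN_SAMPLES` cutoff, and the choice of median and 90th percentile as reference values are all assumptions, not Dynatrace's implementation.

```python
import statistics
from collections import defaultdict
from typing import NamedTuple

class Cell(NamedTuple):
    """One cell of the cube: a combination of the five dimensions."""
    user_action: str
    geolocation: str
    browser: str
    operating_system: str
    service_method: str

# Hypothetical cutoff for treating a cell as statistically meaningful.
MIN_SAMPLES = 20

def build_cube(observations):
    """Build reference values per cell from (Cell, response_time_ms) pairs."""
    grouped = defaultdict(list)
    for cell, response_time_ms in observations:
        grouped[cell].append(response_time_ms)

    cube = {}
    for cell, samples in grouped.items():
        if len(samples) < MIN_SAMPLES:
            continue  # too little data to derive a trustworthy reference value
        p90 = statistics.quantiles(samples, n=10, method="inclusive")[-1]
        cube[cell] = {"median": statistics.median(samples), "p90": p90}
    return cube

# Usage with made-up data: one busy cell and one sparse cell.
checkout_de = Cell("checkout", "Germany", "Firefox", "Windows", "placeOrder")
search_us = Cell("search", "United States", "Chrome", "macOS", "findItems")
observations = [(checkout_de, 200 + i) for i in range(50)] + [(search_us, 90)] * 3

cube = build_cube(observations)
print(cube.get(checkout_de))  # reference values for the busy cell
print(cube.get(search_us))    # None: the sparse cell was skipped
```

Keeping separate reference values per cell is what allows an anomaly to be pinned to, say, one user action in one country on one browser, instead of being averaged away across all traffic.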

Never adjust your monitoring configuration again

Correctly setting up alert thresholds is crucial to effective application performance monitoring. With traditional APM tools, however, this takes time-consuming and error-prone manual effort, especially given how quickly infrastructure changes in modern dynamic environments.

- Dynatrace is smart enough to dynamically adjust to changes in your infrastructure or traffic patterns.

- Never worry about adjusting your dashboards or monitoring configurations again.

- Multidimensional baselining cubes are calculated daily, so you always have up-to-date performance thresholds.
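The effect of recalculating baselines daily can be sketched with a rolling window of recent days. The `RollingBaseline` class, the window length, and the pooled median and 90th percentile are hypothetical choices for illustration; the text above does not specify how far back the daily calculation looks.

```python
import statistics
from collections import deque

# Hypothetical look-back window; the source text does not state one.
WINDOW_DAYS = 7

class RollingBaseline:
    """Keeps the most recent days of samples and derives thresholds from them."""

    def __init__(self, window_days=WINDOW_DAYS):
        # A bounded deque drops the oldest day automatically when a new one arrives.
        self._days = deque(maxlen=window_days)

    def add_day(self, samples):
        self._days.append(list(samples))

    def thresholds(self):
        """Return (median, 90th percentile) over all samples in the window."""
        pooled = [s for day in self._days for s in day]
        p90 = statistics.quantiles(pooled, n=10, method="inclusive")[-1]
        return statistics.median(pooled), p90

# Usage with made-up data: traffic settles at a new, slower normal.
baseline = RollingBaseline(window_days=2)
baseline.add_day([100] * 10)
baseline.add_day([100] * 10)
before = baseline.thresholds()

baseline.add_day([200] * 10)
baseline.add_day([200] * 10)
after = baseline.thresholds()

print(before, after)
```

Once the older days have rolled out of the window, the thresholds reflect the new normal without anyone editing a configuration.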
