As data growth continues, so do threats against data security, which makes data privacy by design even more critical. Here's what to consider.

Creating an ecosystem that facilitates data security and data privacy by design can be difficult, but it’s critical to securing information.

When organizations focus on data privacy by design, they build security into cloud systems from the start rather than bolting it on later. Practices include continuous security testing, promoting a mature DevSecOps culture, and more.

Because mastery of data is directly linked to the ability to protect it, many enterprises have necessarily become data brokers. Enterprise data stores keep growing, driven by the promise of analytics and by the use of data for behavioral security solutions, cognitive analytics, and monitoring and supervision.

As data volumes grow, so do threats against data security, and data privacy by design becomes even more critical. Some security incidents have already had a widespread, costly impact. Consider Log4Shell, a software vulnerability in Apache Log4j 2, a popular Java logging library. Log4j is embedded in a wide range of consumer-facing products and services.

Discovered in 2021, Log4Shell affected millions of applications and devices, with an average remediation cost of $90,000. To combat challenges like this, organizations need greater transparency and visibility into their core multicloud and hybrid cloud environments. Modern observability technologies have helped enterprises identify vulnerabilities such as Log4Shell in their environments, and they can reduce the time it takes to find such vulnerabilities from weeks or months to hours or days.
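As a rough illustration of the kind of check observability tooling automates, the following Python sketch scans a software inventory for `log4j-core` versions older than 2.17.1. The inventory format, the host names, and the single version threshold are assumptions made for illustration. A real assessment would also account for backported fixes (for example, 2.12.4 for Java 7 and 2.3.2 for Java 6), which this simplified check would still flag.

```python
import re
from typing import Iterable, List, Tuple

# Assumed threshold: flag anything older than 2.17.1 (the Java 8 release line).
SAFE_VERSION = (2, 17, 1)


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '2.14.1' or '2.0-beta9' into a comparable (major, minor, patch) tuple."""
    release = version.split("-")[0]              # drop qualifiers such as '-beta9'
    numbers = [int(part) for part in re.findall(r"\d+", release)]
    numbers += [0] * (3 - len(numbers))          # pad '2.0' to (2, 0, 0)
    return tuple(numbers[:3])


def flag_vulnerable(inventory: Iterable[Tuple[str, str, str]]) -> List[str]:
    """Return hosts whose log4j-core version is older than SAFE_VERSION."""
    return [
        host
        for host, artifact, version in inventory
        if artifact == "log4j-core" and parse_version(version) < SAFE_VERSION
    ]


# Hypothetical inventory of (host, artifact, version) tuples.
inventory = [
    ("web-01", "log4j-core", "2.14.1"),
    ("web-02", "log4j-core", "2.17.1"),
    ("batch-01", "log4j-core", "2.0-beta9"),
    ("api-01", "jackson-databind", "2.13.0"),
]
print(flag_vulnerable(inventory))  # ['web-01', 'batch-01']
```

Comparing version tuples keeps the check readable; the hard part in practice is collecting an accurate inventory, which is where observability data helps.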

During a Perform 2023 panel discussion, Dynatrace colleagues Donald Ferguson, principal product manager, and Michael Plank, senior product manager, explained how the Dynatrace platform applies data security and data privacy by design to keep data safer than storing it anywhere else. Their conversation also explored control over, and transparency into, how data is used.

Owning data and creating data privacy by design

Ferguson and Plank focused on two key messages as they discussed data security:

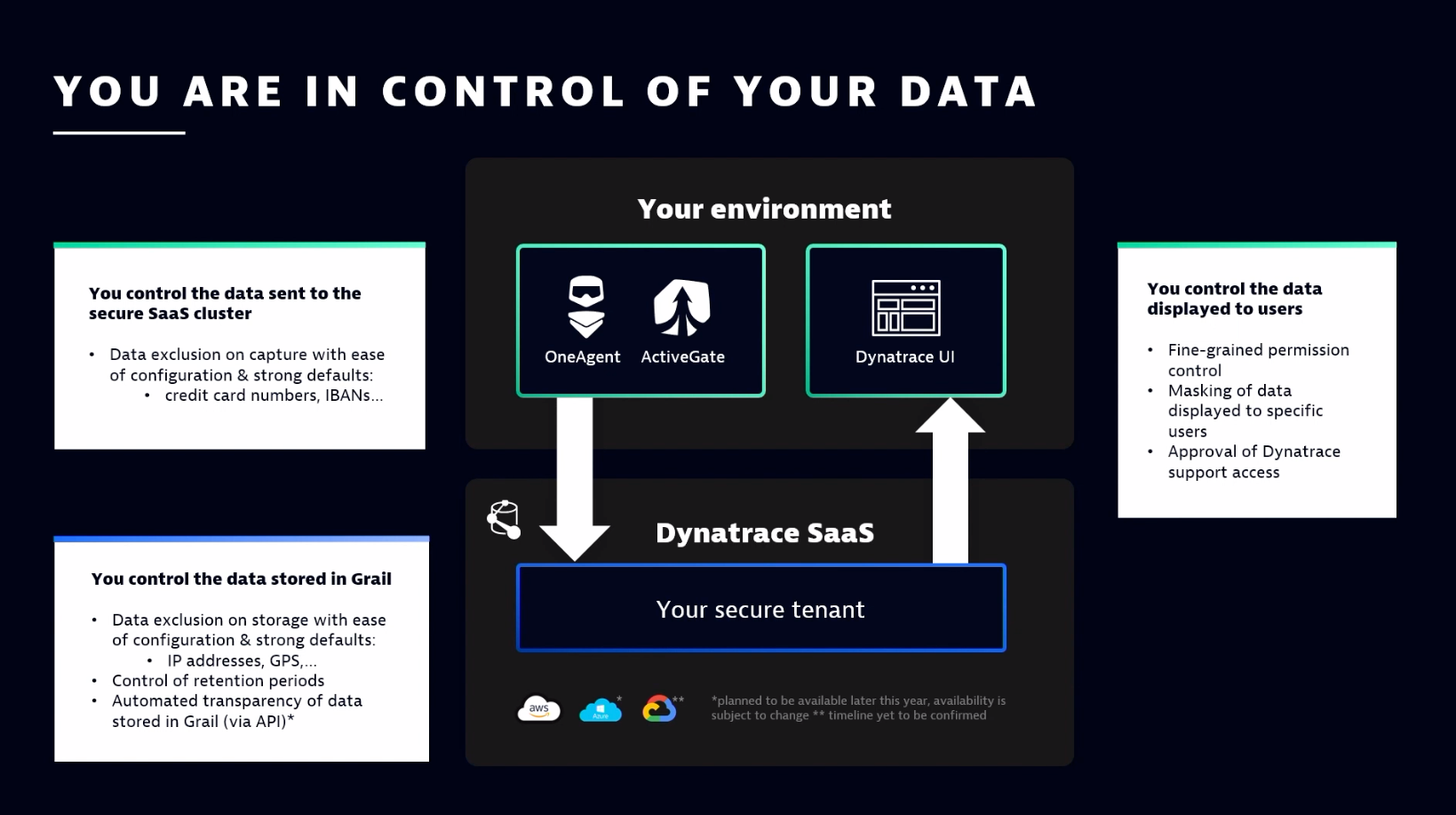

- Organizations always choose and control the data they process with Dynatrace.

- Dynatrace protects data beyond industry standards.

Addressing the first key message, Ferguson explained how organizations keep complete control over which data is captured. “With OneAgent [a binary file that includes a set of specialized services that have been configured specifically for a monitoring environment], you choose which data is being captured by the OneAgent,” he said. “You exclude certain types of data with easy configuration and strong defaults. For example, credit card numbers are excluded by default.”
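OneAgent's actual masking configuration isn't shown in the session, so the following is only a hypothetical Python sketch of what a "strong default" capture rule might look like: credit card numbers are redacted unless someone explicitly turns the rule off, and a Luhn checksum keeps ordinary long numbers from being masked. The function names and the `[CARD REDACTED]` placeholder are invented for illustration.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by single spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right."""
    total = 0
    for position, char in enumerate(reversed(digits)):
        value = int(char)
        if position % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def mask_card_numbers(text: str, enabled: bool = True) -> str:
    """Redact Luhn-valid card numbers; masking is on unless explicitly disabled."""
    if not enabled:
        return text

    def replace(match):
        digits = re.sub(r"[ -]", "", match.group())
        return "[CARD REDACTED]" if luhn_valid(digits) else match.group()

    return CARD_CANDIDATE.sub(replace, text)


print(mask_card_numbers("order 12345 paid with 4111 1111 1111 1111"))
# order 12345 paid with [CARD REDACTED]
```

Making `enabled=True` the default is the design point: exclusion happens without any configuration, and capturing the data requires a deliberate opt-out.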

Once data reaches an organization’s secure tenant in the software as a service (SaaS) cluster, teams “also can exclude certain types of data with ease of configuration and strong defaults at storage in Grail [the Dynatrace data lakehouse],” Ferguson added.

Why exclude data at two points? Because data is analyzed and enriched after it reaches the SaaS cluster but before it is stored. “The data is enriched, and a lot of value is created for the customer before the data is even stored in Grail,” Ferguson noted. “So, you can get the full value of that data as it is sent over to your secure tenant without the original data having to be stored at all.”

This data is excluded from storage, but teams can still gain value from data enrichment beforehand. “This is a key differentiator because the original data isn’t stored at all, but you still get all the value,” Ferguson added. “In addition, you control the retention periods in Grail, and, coming soon, you’ll have the ability through API [application programming interface] calls to have automated transparency in exactly what’s happening with your data, including confirmation that the exclusion of certain data is happening exactly as you would expect it to happen.”
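The two-point exclusion Ferguson describes can be pictured as a pipeline that enriches a record first and strips excluded fields only at the storage step. In this hypothetical Python sketch, `client_ip` is the excluded field and the enrichment (reducing an IPv4 address to its /24 network) is an assumed example; neither reflects how Grail is actually configured.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Set


@dataclass
class IngestPipeline:
    """Hypothetical pipeline: enrich first, then drop excluded fields before storage."""

    storage_exclusions: Set[str]
    stored: List[Dict[str, Any]] = field(default_factory=list)

    def enrich(self, record: Dict[str, Any]) -> Dict[str, Any]:
        enriched = dict(record)
        ip = record.get("client_ip")
        if ip is not None:
            # Assumes IPv4: keep only the /24 network derived from the raw address.
            enriched["client_network"] = ".".join(ip.split(".")[:3]) + ".0/24"
        return enriched

    def ingest(self, record: Dict[str, Any]) -> None:
        enriched = self.enrich(record)
        # Storage-time exclusion: the raw field never reaches `stored`.
        self.stored.append(
            {key: value for key, value in enriched.items() if key not in self.storage_exclusions}
        )


pipeline = IngestPipeline(storage_exclusions={"client_ip"})
pipeline.ingest({"client_ip": "203.0.113.42", "path": "/checkout", "duration_ms": 180})
print(pipeline.stored)
# [{'path': '/checkout', 'duration_ms': 180, 'client_network': '203.0.113.0/24'}]
```

The derived `client_network` value survives while the raw address does not, which is the "value without storing the original" trade-off described above.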

Defaulting to data privacy by design

How teams secure data end to end is also critical. Plank discussed what end-to-end security involves.

One key aspect of data privacy by design is an ecosystem that’s secure by default. Leaders should consider the following when designing an end-to-end security architecture to support data security:

- Non-root mode

- Automatic signature verification

- Automatic (and manual as needed) updates

- Automatic authentication of the environment
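Two of the defaults above, non-root operation and verifying an update before applying it, can be sketched as startup checks. This Python example is hypothetical and deliberately simplified: it compares a SHA-256 digest against a published value as a stand-in for real signature verification, which would check a public-key signature rather than a bare hash. The caller passes the effective user ID, which on POSIX systems would come from `os.geteuid()`.

```python
import hashlib
import hmac


def check_not_root(euid: int) -> None:
    """Refuse to start with root privileges (effective user ID 0)."""
    if euid == 0:
        raise PermissionError("refusing to run as root")


def verify_package(payload: bytes, expected_sha256_hex: str) -> bool:
    """Compare the payload's SHA-256 digest with the published value in constant time."""
    actual = hashlib.sha256(payload).hexdigest()
    return hmac.compare_digest(actual, expected_sha256_hex.lower())


def apply_update(payload: bytes, expected_sha256_hex: str, euid: int) -> str:
    check_not_root(euid)
    if not verify_package(payload, expected_sha256_hex):
        raise ValueError("update rejected: digest mismatch")
    return "update applied"  # a real agent would install the payload here


package = b"agent-update-1.2.3"                  # hypothetical update payload
published = hashlib.sha256(package).hexdigest()  # value obtained from a trusted channel
print(apply_update(package, published, euid=1000))  # update applied
```

Both checks fail closed: a root process or a mismatched payload raises an exception instead of continuing.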

Plank also covered secure access to data through single sign-on and IP-based access restrictions.
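IP-based access restrictions usually come down to checking a client address against an allowlist of networks. Here is a minimal Python sketch using the standard `ipaddress` module; the allowlist itself is made up from private and documentation address ranges and is not a Dynatrace setting.

```python
import ipaddress
from typing import Iterable


def is_allowed(client_ip: str, allowlist: Iterable[str]) -> bool:
    """True if client_ip falls inside any allowlisted network (IPv4 or IPv6)."""
    address = ipaddress.ip_address(client_ip)
    # An IPv4 address is never "in" an IPv6 network (and vice versa), so mixed lists are safe.
    return any(address in ipaddress.ip_network(cidr) for cidr in allowlist)


# Hypothetical allowlist using private and documentation ranges.
ALLOWLIST = ["10.0.0.0/8", "198.51.100.0/24", "2001:db8::/32"]
print(is_allowed("198.51.100.7", ALLOWLIST))  # True
print(is_allowed("203.0.113.9", ALLOWLIST))   # False
```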

The underlying software architecture that supports all this data must be secure, as well. “All these listed components are all built with the Dynatrace Secure Software Development Lifecycle,” Plank explained. “And this is an independently audited, pen-tested, continuously evolving program that we have in place, and we have publicly shared all of the information on how we secure the development and operations of our software.”

Understanding tenant security

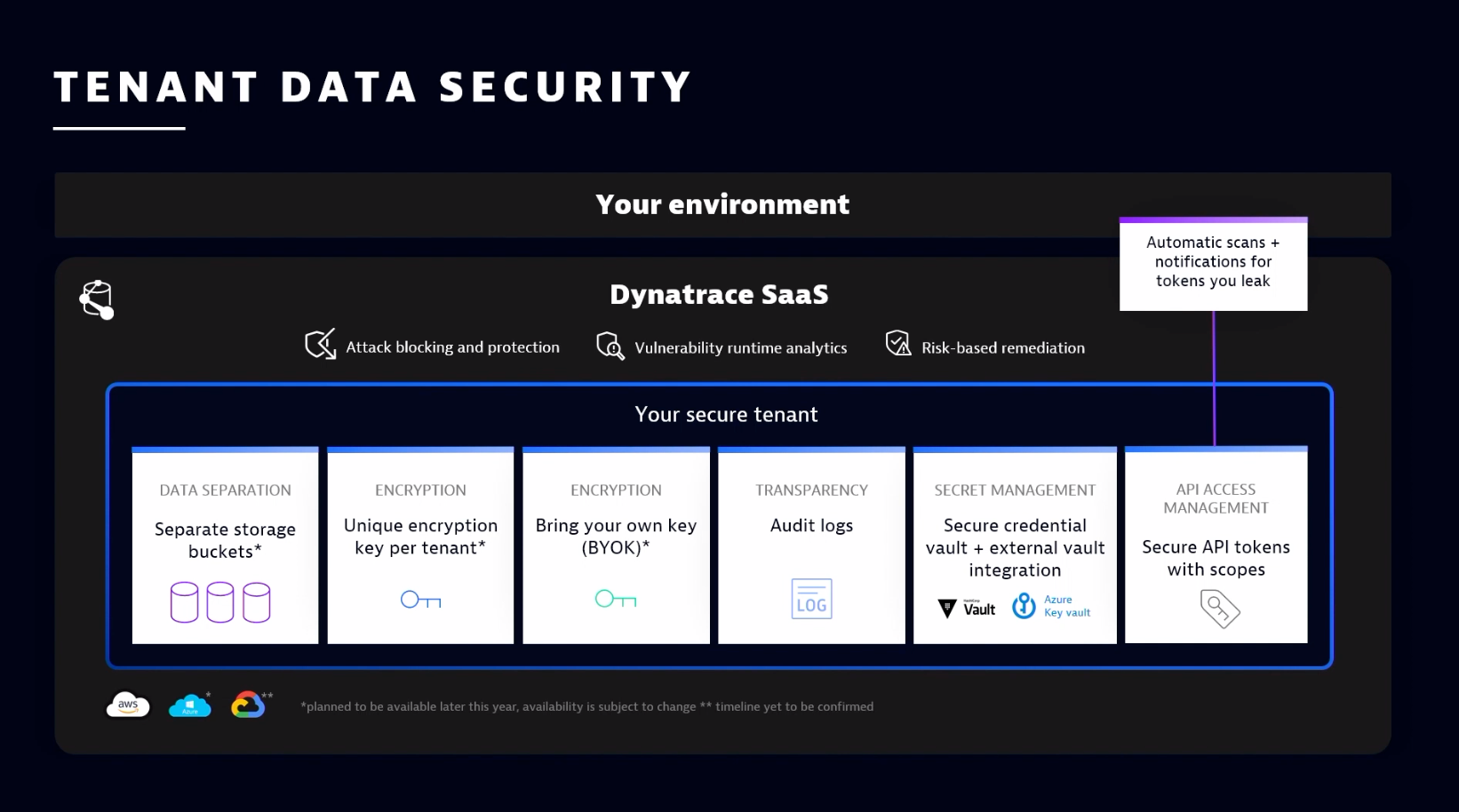

Ferguson and Plank explained how data security is built into the tenant. The Dynatrace SaaS platform secures data with six key measures:

- Data separation. Data is segmented and separated based on storage buckets. This gives users granular control of how and where their data is distributed and used.

- Encryption. Each tenant has its own encryption key, so each tenant’s data is encrypted separately.

- More encryption. Sometimes customers want to “own the keys to the kingdom.” In these situations, tenants can bring their own keys for additional encryption management.

- Transparency. Through audit logs, organizations can see the full history of activity within their environment and on their data.

- Secret management. Organizations can manage secrets and tokens with a built-in secure credential vault or connect an external credential vault to the Dynatrace ecosystem.

- API access management. Everything is available through a REST API, but access to that API must be secure. Within Dynatrace, organizations can see exactly what each API token is doing, who has access to it, and how to secure it further.
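The session doesn't describe how per-tenant keys are generated or stored. One common pattern for giving each tenant a distinct key is to derive it with HKDF (RFC 5869) from a master key and the tenant ID. The Python sketch below implements HKDF-SHA256 with the standard library; the master key, the `tenant-keys-v1` salt, and the tenant IDs are hypothetical, and in a bring-your-own-key setup a customer-managed key could take the place of the master key.

```python
import hashlib
import hmac

HASH_LEN = hashlib.sha256().digest_size  # 32 bytes


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract a PRK, then expand it to `length` bytes."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested key is too long for HKDF-SHA256")
    # Extract step; an empty salt defaults to HASH_LEN zero bytes per the RFC.
    prk = hmac.new(salt or b"\x00" * HASH_LEN, ikm, hashlib.sha256).digest()
    # Expand step: T(i) = HMAC(PRK, T(i-1) || info || i).
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def tenant_key(master_key: bytes, tenant_id: str) -> bytes:
    """Derive a distinct 256-bit key per tenant; the salt label is hypothetical."""
    return hkdf_sha256(master_key, salt=b"tenant-keys-v1", info=tenant_id.encode())


master = bytes(range(32))  # placeholder; a real master key would live in a KMS or HSM
key_a = tenant_key(master, "tenant-a")
key_b = tenant_key(master, "tenant-b")
print(len(key_a), key_a != key_b)  # 32 True
```

Because the tenant ID is bound into the `info` parameter, a key derived for one tenant is useless for decrypting another tenant's data.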

On that last point, Plank discussed a scenario where an API token gets leaked, whether accidentally or maliciously. “We have you covered there,” he explained. “Dynatrace has integrated with systems like GitHub, so the client will get notified immediately if an API token shows up there. From there, Dynatrace will work with you to rotate the token [replacing the authentication token with a new one and either retiring or expiring the old one] to ensure nothing malicious happens.”
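Rotating a leaked token amounts to issuing a replacement and expiring the old one, either immediately or after a short grace period so legitimate clients can switch over. The `TokenStore` class below is a hypothetical in-memory Python sketch of that idea, not a Dynatrace API; timestamps are passed in explicitly to keep the example deterministic.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class TokenStore:
    """Hypothetical in-memory store mapping each token to an optional expiry time."""

    tokens: Dict[str, Optional[float]] = field(default_factory=dict)

    def issue(self) -> str:
        token = secrets.token_urlsafe(32)  # 32 random bytes, URL-safe text
        self.tokens[token] = None          # None means no expiry
        return token

    def rotate(self, old: str, grace_seconds: float, now: float) -> str:
        """Issue a replacement and expire `old` after the grace period (0 = immediately)."""
        if old not in self.tokens:
            raise KeyError("unknown token")
        new = self.issue()
        self.tokens[old] = now + grace_seconds
        return new

    def is_valid(self, token: str, now: float) -> bool:
        if token not in self.tokens:
            return False
        expiry = self.tokens[token]
        return expiry is None or now < expiry


store = TokenStore()
leaked = store.issue()
replacement = store.rotate(leaked, grace_seconds=0, now=1000.0)
print(store.is_valid(leaked, now=1000.0))       # False
print(store.is_valid(replacement, now=1000.0))  # True
```

For a token known to be leaked, a grace period of zero is the safer choice; a nonzero window only makes sense for routine, scheduled rotation.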

Putting it all together to strengthen data security

Building applications may not be difficult, but securing them and the data they handle is critical. In their wrap-up, Ferguson and Plank explained why working within a Secure Software Development Lifecycle is essential. Before Dynatrace releases an app to the hub, the app undergoes an extensive security review, including vulnerability scanning, penetration testing, and threat detection.

Because of this approach, Dynatrace holds several compliance designations, certifications, and audit attestations. Dynatrace is SOC 2 Type 2-certified and complies with the European Union’s General Data Protection Regulation (GDPR). The company performs regular self-assessments (see the Cloud Security Alliance CAIQ report) and commissions penetration tests from independent security firms.

Additionally, Dynatrace offers a FedRAMP-authorized deployment option available in the FedRAMP Marketplace. It also benefits from the secure, world-class data centers of Amazon Web Services (AWS) and Microsoft Azure, which are certified for ISO 27001, PCI-DSS Level 1, and SOC 1/SSAE-16.

Finally, organizations can enable FIPS mode so that OneAgent complies with FIPS 140-3, the US government security standard for cryptographic modules.

Hoping to adopt these security protocols within your organization? Learn more by checking out the full session: “Ensure best-in-class data security and privacy with Dynatrace SaaS.”
