What is Tomcat?

Apache Tomcat is an open-source web server and servlet container developed by the Apache Software Foundation (ASF), and it is one of the most popular servlet containers available today. It’s a lightweight, flexible, and stable platform to build on.

Dynatrace monitors and analyzes the activity of your Tomcat servers, providing visibility down to individual database statements.

Gain visibility into your Java applications running on Tomcat

Dynatrace monitors and analyzes the database activities of your Java applications running on Tomcat, providing you with visibility all the way down to individual SQL and NoSQL statements. Here are just a few of the performance metrics you will see on your Dynatrace dashboard when monitoring Tomcat:

- JVM metrics

- Custom JMX metrics

- Garbage collection metrics

- All database statements

- All requests

- Suspension rate

- All dependencies
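For readers curious where the JVM, garbage collection, and custom JMX metrics listed above originate, here is a minimal sketch that reads them by hand through the standard `java.lang.management` and `javax.management` APIs. It is not Dynatrace code and does not show how OneAgent collects data; the class `JvmMetricsSketch`, the `CheckoutStats` MBean, and the object name `com.example.sketch:type=CheckoutStats` are hypothetical names chosen for illustration.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.concurrent.atomic.AtomicLong;
import javax.management.MBeanServer;
import javax.management.ObjectName;

public class JvmMetricsSketch {

    // Hypothetical custom metric. A Standard MBean needs a public
    // interface whose name is the implementing class name plus "MBean".
    public interface CheckoutStatsMBean {
        long getOrdersProcessed();
    }

    public static class CheckoutStats implements CheckoutStatsMBean {
        private final AtomicLong ordersProcessed = new AtomicLong();

        public void recordOrder() {
            ordersProcessed.incrementAndGet();
        }

        @Override
        public long getOrdersProcessed() {
            return ordersProcessed.get();
        }
    }

    // JVM metric: heap memory currently in use, in bytes.
    public static long heapUsedBytes() {
        return ManagementFactory.getMemoryMXBean().getHeapMemoryUsage().getUsed();
    }

    // Garbage collection metric: collections summed over all collectors.
    public static long totalGcCount() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long count = gc.getCollectionCount(); // -1 when the collector does not report it
            if (count > 0) {
                total += count;
            }
        }
        return total;
    }

    // Custom JMX metric: register the MBean, record some orders,
    // then read the value back through the MBean server by attribute name.
    public static long registerAndReadCustomMetric(String objectName, int orders) {
        try {
            MBeanServer server = ManagementFactory.getPlatformMBeanServer();
            ObjectName name = new ObjectName(objectName);
            if (server.isRegistered(name)) {
                server.unregisterMBean(name);
            }
            CheckoutStats stats = new CheckoutStats();
            server.registerMBean(stats, name);
            for (int i = 0; i < orders; i++) {
                stats.recordOrder();
            }
            return (Long) server.getAttribute(name, "OrdersProcessed");
        } catch (Exception e) {
            throw new IllegalStateException("JMX access failed", e);
        }
    }

    public static void main(String[] args) {
        System.out.println("Heap used (bytes): " + heapUsedBytes());
        System.out.println("GC collections:    " + totalGcCount());
        System.out.println("Orders processed:  "
                + registerAndReadCustomMetric("com.example.sketch:type=CheckoutStats", 3));
    }
}
```

Any JMX-aware tool can read an MBean registered this way, which is the general mechanism behind a "custom JMX metric".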

In under five minutes, Dynatrace OneAgent automatically discovers your entire Java application running on Tomcat.

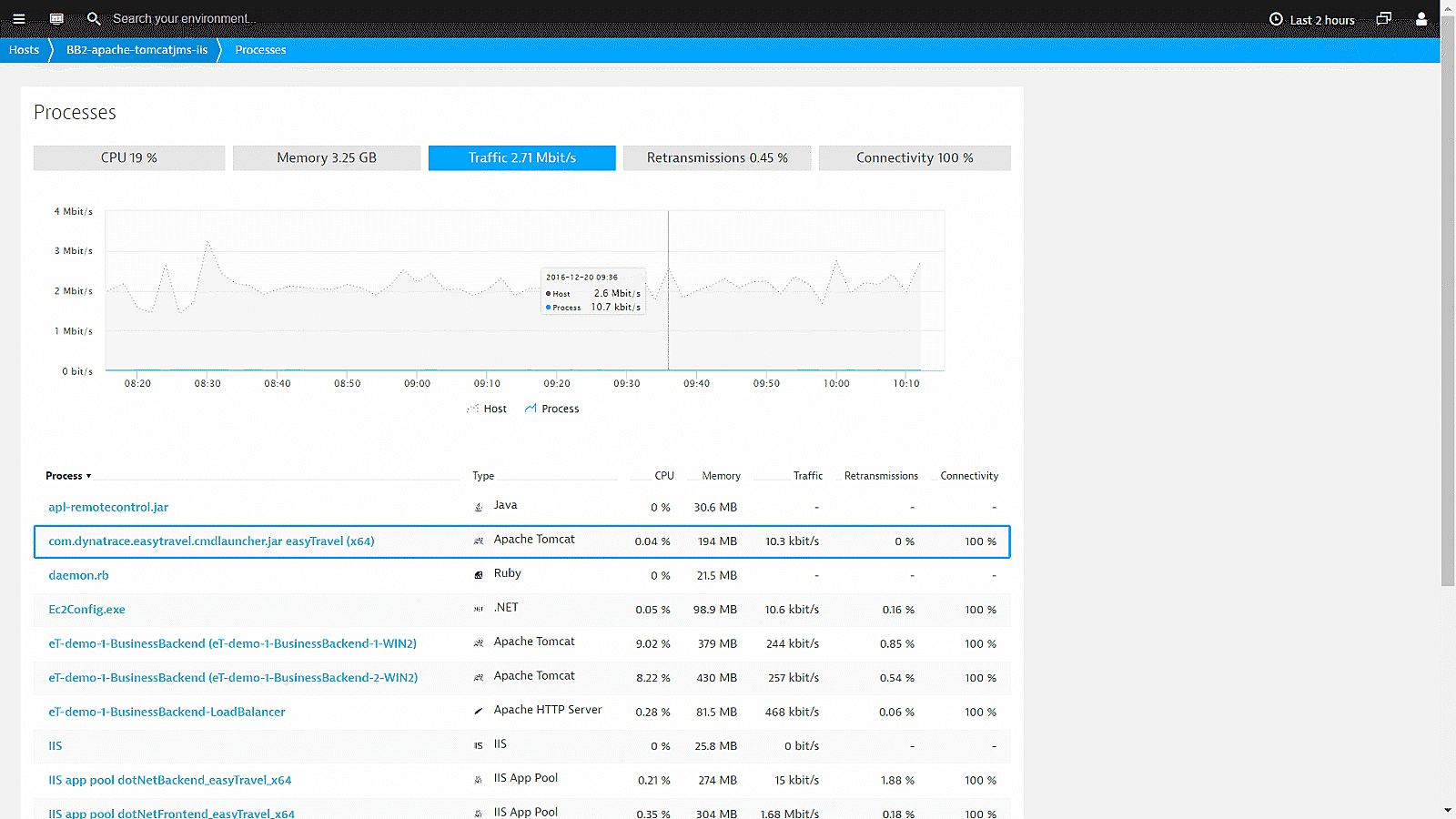

Start Tomcat monitoring in under 5 minutes!

In under five minutes, Dynatrace shows you your Tomcat servers’ CPU, memory, and network health metrics all the way down to the process level.

- Manual configuration of your monitoring setup is no longer necessary.

- Auto-detection starts monitoring new virtual machines as they are deployed.

- Dynatrace is the only solution that shows you process-specific network metrics.

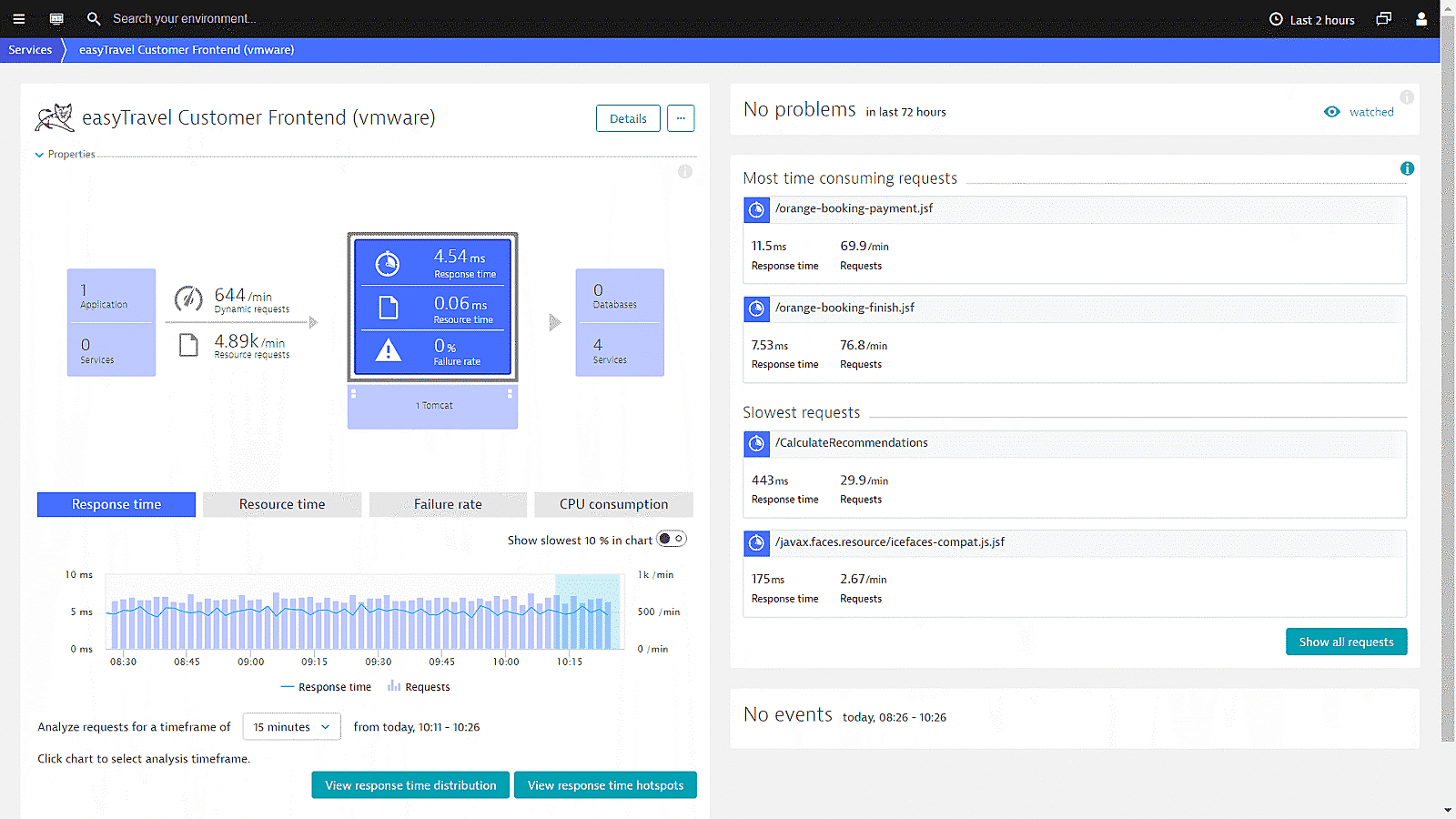

All Tomcat service metrics at a glance

Find out everything about your Tomcat services: incoming and outgoing requests, response time, resource time and failure rate.

From this view you can easily drill down to the response time hotspots, where you find key performance metrics regarding:

- interaction with other services and queues

- database usage

- service execution

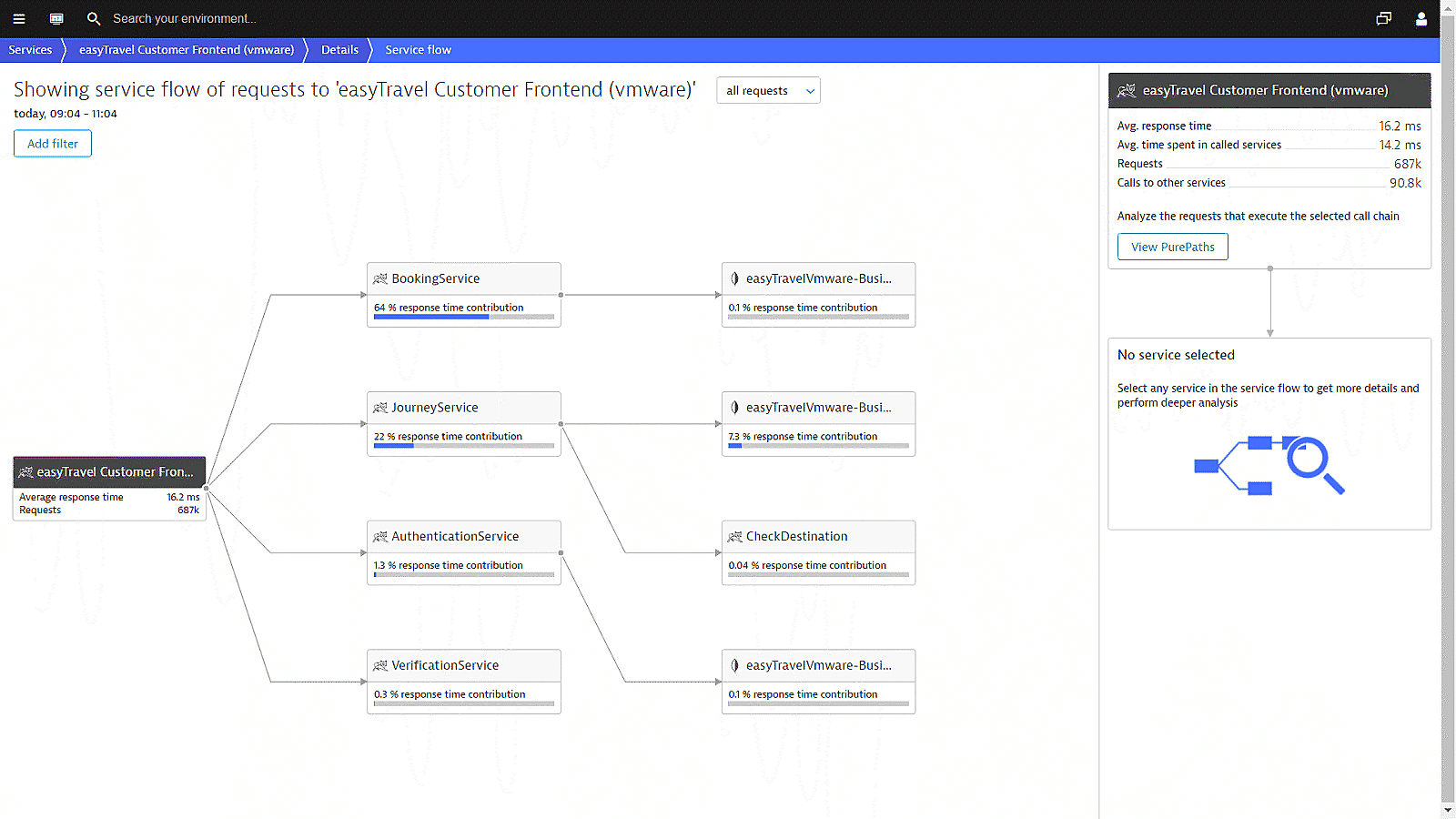

Visualize Tomcat service requests end-to-end

Dynatrace understands your applications’ transactions end to end. Service flow shows the actual execution of each individual service and service-request type. While Smartscape shows you your overall environment topology, Service flow gives you the viewpoint of a single service or service-request type.

From this view you can easily drill down to the PurePaths and analyze the requests that execute the selected call chain.

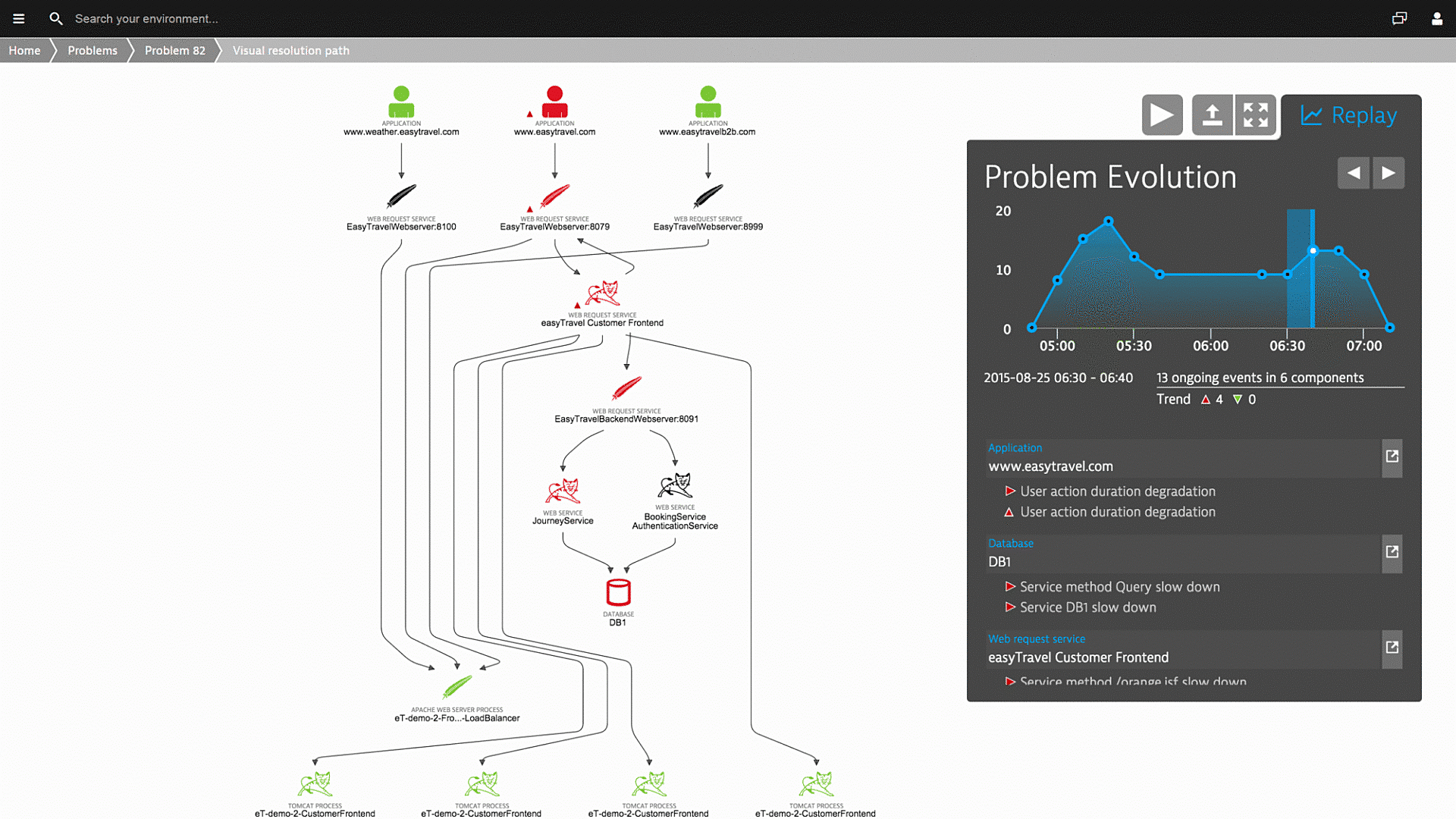

Understand the impact of issues on customer experience

While monitoring your Tomcat servers, Dynatrace learns the details of your entire application architecture automatically.

- Artificial intelligence automatically identifies the dependencies within your environment.

- Dynatrace detects and analyzes availability and performance problems across your entire technology stack.

- Visualize how problems evolve and how they impact the user experience.

We’re intrigued by its capability to work almost out of the box and to monitor system aspects as well as application performance and user experience.

Start monitoring your Tomcat server with Dynatrace!

Sign up, deploy our agent, and get unmatched insights out of the box.