Software is taking over the world

As a result, every business needs to embrace software as a core competency to ensure survival and prosperity. However, transforming into a software company is a significant task—building and running software today is harder than ever. And if you think it’s hard now, remember, you are just at the beginning of your journey.

Speed and scale: a double-edged sword

You invested in Microsoft Azure to build and run your software at a speed and scale that will transform your business, and that’s where Azure excels. But are you prepared for the complexity that comes with speed and scale? As software development transitions to a cloud-native approach that employs microservices, containers, and software-defined cloud infrastructure, the complexity you will face in the immediate future is greater than the human mind can grasp.

You also invested in monitoring tools. Lots of them over the years. But your traditional monitoring tools don’t work in this new dynamic world of speed and scale that Azure enables. That’s why many analysts and industry leaders predict that more than 50% of enterprises will entirely replace their traditional monitoring tools in the next few years.

Which brings us to why we’ve written this guide. We understand how important your software is, and why choosing the right monitoring platform is mandatory if you want to live by speed and scale, and not die by speed and scale.

We worked with your industry peers to arrive at our insights and conclusions

Dynatrace works with the world’s most recognized brands, helping to automate their operations and release better software faster. We have experience monitoring the largest Azure implementations, helping enterprises manage the significant complexity challenges of speed and scale. Some examples include:

- A large retailer managing 2,000,000 transactions a second

- An airline with 9,200 agents on 550 hosts capturing 300,000 measurements per minute and more than 3,000,000 events per minute

- A large health insurer with 2,200 agents on 350 hosts, capturing 200,000 measurements per minute and 900,000 events per minute

Read on to discover the five critical factors that determine the right monitoring platform for Microsoft Azure.

At Dynatrace, we experienced our own transformation, embracing cloud, automation, containers, microservices, and NoOps. We saw the shift early on and transitioned from delivering software through a traditional on-premises model to the successful hybrid-SaaS innovator we are today. To learn more, read the brief “Game changing – From zero to DevOps cloud in 80 days.”

Hybrid, multi-cloud is the norm

Insight

Enterprises are rapidly adopting cloud infrastructure as a service (IaaS), platform as a service (PaaS), and function as a service (FaaS) to increase agility and accelerate innovation. Cloud adoption is so widespread that hybrid multi-cloud is now the norm. According to RightScale, 81% of enterprises are executing a multi-cloud strategy.

Hybrid cloud

As enterprises migrate applications to the cloud or build new cloud-native applications, they also maintain traditional applications and infrastructure. Over time, this balance will shift from the traditional tech stack to the new stack, but both new and old will continue to coexist and interact.

Multi-cloud

Different cloud platforms have different features and benefits, technologies, levels of abstraction, prices, and geographic footprints. Each of these differences makes a platform suitable for specific services. Enterprises started with a single cloud provider but quickly embraced multiple clouds, resulting in highly distributed application and infrastructure architectures.

Challenge

The result of hybrid multi-cloud is bimodal IT: the practice of building and running two distinctly different application and infrastructure environments. Enterprises need to continue to enhance and maintain existing, relatively static environments while also building and running new applications on scalable, dynamic, software-defined infrastructure in the cloud.

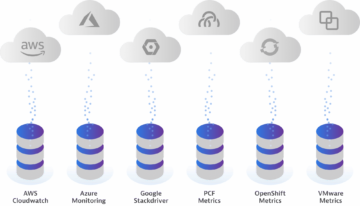

Putting traditional IT to one side for a moment to focus solely on multi-cloud platforms, a frequent outcome is monitoring-tool proliferation, as teams operate in silos despite critical interdependencies between services running across clouds.

The challenge of multiple monitoring tools across clouds is further compounded when we bring traditional IT back into focus, with its range of existing technologies that must also be monitored and managed and that have service interdependencies with cloud environments.

Key consideration

Simplicity and cost saving drove early cloud adoption. But today, cloud use has evolved into a complex and dynamic landscape that spans multiple clouds as well as traditional on-premises technologies. The ability to seamlessly monitor the full technology stack across multiple clouds while also monitoring traditional on-premises technology stacks is critical to automating operations, no matter how highly distributed the applications and infrastructure being monitored.

Microservices and containers introduce speed

Insight

Microservices and containers are revolutionizing the way applications are built and deployed, providing tremendous benefits in terms of speed, agility, and scale. In fact, 98% of enterprise development teams expect microservices to become their default architecture, and IDC predicts that by 2022, 90% of all apps will feature a microservices architecture.

Challenge

Seventy-two percent of CIOs say that monitoring containerized microservices in real time is almost impossible. Moving to microservices running in containers makes it harder to get visibility into environments. Each container acts like a tiny server, multiplying the number of points you need to monitor, and containers live, scale, and die based on health and demand. As enterprises scale their Azure environments from on-premises to cloud to multi-cloud, the number of dependencies and the volume of data generated increase exponentially, making it impossible to understand the system as a whole.

The traditional approach to instrumenting applications involves the manual deployment of multiple agents. When environments consist of thousands of containers with orchestrated scaling, manual instrumentation becomes impossible and severely limits the ability to innovate.

Key consideration

A manual approach to instrumenting, discovering, and monitoring microservices and containers will not work. For dynamic, scalable platforms like Azure, a fully automated approach to agent deployment and continuous discovery of containers and monitoring of the applications and services running within them is mandatory.

— Dynatrace CIO Complexity Report 2018

Not all AI is equal

Insight

Gartner predicts 30% of IT organizations that fail to adopt AI will no longer be operationally viable by 2022. As enterprises embrace a hybrid multi-cloud environment, the sheer volume of data created and the massive environmental complexity will make it impossible for humans to monitor, comprehend, and take action. This critical need for machines to solve data volume and speed challenges has resulted in Gartner creating a new category—AIOps (AI for IT operations).

Challenge

AI is a buzzword across many industries and making sense of the market noise is difficult. To help, here are three key AI use cases to keep in mind when considering how to monitor your Azure platform and applications:

Many enterprises are trying to address these use cases by adding an AIOps solution to the 10 to 25+ monitoring tools they already have. While this approach may have limited benefits, such as alert noise reduction, it will be minimally effective for the root cause analysis and auto-remediation use cases, because an added-on solution lacks the contextual understanding of the data needed to draw meaningful conclusions.

You will also find there are many different approaches to AI. Here are a few of the more popular ones you are likely to encounter as you move towards an AIOps strategy:

Key consideration

Not all AI is created equal. Attempting to enhance existing monitoring tools with AI, such as machine learning and anomaly-based AI, will provide limited value. AI needs to be inherent in all aspects of the monitoring platform and see everything in real time, including the topology of the architecture, dependencies, and service flow. AI should also be able to ingest additional data sources for inclusion in the AI algorithms, as opposed to correlating data via charts and graphs.

— Gartner

DevOps and Continuous Delivery: Innovation's soulmate

Insight

DevOps is perhaps the most critical consideration when maximizing investment in Azure and other cloud technologies. Implemented and executed correctly, DevOps enhances an enterprise’s ability to innovate with speed, scale, and agility. Research shows that high-performing DevOps teams have 46 times more frequent code deployments and a 440 times faster lead time from commit to deploy.

Challenge

As enterprises scale DevOps across multiple teams, there will be hundreds or thousands of changes a day, resulting in code pushes every few minutes. While CI/CD tooling helps mitigate error-prone manual tasks through automated build, test, and deployment, bad code can still make it into production. The complexity of a highly dynamic and distributed cloud environment like Azure, along with thousands of deployments a day, will only exacerbate this risk.

As the software stakes get higher, shifting performance checks left (that is, earlier in the pipeline) to enable faster feedback loops becomes critical. But this can’t be accomplished easily with a multi-tool approach to monitoring. To be effective, a monitoring solution needs a holistic view of every component and every change, and a contextual understanding of the impact each change has on the system as a whole.

Key consideration

To go fast and not break things, automatic performance checks need to happen earlier in the pipeline. To achieve this, a monitoring solution should have tight integration with a wide range of DevOps tooling. And combined with the right AI, these integrations will also help support the move to AIOps and enable automated remediation to limit the impact of bad software releases.

Check to see which DevOps tooling a particular monitoring solution integrates with and supports, and consider how it will impact your ability to automate things in the future.
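To make the idea of an automatic performance check earlier in the pipeline concrete, here is a minimal sketch of a pipeline gate. It is illustrative only and not a Dynatrace or Azure API: the names `performance_gate` and `GateResult`, the use of the 95th-percentile response time, and the 10% regression budget are all assumptions chosen for the example. In practice the samples would come from whatever monitoring solution the pipeline integrates with.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class GateResult:
    """Outcome of comparing a candidate build against a baseline (hypothetical type)."""
    passed: bool
    baseline_p95_ms: float
    candidate_p95_ms: float
    allowed_p95_ms: float


def p95(samples_ms):
    """Return the 95th percentile of a list of response times in milliseconds."""
    # quantiles(..., n=20) returns 19 cut points; the last one is the 95th percentile.
    # It needs at least two samples.
    return quantiles(samples_ms, n=20)[-1]


def performance_gate(baseline_ms, candidate_ms, max_regression=0.10):
    """Fail the gate if the candidate's p95 exceeds the baseline's p95
    by more than max_regression (0.10 means a 10% budget, an assumed value)."""
    base = p95(baseline_ms)
    cand = p95(candidate_ms)
    allowed = base * (1 + max_regression)
    return GateResult(cand <= allowed, base, cand, allowed)
```

A pipeline step could call `performance_gate` with response times from the last good build and from the build under test, then stop the deployment when `passed` is false. A single-metric gate like this is only a starting point; it has none of the contextual understanding of dependencies that the section argues for.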

Digital experiences matter

Insight

Enterprises are striving to accelerate innovation without putting customer experiences at risk, but it’s not just the traditional end-customer experiences of web and mobile apps that are at risk. Apps built on Azure support a broad range of services and audiences:

- The consumerization of IT has evolved to include wearables, smart homes, smart cars, and life-critical health devices

- Corporate employees are increasingly working remotely and need access to systems both in the corporate datacenter and in the cloud

- Employees using office workspaces rely on smart office features for lighting, temperature, safety, and security, which are reliant on the emerging paradigm of machine-to-machine (M2M) communications (Internet of Things)

What was simply regarded as user experience has evolved and grown into digital experience, encapsulating end-users, employees, and IoT.

Challenge

Enterprise IT departments face mounting pressure to accelerate their speed of innovation, while user expectations for speed, usability, and availability of applications and services increase unabated. Combined with the explosion of IoT devices and the increasingly vast array of technologies involved, managing and optimizing digital experiences while embracing high-frequency software release cycles and operating complex hybrid cloud environments presents significant challenges.

If digital experiences aren’t measured, how can enterprises prioritize and react when problems occur? Are they even aware there are problems? And if experiences are quantified, are they measured in the context of the supporting applications, services, and infrastructure, so that rapid root-cause analysis and remediation are possible? Only enterprises able to deliver extraordinary digital customer experiences will stay relevant and prosper.
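As an illustration of what quantifying an experience can look like, the sketch below computes an Apdex-style score from response times. This guide does not name a metric, so the choice of Apdex, the function name `apdex`, and the threshold in the usage note are assumptions made for the example. Under the Apdex convention, a sample is "satisfied" at or below a target time T, "tolerating" up to 4T, and "frustrated" beyond that.

```python
def apdex(response_times_s, threshold_s):
    """Return an Apdex-style score between 0.0 and 1.0.

    response_times_s: response times in seconds
    threshold_s: the target time T (an assumed, per-application choice)
    """
    if not response_times_s:
        raise ValueError("need at least one sample")
    # Satisfied: at or below T.
    satisfied = sum(1 for t in response_times_s if t <= threshold_s)
    # Tolerating: above T but no more than 4T; counts as half.
    tolerating = sum(
        1 for t in response_times_s if threshold_s < t <= 4 * threshold_s
    )
    # Frustrated samples (above 4T) contribute nothing to the score.
    return (satisfied + tolerating / 2) / len(response_times_s)
```

For example, `apdex([0.2, 0.4, 1.0, 3.0], 0.5)` gives 0.625: two satisfied samples, one tolerating, and one frustrated. A score on its own says nothing about cause, which is why the surrounding text stresses measuring in the context of the supporting applications and infrastructure.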

Key consideration

Enterprises need confidence that they’re delivering, or on the path to delivering, exceptional digital experiences in increasingly complex environments. To achieve this, they require real-time monitoring and 100% visibility across all types of customer, employee, and machine-based experiences. Key things to look for include:

— Dynatrace CIO Complexity Report 2018

Dynatrace helps you build better software faster

Our all-in-one platform provides intelligence into the performance of your apps, underlying infrastructure, experience of users, and more, so you can automate IT operations, release better software faster, and deliver unrivaled digital experiences.

Dynatrace + Microsoft Azure: A powerful partnership

The result is 360° actionable monitoring with one-click deployment

Spend your time innovating, not monitoring

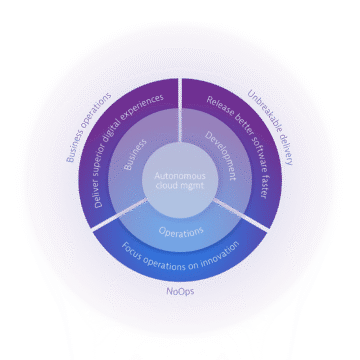

Leveraging Dynatrace enables enterprises to innovate faster, automate IT operations, and provide perfect software experiences to customers. Dynatrace is built to support innovation at scale, minimize risk, and reduce cloud complexity. Utilizing its AI capabilities, Dynatrace provides real-time, high-fidelity data to operations, development, and business teams.

This helps organizations lay the foundation for a more collaborative organizational structure. It opens the door to even greater agility and flexibility to innovate at scale through automation and autonomous cloud operations.

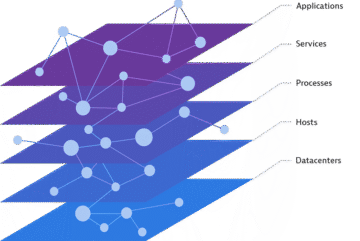

- AI-powered, answer-centric insights: Dynatrace’s Smartscape data model captures interconnections and interdependencies, yielding causation, not just correlation, and providing continuous and deterministic answers and insights.

- Single-agent, fully automated platform: Self-learning management and optimization of all applications requires fewer resources and dramatically reduces total cost of ownership.

- Full-stack, all-in-one approach with deep cloud integration: Dynatrace provides extensive integration across multi-cloud and dynamic microservices environments, leveraging the OpenAPI Initiative to provide incremental context.

- Web-scale and enterprise grade: Dynatrace is built cloud-native to be highly scalable, available, and secure. Based on robust enterprise-proven cloud technologies, it scales to 100,000+ hosts easily.

- Flexible deployment options: Dynatrace provides a pure SaaS solution as well as a managed on-premises offering, combining the simplicity, software currency, and scalability of SaaS with the choice of keeping data on-premises.

Dynatrace by the numbers

Transform your digital business faster with software intelligence

With Dynatrace and Microsoft Azure, organizations can:

- Release better software, faster. Build an unbreakable delivery pipeline and enable self-healing, so you can focus on innovation, not troubleshooting.

- Automate and modernize cloud operations. Ensure cloud success while optimizing resources and rationalizing tools with automated, AI-powered monitoring.

- Deliver perfect software experiences. Ensure perfect experiences by seeing every customer’s journey from their perspective, in the context of your app and infrastructure performance.

Power and scale: Native Microsoft Azure integration

Dynatrace natively embeds OneAgent technology through Azure virtual machine extensions, making it the most powerful cloud monitoring solution available for Azure.

One-click deployment via the Azure portal or Azure’s Management REST API delivers the full picture of how Dynatrace builds on top of the productivity, intelligence, and hybrid capabilities of Azure. Mutual customers are supported with enhanced container and application performance monitoring across their organization.

