Combining operations and development to deliver continuous software improvement can reduce complexity and improve application output. Learn more about DevOps and best practices to achieve it at scale.

What is DevOps?

DevOps is a collection of flexible practices and processes organizations use to create and deliver applications and services by aligning and coordinating software development with IT operations.

As DevOps pioneer Patrick Debois first described it in 2009, DevOps is not a specific technology, but a tactical approach. By working together, development and operations teams can eliminate roadblocks and focus on improving how they create, deploy, and continuously monitor software.

The shift to DevOps is critical for organizations to support the ever-accelerating development speeds that customers and internal stakeholders demand. With the help of cloud-native technologies, open source solutions, and agile APIs, teams can now deliver and maintain code more efficiently than ever. Combining development with operations and the processes that support them enables organizations to keep pace with that demand.

The origin of DevOps

DevOps got its start in 2008 with developers Andrew Clay Shafer and Patrick Debois. Looking to overcome common issues in agile development (such as decreased collaboration as project timelines expand, and the negative impacts of incremental delivery on long-term outcomes), the pair proposed an alternative: continuous development and delivery in a combined DevOps pipeline. The term gained traction after DevOpsDays in 2009, quickly establishing itself as a new industry buzzword.

More than a decade later, it’s clear the DevOps framework is more than just hype. In practice, its biggest benefit isn’t a simple efficiency boost, but rather a cultural shift that fundamentally changes the way companies approach every stage of the software development process.

Recently, DevOps has undergone a more in-depth evolution thanks to the work of industry experts such as Gene Kim, keynote speaker at Perform 2021 and co-author of The DevOps Handbook and The Phoenix Project.

How does DevOps work?

Many organizations consolidate development and operations in a single team to achieve this combined process, organizing software delivery by feature rather than by job function. This approach encourages individuals to develop cross-functional skills, folding testing and application security practices into a seamless delivery lifecycle.

Implementing DevOps often goes hand in hand with continuous integration (CI), where multiple developers commit software updates to a shared repository, often many times a day. CI enables developers to discover integration issues and bugs earlier in the process and streamline code branches and builds.

With this holistic point of view, engineers can collaborate on common processes, such as defining service-level objectives (SLOs), testing, and quality gates that everyone can implement. A common set of standards and goals can streamline agile workflows and make it possible for teams to adopt a coordinated DevOps toolset so they can automate more processes in the software delivery lifecycle (SDLC).

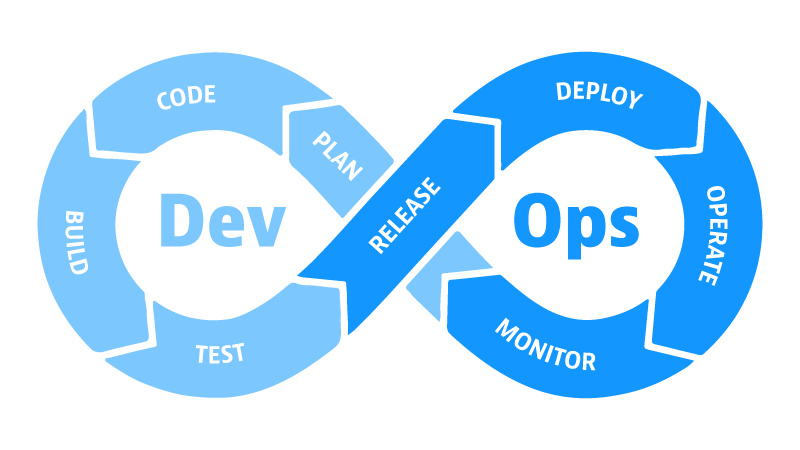

The DevOps lifecycle

Planning

This stage involves defining the project’s goals and creating a plan for how to achieve them. It also includes identifying the risks and challenges that may be encountered. The planning stage is essential for ensuring that the DevOps process is successful. Involve all stakeholders in the planning process, including developers, operations engineers, and business leaders. The plan should be clear, concise, and achievable.

Development

This stage is where the code is written and tested. Use a version control system to track changes to the code, and automate as much of the testing process as possible, starting with unit tests that verify each piece of code works correctly.
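As a minimal sketch of what an automated unit test could look like, the following uses Python's built-in unittest module. The function apply_discount and its rules are hypothetical and exist only to give the tests something to check.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical business function: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


def run_tests() -> bool:
    """Run the suite programmatically, as a CI job might, and report success."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

A CI job could call run_tests() on every commit and refuse to merge the change when it returns False.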

Deployment

This stage is where the code is deployed to production. It is vital to have a deployment pipeline that automates the deployment process and minimizes the risk of errors.
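To illustrate the idea of an automated deployment pipeline, here is a simplified sketch that runs stages in order and stops at the first failure. The stage names and the run_pipeline function are assumptions made for this example; a real pipeline would call actual build, test, and deployment tooling.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order; stop at the first failure so later stages never run."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            break
    return log


# Hypothetical stages; real ones would invoke build, test, and deploy tools.
example_stages: List[Stage] = [
    ("build", lambda: True),
    ("test", lambda: False),   # simulate a failing test run
    ("deploy", lambda: True),  # never reached because "test" fails
]
```

Because the pipeline halts as soon as a stage fails, code that does not pass testing never reaches the deploy stage.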

Monitoring

This stage involves collecting data about the application’s performance and identifying problems. It is necessary to have a monitoring system in place that can alert the team to any issues as soon as possible.
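A very small sketch of threshold-based alerting might look like the following. The check_latency function, the choice of average latency as the metric, and the threshold values are all assumptions for illustration; production monitoring systems typically use richer signals and baselines.

```python
from statistics import mean
from typing import List, Optional


def check_latency(samples_ms: List[float], threshold_ms: float) -> Optional[str]:
    """Return an alert message if average latency exceeds the threshold, else None."""
    if not samples_ms:
        # Missing data is treated as a problem worth alerting on.
        return "ALERT: no latency samples received"
    avg = mean(samples_ms)
    if avg > threshold_ms:
        return f"ALERT: average latency {avg:.1f} ms exceeds {threshold_ms:.1f} ms"
    return None
```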

Continuous improvement

This stage is ongoing and involves continuously evaluating the DevOps process and making improvements. Being open to feedback and willing to change the process as needed is important.

The benefits of DevOps

In practice, DevOps offers benefits not only to creating, delivering, and maintaining software, but to every process and stakeholder from early-stage proof-of-concept to digital business analytics and customer experience.

For development teams, the goal is to recognize the process of creating code as an ongoing cycle rather than a straight line. Partnering or integrating development with operations teams helps apply agile development principles — quick, bite-sized improvements based on priority — to the entire software lifecycle. This includes initial design, proof of concept, testing, deployment, and eventual revision.

This approach is especially important as customer needs and expectations from the C-suite intensify. Development teams tasked with producing and deploying software as quickly as possible are now capable of doing just that. Meanwhile, operations teams, understandably, have concerns about the impact of rapid-fire code implementation and the changes necessary for putting that code to work, reliably and at scale.

For operations, a collaborative approach to deployment makes it possible to extend agile processes past software into platforms and infrastructure to analyze details and context of all layers within the IT environment. By applying design thinking to delivery systems, operations teams can shift their focus from managing infrastructure to delivering outstanding user experiences.

In effect, this dev-and-ops effort looks to leverage rather than limit the impact of development on operations by applying software development principles to every aspect of IT — all while maintaining operational focus on standardization and security.

The challenges of DevOps

Here are some challenges that organizations may face as they adopt DevOps:

- Security concerns: DevOps practices can introduce new security risks, such as the risk of introducing vulnerabilities into production systems through automated deployments. Organizations must have a strong security culture and implement appropriate security controls to mitigate these risks.

- Organizational silos: DevOps can be challenging to implement in organizations with strong structural silos. These silos can create communication and collaboration challenges that can hinder the adoption of DevOps practices. Organizations must break down these silos and create a more collaborative culture to adopt DevOps successfully.

- Compliance challenges: DevOps practices might introduce new compliance challenges, such as the need to comply with regulations requiring manual approvals for production system changes. Organizations must assess their compliance requirements and implement appropriate controls to remain compliant.

How to adopt DevOps

By breaking down the silos between the software development and IT operations teams, DevOps can help organizations deliver software more quickly and reliably. The following steps can help organizations get started:

Start with a small pilot project.

Don’t try to implement all the DevOps practices at once. Start with a small project that is important to the organization and has senior management’s support.

Get buy-in from all stakeholders.

DevOps is a cultural change and won’t be successful unless everyone is on board. Ensure you have the buy-in of all stakeholders, including developers, operations engineers, security professionals, and business leaders.

Use the right tools.

There are several tools available to help organizations adopt DevOps practices. These tools can help with CI/CD, monitoring, and security.

Measure and improve.

It is essential to measure the results of your DevOps efforts to see what is working and what is not. You can then use this information to improve your processes and make them more efficient.
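What to measure depends on the organization. As one hedged example, the sketch below computes two commonly used indicators, deployment frequency and change failure rate, from a list of deployment records. The Deployment record and both function names are hypothetical and chosen only for this illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Deployment:
    day: date
    failed: bool  # True if the deployment caused an incident or rollback


def deployments_per_week(deployments: List[Deployment]) -> float:
    """Average number of deployments per 7-day period covered by the records."""
    if not deployments:
        return 0.0
    days = [d.day for d in deployments]
    span_days = (max(days) - min(days)).days + 1
    return len(deployments) / (span_days / 7)


def change_failure_rate(deployments: List[Deployment]) -> float:
    """Fraction of deployments that were marked as failed."""
    if not deployments:
        return 0.0
    return sum(d.failed for d in deployments) / len(deployments)
```

Tracking numbers like these over time gives teams a baseline to compare against after each process change.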

Be patient.

It takes time to implement DevOps practices and see results. Don’t be discouraged if you don’t see immediate results.

Practices within DevOps

Continuous integration

- Continuous integration (CI) is the practice of merging all code changes into a central repository regularly. This helps to identify and fix errors early in the development process.

Continuous delivery

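- Continuous delivery (CD) is the practice of automatically building, testing, and preparing code changes so they can be released to production at any time. When every change that passes testing is also deployed automatically, the practice is often called continuous deployment. Either way, the goal is to make new features and bug fixes available to users as quickly as possible.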

Microservices

- Microservices is an architectural style that divides an application into small, independent services. This makes it easier to develop, test, and deploy applications.

Infrastructure as code

- Infrastructure as code (IaC) is the practice of managing infrastructure using code. This makes it easier to automate the deployment and provisioning of infrastructure.
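The core idea behind many IaC tools is to declare the desired state in code and let automation work out the changes needed to reach it. The sketch below shows that comparison in its simplest form. The resource names, the dictionary format, and the plan function are assumptions for illustration and do not correspond to any particular tool.

```python
from typing import Dict, List

# Desired state, as it might be declared in a version-controlled file.
desired = {"web": {"replicas": 3}, "db": {"replicas": 1}}
# Actual state, as a hypothetical infrastructure provider might report it.
actual = {"web": {"replicas": 2}, "cache": {"replicas": 1}}


def plan(desired: Dict[str, dict], actual: Dict[str, dict]) -> List[str]:
    """Compute the actions needed to make the actual state match the desired state."""
    actions = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(f"create {name}")
        elif desired[name] != actual[name]:
            actions.append(f"update {name}")
    for name in sorted(actual):
        if name not in desired:
            actions.append(f"delete {name}")
    return actions
```

Because the desired state lives in code, it can be reviewed, versioned, and tested like any other change.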

Monitoring

- Monitoring is the practice of collecting and analyzing data about an application’s performance. This helps to identify and troubleshoot problems before they impact users.

Automation

- Automation is the practice of automating tasks, such as testing, deployment, and monitoring. This frees up time for developers and operations engineers to focus on more strategic tasks.

Collaboration

- Collaboration is the practice of working together effectively. This is essential for DevOps, as it requires close collaboration between developers and operations engineers. This means breaking down the silos between these two teams and creating a culture where everyone works toward the same goal.

What are DevOps best practices?

Integrating disciplines, tools, and processes across the organization requires planning and coordination. Here are some best practices organizations can follow to make DevOps an enterprise-wide success.

Augment the DevOps pipeline with AI

Every stage of the DevOps pipeline requires some amount of analysis to drive decisions, responses, and automation.

For example, precise AI-based analysis can drive decisions about whether to release a piece of software, and once the software is in production, indicate whether the release is performing as it should. Or, during failed test runs, AI can provide the exact root cause down to the details of the underlying code, so developers can quickly address and remediate errors.

The ability to analyze data accurately and reliably, and provide definitive answers makes it possible for teams to automate processes throughout the software delivery lifecycle. Reliable, AI-driven answers are crucial for rapid incident response and auto-remediation so teams can understand the context behind a failure or error.

This artificial intelligence for IT operations (AIOps) is becoming a widespread practice, especially as organizations adopt cloud-native infrastructure.

Shift-left service-level objectives (SLOs)

To ensure development teams and SREs are aligned on the same success criteria, they should evaluate production SLOs against pre-production environments. By expanding quality assurance to include prevention, detection, and recoverability using production-level criteria, teams can deliver software that meets user requirements, reduces error rates, and increases overall reliability and resilience. More importantly, the cost of fixing errors in pre-production is much lower than in production.

A continuous quality mindset enables teams to architect the entire SDLC for testing. This means testing all layers of the lifecycle. It also means developing and maintaining reliable test data and test environments that developers, SREs, and IT operations teams can use at every stage of development and delivery.

One way to automatically evaluate pre-production SLOs is by establishing quality gates. A quality gate helps teams determine whether a service meets all pre-defined quality criteria. Quality gates take in key service-level indicators (SLIs) or monitoring metrics and evaluate them against the quality criteria set. Code progresses to the next stage of the lifecycle only if the services meet or exceed the quality criteria.
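The quality gate described above can be sketched in a few lines. This is a simplified illustration, not a specific product's implementation; the Criterion type, the evaluate_gate function, and the SLI names are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Criterion:
    sli: str                # name of the service-level indicator
    threshold: float        # target value taken from the SLO
    higher_is_better: bool  # True for availability, False for latency or error rate


def evaluate_gate(slis: Dict[str, float], criteria: List[Criterion]) -> List[str]:
    """Return the violated criteria; an empty list means the gate passes."""
    violations = []
    for c in criteria:
        value = slis.get(c.sli)
        if value is None:
            violations.append(f"{c.sli}: missing")
        elif c.higher_is_better and value < c.threshold:
            violations.append(f"{c.sli}: {value} < {c.threshold}")
        elif not c.higher_is_better and value > c.threshold:
            violations.append(f"{c.sli}: {value} > {c.threshold}")
    return violations
```

A pipeline stage could call evaluate_gate with the SLIs measured in a pre-production environment and promote the build only when the returned list is empty.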

Automate all DevOps processes

Automating DevOps pipelines allows for iterative, incremental software updates to be deployed faster and more frequently. It provides tighter feedback loops between Dev and Ops teams so they can spend more time innovating instead of performing manual processes.

Automation is what helps teams scale DevOps from a lighthouse project to an essential practice across the entire IT estate. DevOps automation typically brings continuous integration and continuous delivery (CI/CD) to mind, but automating these foundational processes can extend well beyond developing code. More advanced organizations seek to automate all stages of the DevOps lifecycle, including infrastructure provisioning, deployment, monitoring, testing, and remediation.

Adopt cloud-native architecture

To deliver on DevOps potential, speed and agility are key. Adopting cloud-native technologies and architecture is the best way to deliver more and richer features faster, flexibly, and at scale. These technologies include container-based computing solutions, such as Kubernetes, and serverless platforms, such as AWS Lambda, Google Cloud Functions, and Azure Functions. In these environments, software runs in immutable containers using resources as needed, a setup that lends itself well to infrastructure patterns that can be easily orchestrated and automated.

Cloud-native technologies make it easier for teams to apply agile software development practices to infrastructure management. This includes automating key tasks such as version control, unit testing, continuous delivery, operations functions, and problem remediation.

Integrate security practices for DevSecOps

The diversity and flexibility of cloud-native technologies also make securing applications against vulnerabilities more challenging. As mentioned earlier, integrating application security and vulnerability assessment into a DevOps workflow is a best practice that extends the benefits of AI-driven analysis and automation to securing applications.

By automating application security testing to continuously analyze applications, libraries, and code at runtime, teams can eliminate security blind spots and false-positive alerts. Adding security-related SLOs, testing, and quality gates into all phases of the delivery lifecycle enables teams to cultivate a security mindset that eliminates another silo and results in more secure software.

Adopt a platform-driven approach with self-service processes

Achieving widespread DevOps success requires a platform approach that can make it easier for organizations to effect structural changes that optimize how teams work. A key goal is to establish self-service processes for managing different types of testing, monitoring, alerting, CI/CD workflows, internal infrastructure and development environments, and public cloud infrastructure. When teams have access to reliable data and analysis, and individuals have more autonomy to rely on their own knowledge and experience, organizations can extend the value of DevOps to the whole enterprise.

With self-service access to APIs, tools, services, and support in a single platform that leverages AI and automation, teams have a single reliable source of knowledge and coordination. This enables teams to integrate and streamline their DevOps toolchains and processes so they can spend less time maintaining the infrastructure and more time innovating.

How can DevOps transform enterprises?

A successful DevOps initiative is characterized by a culture of experimentation, risk, and trust — one in which constant feedback between all members is both welcomed and utilized. But culture alone isn’t enough to transform enterprise effort; teams also need the right technologies and DevOps software to get the job done.

As tools and technologies proliferate, a crucial capability is observability: the ability to instrument and monitor telemetry data from across the cloud-native environment. This includes metrics, logs, distributed traces, as well as data from user-experiences and the latest open-source standards to measure the health of applications and their supporting infrastructure at every stage of development.
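To make the telemetry types mentioned above more concrete, the sketch below emits a log record, a trace span, and a metric for a single request as JSON lines. The record fields and the handle_request function are hypothetical; real systems would typically rely on an instrumentation library rather than hand-built records.

```python
import json
import time
import uuid
from typing import List


def handle_request(path: str, sink: List[str]) -> str:
    """Handle a hypothetical request and record a log, a span, and a metric."""
    trace_id = uuid.uuid4().hex  # shared ID that ties the log and the span together
    start = time.perf_counter()
    # ... the real work of the request would happen here ...
    duration_ms = (time.perf_counter() - start) * 1000
    sink.append(json.dumps(
        {"type": "log", "trace_id": trace_id, "message": f"handled {path}"}))
    sink.append(json.dumps(
        {"type": "span", "trace_id": trace_id, "name": path, "duration_ms": duration_ms}))
    sink.append(json.dumps(
        {"type": "metric", "name": "requests_total", "value": 1}))
    return trace_id
```

Sharing an identifier between the log and the span is what lets an engineer move from a single error message to the full path of the request that produced it.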

According to a recent Gartner report, leaders should consider solutions during pre-production to maximize insight into app performance, service availability, and overall environmental health.

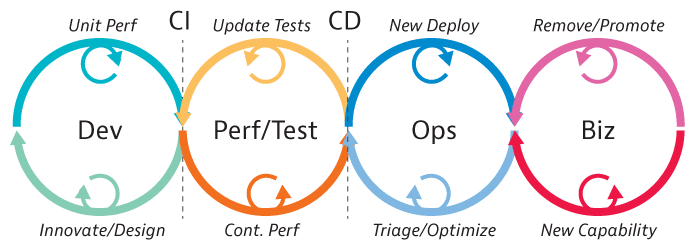

There’s also an emerging push to include more disciplines in the DevOps effort: “DevSecOps” teams seek to integrate security testing in delivery and deployment pipelines, while “BizDevOps” efforts strive to understand app performance from a user-experience perspective.

Although the advantages of DevOps are clear, the culture and technology shift it brings can make implementing DevOps solutions an iterative journey.

What is observability in DevOps?

DevOps combines development and operations into a unified framework that breaks down silos and fosters whole-lifecycle collaboration. In this environment, SREs can implement operations that ensure software systems’ availability, latency, performance, and resiliency, and CI/CD practices can provide well-aligned and automated development, testing, delivery, and deployment.

How observability closes the DevOps gap

What is DevOps? It’s a cultural and tactical shift that closes the gap between development efforts and operational obligations by combining teamwork with technology to streamline software delivery, standardize testing and quality gates, and automate processes and incident response. Armed with best practices and an AI-driven software intelligence platform to manage the entire DevOps tool chain, teams can maximize efficiency, minimize error rates, and deliver on continuous delivery expectations.

Want to learn more about DevOps?

Read our DevOps eBook – Harmony in code: Unpacking DevOps and platform engineering in a cloud-native world
