At Dynatrace we always focus on our users and how to solve their problems. We build features that our customers benefit from and use to simplify their work. Our goal is to keep the balance between adding new functionality and improving existing workflows while continuously providing our users with a simple and consistent experience. To improve the naming of our navigation menu entries, we will soon conduct A/B testing for one of the main entry points to the Dynatrace navigation menu.

Renaming is never easy

Imagine that you use a tool every day and suddenly it’s replaced or renamed and you can’t find it. Although the intention behind the renaming may be sound, confusion or even frustration is inevitable at first (at least until the user becomes familiar with the new name).

We all learn constantly: sometimes on purpose, but most of the time subconsciously. A complex digital platform may seem intuitive, but “intuitive” often means nothing more than relying on common patterns and navigation paradigms that are widely known or easy to learn. When behavior, naming, and “look and feel” are used consistently throughout a whole industry, they become an industry standard and, eventually, a user expectation.

Does this mean that we shouldn’t try different terminology and navigation patterns and should just repeat whatever is industry standard?

Definitely not. Just because something is well known doesn’t mean that it’s good. The ultimate goal of digital platforms should be to create features that are easy to learn and comprehend.

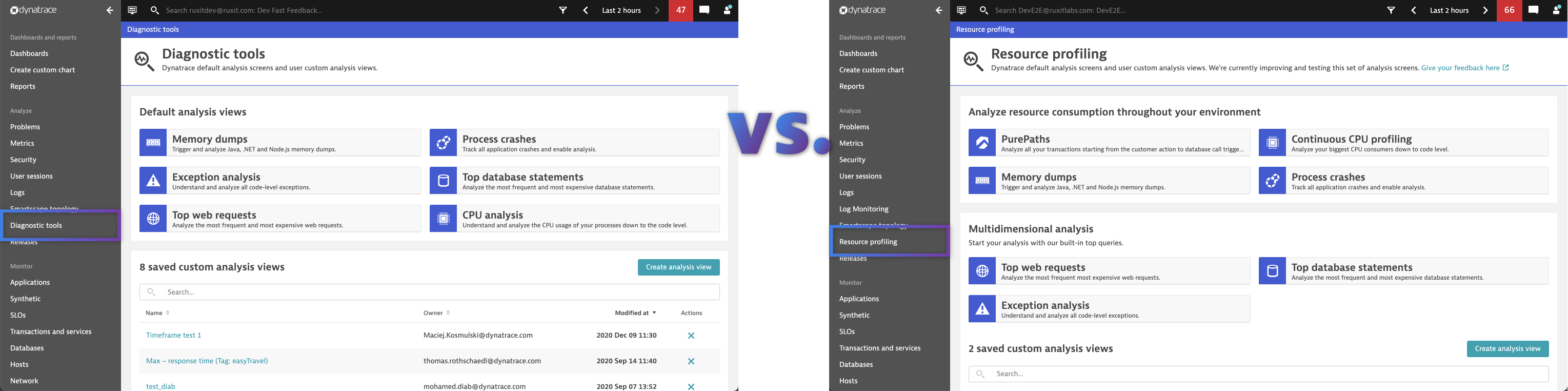

We recently spent a lot of time analyzing the Diagnostic tools section of our platform. In talking to internal and external customers, we recognized that some of our analysis views, like the CPU analysis, are exactly what our users need. However, they remain overlooked capabilities of our platform.

One of the reasons for this is the terminology, as it doesn’t tangibly explain the feature to the target audience. When testing it, one of our many findings was that developers and DevOps engineers do not necessarily expect profiling to be included under “Diagnostic tools,” as it is now.

Competitive research even shows that we’re underselling these product capabilities. Our CPU profiling is on 24/7, covers method hotspots, thread and memory allocation information, and allows users to analyze end-to-end traces down to the code level. Best of all, it’s included with our platform out of the box with no additional configuration or licensing required.

Sounds great, right? So if it’s challenging for some users to discover this feature due to the current naming, we have a great opportunity to come up with a more meaningful and expressive name. Our hypothesis is that if we use the language of the target audience, users will adopt the feature more readily, and it will ultimately help them optimize their resource usage.

A Dynatracer’s approach to renaming

Instead of guessing a good name and just renaming the menu entry point “Diagnostic tools” as well as the feature “CPU analysis” itself, we want to approach this matter carefully, with our users in mind.

So we started out with some brainstorming and wrote down ideas, discussion points, and expectations of customers with different experience levels and areas of expertise. Following this, we categorized the suggestions into possibilities that match user expectations as well as our internal goals. With the names that matched the most categories, we ran a focus group. The discussion and exchange of opinions among the target audience and the marketing, sales, and experience departments led to more intuitive menu entries.

A/B testing

The suggested terminology reflects not only market standards but also the target audience’s expectations. But our effort doesn’t end here. At Dynatrace, we want to make sure that we keep naming and terminology as clear and self-explanatory as possible. Therefore, we want to test whether the new terminology is easier for users to find and whether it confirms our assumptions and hypothesis.

Users need to be able to rely on patterns and consistency to improve their work efficiency. So before going ahead and changing the naming for all users, we will test it with a smaller number of customers.

For the A/B testing, we will focus on environments that meet specific criteria:

- Creation date after July 2019

- Minimum of 80 unique users

- Paying accounts (because in the trial phase, a product is often not used regularly and the behavior of working with it often differs from a purchased product)

- .NET or Java footprint (as diagnostic tools are especially useful for these)

- Currently lower usage of diagnostic tools (< 500 visits) so as to not disturb frequent users
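To make the selection criteria concrete, here is a minimal sketch of how such an eligibility check could look. It is an illustration only, not how Dynatrace actually selects environments: the `Environment` record, its field names, and the `is_eligible` function are hypothetical. It also assumes that “after July 2019” means created on or after August 1, 2019, and that the visit count refers to some fixed observation period.

```python
from dataclasses import dataclass
from datetime import date

# Thresholds taken from the criteria listed above. The exact cutoff date and
# the period over which visits are counted are assumptions.
CREATED_AFTER = date(2019, 7, 31)
MIN_UNIQUE_USERS = 80
MAX_DIAGNOSTIC_VISITS = 500
TARGET_TECHNOLOGIES = frozenset({"java", "dotnet"})


@dataclass(frozen=True)
class Environment:
    """Hypothetical record describing one monitored environment."""
    env_id: str
    created: date
    unique_users: int
    is_paying: bool
    technologies: frozenset
    diagnostic_visits: int


def is_eligible(env: Environment) -> bool:
    """Return True only if the environment meets all five criteria."""
    return (
        env.created > CREATED_AFTER
        and env.unique_users >= MIN_UNIQUE_USERS
        and env.is_paying
        and bool(env.technologies & TARGET_TECHNOLOGIES)
        and env.diagnostic_visits < MAX_DIAGNOSTIC_VISITS
    )
```

Keeping the thresholds as named constants makes it easy to see which assumptions went into the selection and to adjust them later.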

The environments will be divided into group A and group B with an equal number of active users in both. Group A will work with the current terminology and structure, whereas group B will encounter different names as well as a restructured overview page of analysis views, with the option to share their feedback and ideas for enhancements.
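One simple way to get two groups with roughly the same number of active users is a greedy split: go through the environments from largest to smallest and always add the next one to the group that currently has fewer users. The sketch below shows the idea. It is an assumption about how such a split could be done, not a description of the actual assignment, and the `split_balanced` function and the sample environment IDs are hypothetical.

```python
def split_balanced(active_users: dict[str, int]) -> tuple[list[str], list[str]]:
    """Greedily split environments into two groups with similar user totals.

    `active_users` maps an environment ID to its number of active users.
    """
    group_a: list[str] = []
    group_b: list[str] = []
    total_a = 0
    total_b = 0

    # Largest environments first; ties are broken by ID so the result is stable.
    ordered = sorted(active_users.items(), key=lambda item: (-item[1], item[0]))
    for env_id, users in ordered:
        # Put the environment into whichever group is currently smaller.
        if total_a <= total_b:
            group_a.append(env_id)
            total_a += users
        else:
            group_b.append(env_id)
            total_b += users

    return group_a, group_b


group_a, group_b = split_balanced({"env-1": 300, "env-2": 200, "env-3": 100})
```

A greedy split like this does not guarantee perfectly equal totals, but it keeps whole environments together, so every user in an environment sees the same terminology.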

The metrics we have defined will show whether this test is a success.

We will conduct the A/B testing for two months and then, based on the data we gather, decide whether to roll this out to all environments. Your feedback is highly appreciated. Here’s the link to provide any feedback or contact us in case you want to join the A/B testing for diagnostic tools.

What’s next?

Stay tuned for even more improvements to diagnostic workflows in Dynatrace. We plan to power up our multidimensional analysis, add easy-to-find entry points to code-level insights that reduce the amount of drill downs required to analyze, and many more exciting enhancements to our platform’s diagnostic capabilities.
