Introducing Dynatrace for AI, an open-source collection of agent skills and prompts that give any skills-compatible AI coding assistant the domain expertise it needs to work productively and accurately with Dynatrace.

If you’ve already wired an AI coding assistant up to Dynatrace, through the MCP server, the Dynatrace CLI (dtctl), or a custom agent you built yourself, you’ve seen your agent have difficulty interpreting data or calling for fields that don’t exist. This makes sense, your agent may be making assumptions based upon training that isn’t relevant. It lacks the skills to understand how to get the best value from Dynatrace. That is where Dynatrace for AI fills the gap.

What are agent skills?

Agent skills are an open format for packaging domain knowledge that AI agents can load on demand. A skill is a folder containing a SKILL.md file with focused instructions, examples, and optional reference material. Compatible agents, such as Claude Code, GitHub Copilot, Cursor, Cline, or others, discover installed skills and load the full content only when it’s relevant to the task at hand.

The net effect: you can install dozens of skills without bloating an agent’s context window. Agents pull in exactly what’s relevant when it’s relevant, and ignore the rest.

Built for agents working with Dynatrace

Dynatrace for AI is a curated set of skills that give an agent the three things it needs to efficiently do real work on Dynatrace:

- Access to Dynatrace data and insights: through DQL queries against Grail®, Smartscape® dependency graph, or problem records.

- Dynatrace expertise: the syntax rules, entity-model distinctions, and query patterns that separate a working query from one that looks correct but returns nothing.

- Task-level starting points: ready-made prompt templates for common engineering workflows, so teams don’t have to invent the approach from scratch.

Skills don’t connect to Dynatrace directly. You have to pair them with the MCP server or dtctl to perform live queries and initiate actions. Together, they turn an agent with generic observability intuition into one that easily extracts value from Dynatrace.

Complement your agent with domain expertise

The first release of Dynatrace for AI agent skills is focused on the workflows that engineering teams run every day:

- DQL fundamentals: covering the pipeline model, core data objects, and when to use fetch, timeseries, or smartscapeNodes to prevent failures that typically come from models trained on generic query-language data.

- Observability across the stack: services, traces, logs, frontends, and problems, each covering the entity model, key fields, and query patterns that make answers correct rather than merely plausible.

- Infrastructure and cloud: covering Kubernetes, AWS, and hosts.

- Platform tasks worth delegating: providing programmatic creation of dashboards and notebooks

Prompt templates for common workflows

Alongside the skills, the repo hosts a small set of prompt templates you can use as structured starting points to invoke the right skills for specific tasks. These save teams from having to design their approach from scratch and make outcomes more consistent across agents and users.

Current templates include:

- Performance regression: walks the agent through comparing RED metrics before and after a deployment, correlating any regression with distributed traces, and summarizing the root cause.

- Daily standup: pulls the last 24 hours of problems, deployment activity, and notable anomalies for a team’s services, so anyone can walk into a standup with the relevant production context already framed.

- Troubleshoot a problem: takes a problem ID and guides the agent through root-cause analysis, including affected entities, correlated events, relevant logs and traces, and creates a structured summary for the incident channel.

These are a starting point, not a ceiling, designed to be forked and shaped to your team’s on-call runbooks.

What Dynatrace for AI is and what it isn’t

Skills and prompts are a knowledge and workflow layer. They don’t connect to your Dynatrace environment, define what actions your agent can take, or set guardrails. That’s the job of the tool you pair them with and your Dynatrace permission model.

The quality of what your agent can produce also depends on the entities your environment is instrumented to capture. Skills help agents ask better questions of data, but they don’t control what data is collected.

Think of this skill as onboarding a smart new hire who already knows software, but needs to learn your platform. The skills are the platform user guide; your observability data is the work itself.

Get started

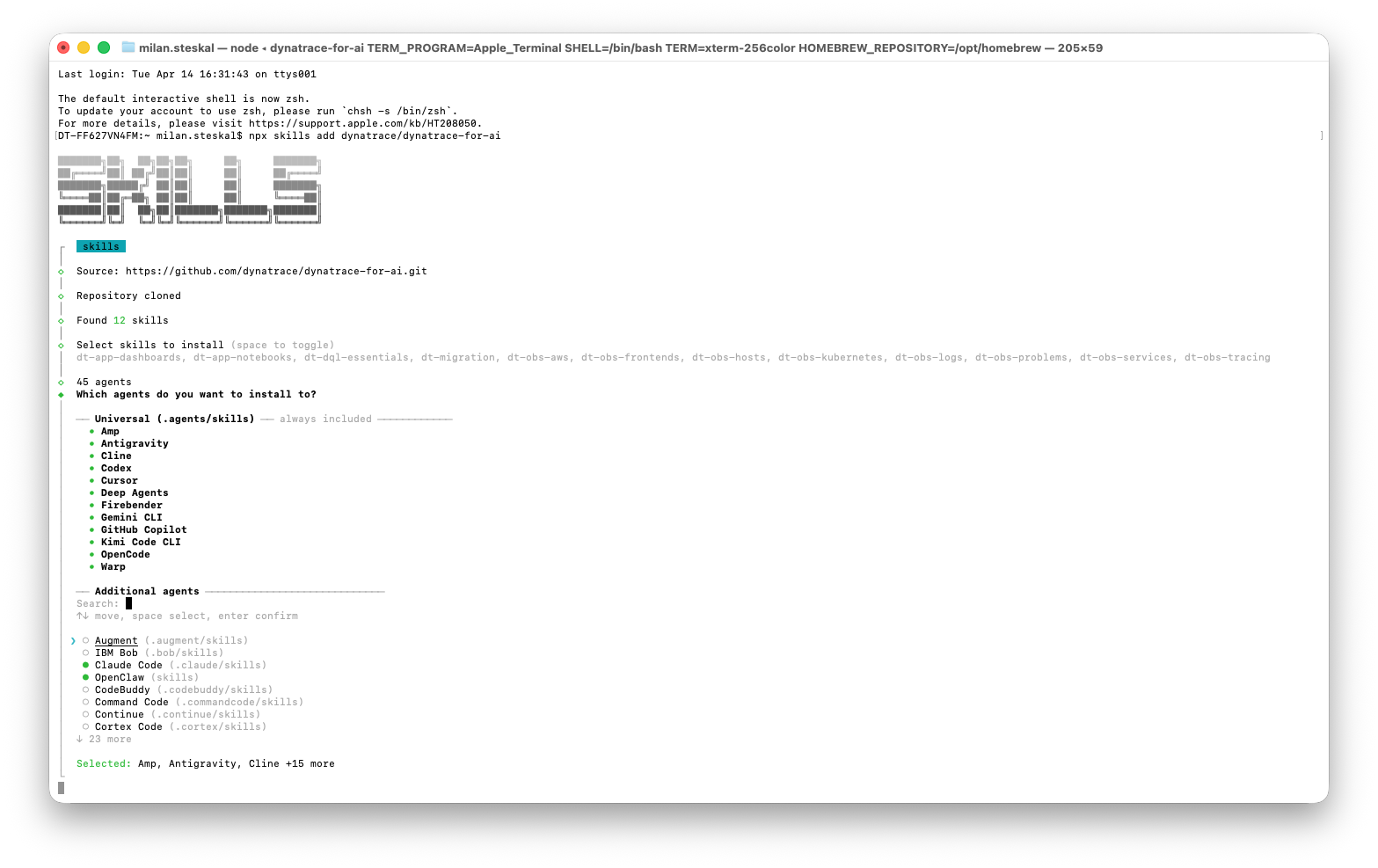

It’s super simple to install the skills and prompts in one go. Just run:

npx skills add dynatrace/dynatrace-for-ai…or activate the skills as a Claude Code plugin:

claude plugin marketplace add dynatrace/dynatrace-for-ai

claude plugin install dynatrace@dynatrace-for-aiMake sure your agent can reach Dynatrace, then try a real agent-skill task. A few good example starting prompts:

- “Compare the error rate of the checkout service over the last hour vs the same hour yesterday.”

- “Are any pods in the production namespace restarting or getting OOM-killed right now?”

- “Use the performance-regression prompt to check the deployment I just shipped.”

The difference in output quality is immediate: fewer corrections, cleaner queries, and answers that accurately reflect how Dynatrace continuously models your environment in real-time.

The Dynatrace for AI project is open source and actively developed. Issues, discussions, and pull requests are all welcome, especially from teams running agent skills against real workloads. We’d love to hear from you.

Make your agents work smarter; teach them how to use Dynatrace.

Looking for answers?

Start a new discussion or ask for help in our Q&A forum.

Go to forum