Dynatrace now supports SwiftUI, .NET MAUI, and Jetpack Compose for mobile app monitoring, allowing developers to quickly identify errors and focus on delivering the best user experience.

More development teams across enterprises are adopting new mobile UI frameworks, namely SwiftUI, .NET MAUI, and Android’s latest toolkit, Jetpack Compose. While these frameworks use a declarative syntax to simplify the codebase and expedite development lifecycles, they also introduce new challenges in monitoring the user experience of mobile apps. As a front-runner for auto-instrumentation in the observability space, Dynatrace has rolled out support for these technologies to expand its coverage for mobile applications. With mobile RUM, developers and their teams who have migrated their codebase to these new UI frameworks can take advantage of the benefits of the Dynatrace platform, which enable them to quickly identify errors and facilitate their immediate resolution. This allows developers to focus more of their efforts on innovation and delivering the best user experience to their customers.

High consumer expectations for mobile

In today’s digital world, mobile apps have become an essential part of our daily lives. We use mobile apps to communicate, entertain ourselves, conduct business, shop, and much more, on the go, anytime and anywhere. As people typically spend 4.8 hours a day on their mobile devices, it’s natural that they demand a flawless experience. Among all digital channels, mobile has the lowest tolerance for bad experiences. If an app is slow, slightly buggy, or doesn’t fulfill the user’s needs promptly, it gets deleted. In many cases, an alternative app is installed. Overall, 52% of users feel frustrated by their experiences with mobile apps.

New development frameworks from the key players

Apple, Google, and Microsoft, among others, are heavily invested in development tools and frameworks. Backed by strong communities, these tools are continuously enhanced and have redefined how apps are built. Incorporating the latest advancements in technology allows companies to develop and deliver more reliable applications, resulting in smoother user experiences. To ensure consistent progress in app development, it’s crucial to stay updated and integrate these innovations into your development process.

With the introduction of Jetpack Compose and SwiftUI, the development of mobile apps has become more accessible, efficient, and streamlined. These frameworks are based on declarative syntax, which allows developers to build native UI for Android and iOS, respectively, with ease and speed. As a result, modern mobile apps increasingly use Jetpack Compose and SwiftUI to deliver excellent user experiences. According to Google’s recent announcements, approximately 23% of the top 1,000 Android apps are built with Jetpack Compose, a share that has doubled since last year.

The importance of observability for mobile app development

An essential aspect of improving the user experience on mobile is observability. In order to make informed decisions, business owners, UX designers, and developers require data—specifically, they rely on insights from their data on a large scale, ideally in a single location. Providers of mobile apps must be able to identify issues that users are experiencing quickly so that they can address them. They also need to assess and optimize the performance of both their apps and the back end services that support them. Finally, by gaining complete visibility into user behavior, app providers can improve user journeys and achieve better outcomes for their businesses.

A key aspect of observability is the monitoring agent that a mobile app is instrumented with. This is important because the breadth, depth, and semantics of the collected data play a significant role in determining the value that can be derived from automated or ad hoc analysis.

Mobile observability challenges

Mobile agents are included and shipped with their respective mobile apps, making the selection of the right agent and its configuration crucial. Frequent changes necessitate the publication of new app versions, and successful updates are dependent on users manually updating their devices. Therefore, anything that is not monitored accurately or is monitored incorrectly leads to lengthy cycles of roll-out and adoption until accurate data and answers can be obtained.

Furthermore, companies often require time from mobile app development teams to add dependencies to their agents and configure them. However, the primary goal of these teams is to develop new features and improve existing ones, which means that monitoring user experience is often a secondary concern. Therefore, it’s crucial to minimize the time and complexity required to set up a mobile agent.

Modern development frameworks pose unique challenges when it comes to monitoring. Jetpack Compose and SwiftUI, in particular, allow developers to create UI components using declarative programming. With this approach, a developer describes the desired end result and lets the framework figure out how to update the user interface. This approach simplifies development but introduces new challenges in detecting and relating user interactions with corresponding functions and context. Recognizing on which screen a particular interaction happened and providing human-readable names automatically is challenging. However, such automation is key to ensuring that developers spend less time instrumenting their apps (and that Dynatrace users can more easily make informed decisions based on analyzed data).

Dynatrace extends auto-instrumentation capabilities

The Dynatrace platform offers the best observability coupled with the least required effort for monitoring your mobile channels. Dynatrace boasts industry-leading auto-instrumentation and auto-capture of Real User Monitoring data, regardless of whether it’s for troubleshooting, performance monitoring, or optimizing user experience and business outcomes. With auto-instrumentation, Dynatrace tackles the aforementioned challenges, offering a high level of visibility into the user experience of your mobile app with no manual effort.

Based on feedback from our development community and customers, we have expanded our auto-instrumentation support to include the following technologies:

- Jetpack Compose

- SwiftUI

- .NET MAUI

Dynatrace OneAgent® now automatically captures user interactions, related web requests, crashes, and other vital app lifecycle information when these technologies are in use (detailed functional scope varies based on the technology in use). Hence, it has never been easier to monitor the user experience of modern mobile apps.

Jetpack Compose support

Starting with the Dynatrace Android Gradle plugin version 8.263+, Dynatrace OneAgent offers auto-instrumentation for Jetpack Compose UI components.

OneAgent creates user actions based on the UI components that trigger them and automatically combines the user action data with other monitoring data, such as web request information and crashes. Currently, developers need to manually enable Jetpack Compose auto-instrumentation to activate this feature set. Starting with plugin version 8.271+, the instrumentation will be enabled by default. At the moment, we auto-capture various types of user interactions, including:

- Clickables

- Toggleables

- Swipeables

- Sliders

For complete details about Jetpack Compose UI components and user action support, go to User action monitoring for Jetpack Compose documentation.

As mentioned, analyzing user experience and identifying the specific elements with which the user interacts can be more challenging with a declarative UI. This is especially true when it comes to providing proper names and context. With the addition of Jetpack Compose support, we have developed a new method for detecting user action names. Dynatrace now evaluates four properties to generate meaningful user action names. The property value that has the most intuitive meaning is used as the name. A special sensor captures semantic information and evaluates the information from the merged semantics tree. This evaluation occurs in the following order:

- SemanticsPropertyReceiver.dtActionName

- SemanticsPropertyReceiver.contentDescription

- SemanticsPropertyReceiver.text

- Class name

With this approach, companies that use semantic properties appropriately also benefit from a better understanding of user journeys in Dynatrace user sessions.
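To make the evaluation order above concrete, here is a minimal sketch of a first-match fallback chain. It is a plain Python model, not OneAgent code: the `ComponentSemantics` class and the `resolve_action_name` function are hypothetical names, and the rule that an empty or whitespace-only value is skipped is an assumption rather than documented behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ComponentSemantics:
    """Hypothetical stand-in for the merged semantics of one UI component."""
    dt_action_name: Optional[str] = None       # models SemanticsPropertyReceiver.dtActionName
    content_description: Optional[str] = None  # models SemanticsPropertyReceiver.contentDescription
    text: Optional[str] = None                 # models SemanticsPropertyReceiver.text
    class_name: str = "Unknown"                # last-resort fallback


def resolve_action_name(semantics: ComponentSemantics) -> str:
    """Return the first usable candidate, checked in the order listed above."""
    candidates = (
        semantics.dt_action_name,
        semantics.content_description,
        semantics.text,
    )
    for candidate in candidates:
        # Assumption: blank values carry no meaning and are skipped.
        if candidate and candidate.strip():
            return candidate.strip()
    return semantics.class_name


button = ComponentSemantics(content_description="Add to cart", text="+",
                            class_name="IconButton")
print(resolve_action_name(button))
```

In this model, an icon button with a content description gets a readable name even though its visible text is only a symbol, which is why well-maintained semantic properties pay off in session analysis.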

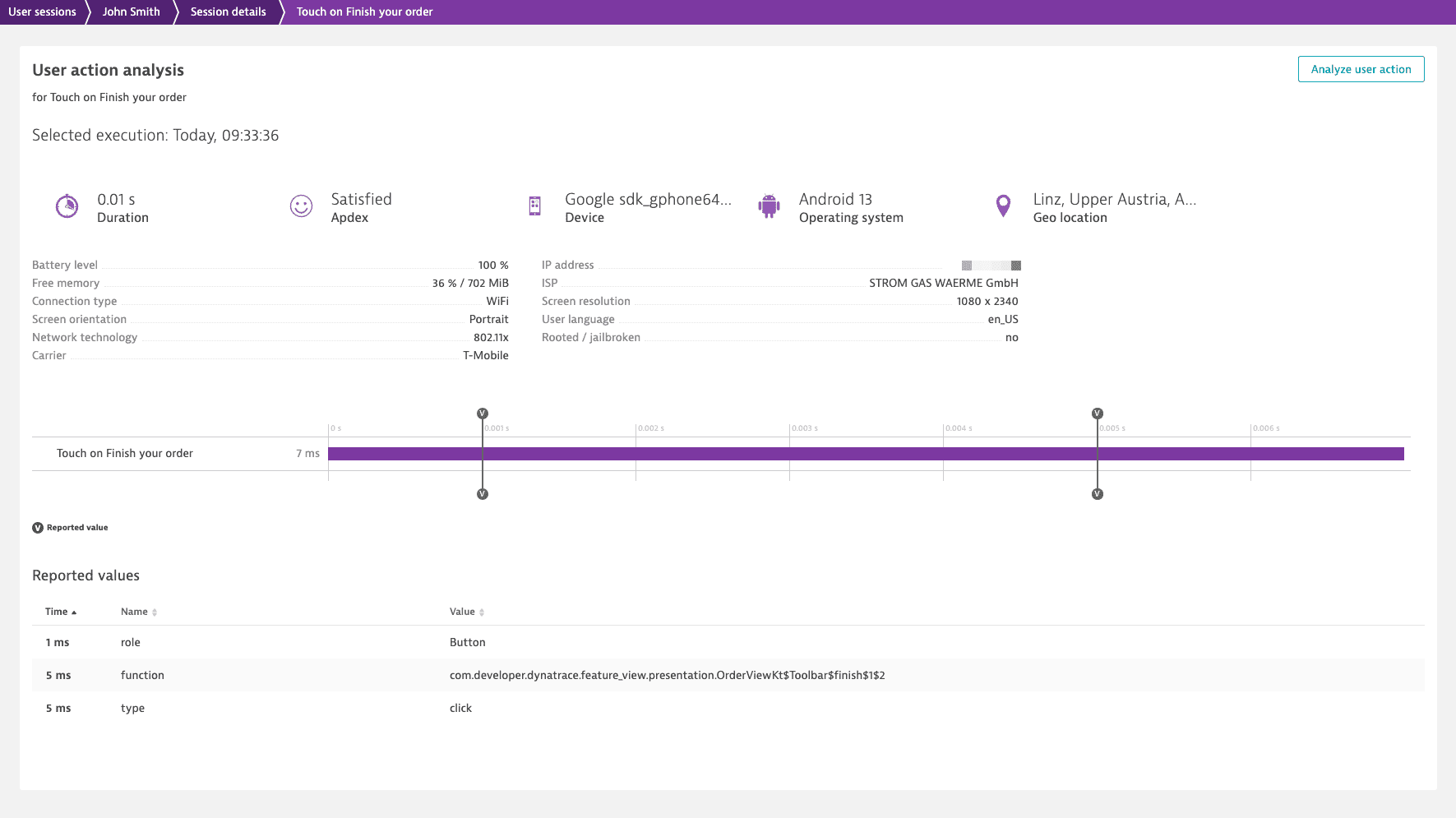

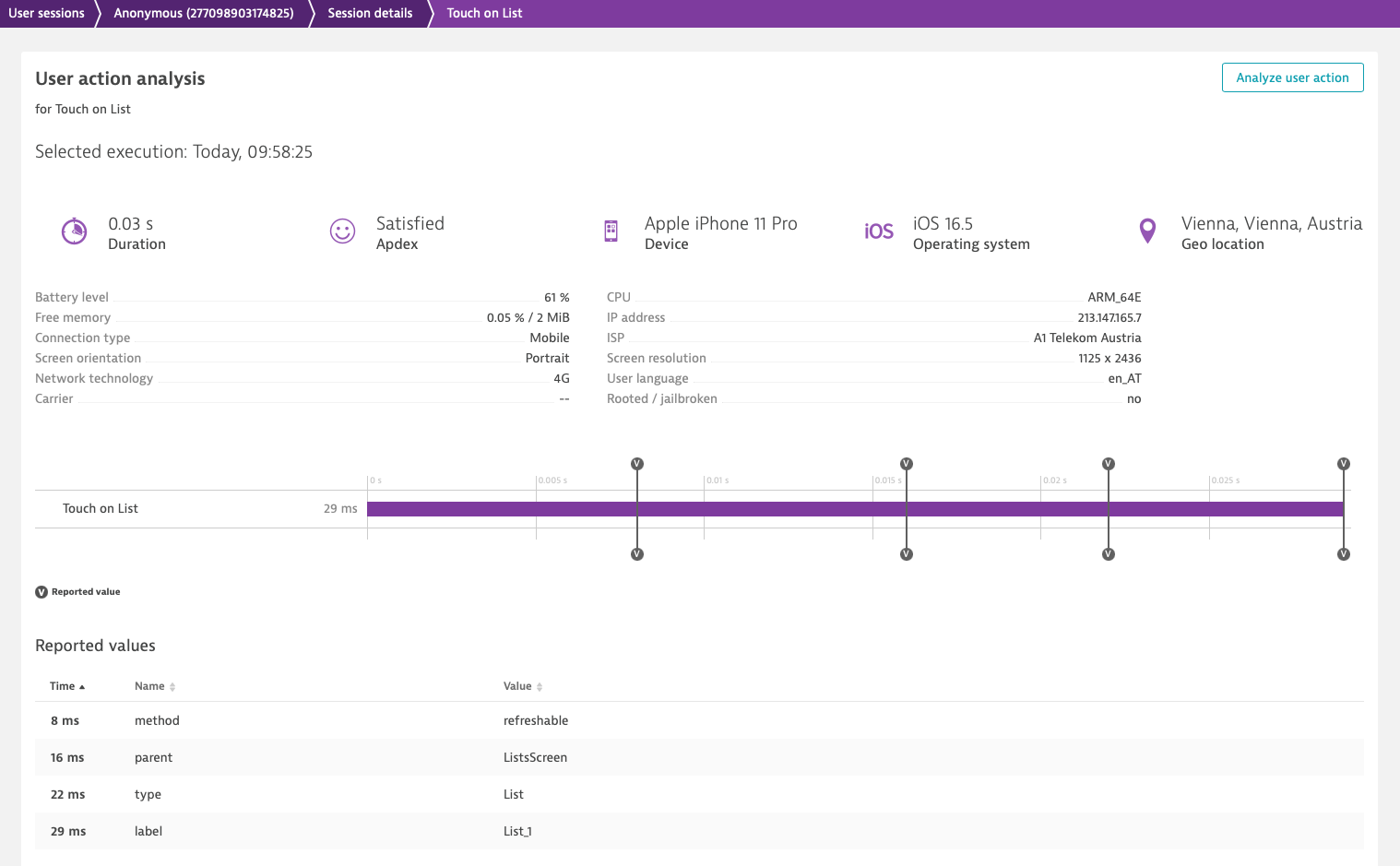

Another key addition that helps make sense of user interactions is the auto-capture of additional metadata based on the UI component used. Metadata is stored as key-value pairs and is accessible through the Dynatrace web UI. With this additional context (for example, location in code, initial and transition states, and interaction types), Dynatrace makes sense of the user journey and the technical components in use. So, not only UX designers but also developers can retrieve the information they need to optimize user experience.

Based on each UI component, Dynatrace also automatically captures relevant metadata, including:

- The function name, to make it easier to find the code behind each interaction

- The user action type, to make it easier to understand the user journey in the User session view

- The state (from/to), to make it easier to understand the evolving state of each UI component

For full details, see Captured component metadata documentation.
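As a rough illustration of what such key-value metadata could look like for a single interaction, consider the sketch below. The key names and the `build_action_metadata` helper are invented for this example and are not the keys Dynatrace actually reports; the documentation referenced above lists the real ones.

```python
from typing import Dict


def build_action_metadata(function_name: str, action_type: str,
                          from_state: str, to_state: str) -> Dict[str, str]:
    """Flatten one captured interaction into string key-value pairs.

    All key names here are hypothetical and chosen for readability only.
    """
    return {
        "function_name": function_name,  # where to look in the code
        "action_type": action_type,      # for example click, toggle, swipe, slide
        "state_from": from_state,        # component state before the interaction
        "state_to": to_state,            # component state after the interaction
    }


# A toggle that the user switches on.
metadata = build_action_metadata("NotificationSwitch", "toggle", "off", "on")
for key, value in metadata.items():
    print(f"{key}={value}")
```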

SwiftUI support

Initial support for SwiftUI controls was released with OneAgent for iOS version 8.249+. With OneAgent for iOS version 8.265+, the auto-instrumentation capabilities of SwiftUI were significantly expanded to include:

- New controls and views

- SwiftUI methods (for example, onTapGesture)

- Lifecycle monitoring

SwiftUI is a declarative UI framework. Therefore, its instrumentation and monitoring pose additional challenges.

The Dynatrace SwiftUI instrumentor adds additional code to the project source code (*.swift files) during the build process in order to enable the auto-capture of UI elements. After the build process is complete, all changes to the source code are reverted.
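The modify-build-revert cycle described above can be modeled with a small sketch. This is not the Dynatrace instrumentor: the `temporarily_instrumented` helper and the marker comment it prepends are hypothetical, and the real tool rewrites the source in far more targeted ways. The sketch only shows the general pattern of changing `*.swift` files for the duration of a build and restoring them afterward, even if the build fails.

```python
from contextlib import contextmanager
from pathlib import Path
from typing import Dict, Iterator


@contextmanager
def temporarily_instrumented(
    source_dir: Path,
    marker: str = "// instrumented (hypothetical)\n",
) -> Iterator[None]:
    """Prepend a marker to every *.swift file, then restore the originals."""
    originals: Dict[Path, str] = {}
    try:
        for swift_file in source_dir.rglob("*.swift"):
            content = swift_file.read_text(encoding="utf-8")
            originals[swift_file] = content  # remember before modifying
            swift_file.write_text(marker + content, encoding="utf-8")
        yield  # the build would run here, against the modified sources
    finally:
        # Revert every file that was touched, whether or not the build succeeded.
        for swift_file, content in originals.items():
            swift_file.write_text(content, encoding="utf-8")
```

A build script would wrap its compile step in `with temporarily_instrumented(Path("MyApp")):` so that the working copy looks untouched once the block exits.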

Auto-capture support has been expanded. For details regarding which SwiftUI controls and views are supported, see Instrument SwiftUI controls documentation.

At the moment, Dynatrace auto-captures various types of user interactions, including:

- Buttons

- Pickers

- Sliders

- NavigationLink

- TabView

In addition, Dynatrace now supports the instrumentation of some SwiftUI methods. When a supported method is called, Dynatrace collects the method name, the type of view the method was attached to, and the parent view name.

Finally, Dynatrace is now capable of tracking key lifecycle events for SwiftUI views, including:

- Application start

- onAppear (for all SwiftUI views presented from a NavigationLink or a TabView control)

- onAppear (for revisited SwiftUI views presented from a NavigationLink or a TabView control)

.NET MAUI support

Starting with Dynatrace version 1.265+, our dedicated NuGet package helps auto-instrument your .NET MAUI mobile application.

.NET MAUI is a cross-platform framework for creating native mobile apps and more. It’s a combination of open source code and the evolution of Xamarin.Forms that enables developers to use a single code base to cover as much of the app logic and UI layout as possible. The repo has been starred by ~19,000 users and receives regular updates and pull requests.

The Dynatrace NuGet package is used to instrument your mobile applications with OneAgent for Android and iOS. The supported feature set is similar to Xamarin.

Auto-instrumentation support is available for:

- User actions

- Lifecycle events

- Web requests

- Crashes

…plus, a wide range of options for manually instrumenting your app.

Conclusion

Regardless of whether you have a Dynatrace SaaS or a Dynatrace Managed deployment, update OneAgent for Mobile today to leverage the latest observability innovations and benefit from the end-to-end visibility and fast value creation that Dynatrace provides!
