On-demand

Perform 2024 is over, but you can still experience every boundary-breaking mainstage session, product announcement, and breakout session on demand.

Let’s make waves

Get inspired to shake things up with four days of technical training, industry insights, and networking.

Learn from the cloud’s brightest minds

Learn how to optimize and extend the Dynatrace platform, and hear from experts at the forefront of observability, AI, DevSecOps, and beyond.

Four days of learning

Watch keynotes, leadership sessions, customer panels with huge global brands, and deep-dive breakouts.

Mon 1/29

Training & Certifications

Kick off Perform 2024 with instructor-led Hands-on Training (HoT) sessions.

Tues 1/30

Training & Certifications

Learn how to optimize and extend the Dynatrace platform with our experts.

Partner Summit

Connect with Dynatrace partner leaders for exclusive insights and enablement.

Welcome reception

Welcome to the main event! Get to know your peers and tech experts.

Wed 1/31

Mainstage

We’re live with leadership sessions, keynotes, and customer panels.

Breakouts

Learn the latest in cloud observability, AIOps, DevSecOps, and more.

Mainstage

Get inspired by more keynotes, tech demos, and customer sessions.

Expo networking

Network and hobnob with our amazing community of experts and sponsors.

Thurs 2/1

Mainstage

Start the last day of Perform with can’t-miss keynotes and leadership sessions.

Breakouts

Break into groups to learn the trends and best practices driving the modern cloud.

Mainstage

Join us on Mainstage one last time for more huge keynotes and customer awards.

Closing event

Celebrate another awesome Perform alongside peers, customers, and experts.

See who’s making waves

Hear transformation insights, real-world challenges, and inspiring stories from the experts and disruptors pushing the limits of what’s possible in the cloud.

Rick McConnell

Chief Executive Officer

Dynatrace

Bernd Greifeneder

Chief Technology Officer

Dynatrace

Matthias Dollentz-Scharer

SVP, Chief Customer Officer

Dynatrace

Sandi Larsen

Vice President, Global Security Solutions

Dynatrace

Mark Tomlinson

Director of Observability and Performance

FreedomPay

Brian Rutherford

Vice President of Software Development

U-Haul International

Andi Grabner

DevSecOps Activist

Dynatrace

Stefano Doni

CTO

Akamas

Debbie Umbach

VP, Corporate Marketing

Dynatrace

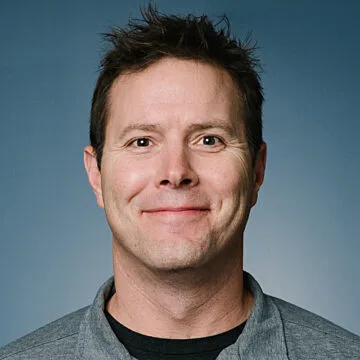

Steve Tack

SVP, Product Management

Dynatrace

On-demand

- Inspiring keynotes from tech leaders and gamechangers

- Mainstage sessions with Dynatrace leaders and customers

- Free access to all on-demand content