Distribution and communication between applications and services are central concepts in modern application architectures. To profit from distribution you have to keep some basic principles in mind; otherwise you can easily run into performance and scalability problems. During development these problems often do not surface. Then, in load testing or production, you suddenly realize that your chosen software architecture does not meet the performance and scalability requirements. In this post we will look at the major points to keep in mind when building distributed applications.

Distribution requires interaction between applications. This ranges from simple point-to-point interaction through large-scale cluster architectures to dynamic service-oriented or service-based architectures. Communication across system boundaries is also key to enabling software systems to scale and to improving availability. Modern software architectures embrace distribution as a central and necessary concept. The Java platform plays a central role here due to its wide adoption and its good API and product support. The scenarios in which distributed systems are used are manifold and range from legacy system integration (like CICS or IMS) through the integration of standard software like SAP to the integration of internal or external services. SOA promotes this approach to enable reusability and flexibility of services and applications, so that businesses can react faster to new market requirements. Additionally, trends like grid computing, virtualization and blade systems with high numbers of CPUs/cores result in more and more clustered applications. The main drivers for this are scalability and improved availability. Trends like cloud computing show that distributed service platforms will become even more popular in the future. Systems are also becoming more dynamic to increase flexibility, for example through application nodes that are added at runtime.

These trends also lead to architectures becoming more and more complex. For developers it becomes ever more difficult to understand the implications of a service call in production. This complexity and lack of knowledge easily lead to increased resource consumption (CPU, memory, network) and degraded performance.

The devil in disguise

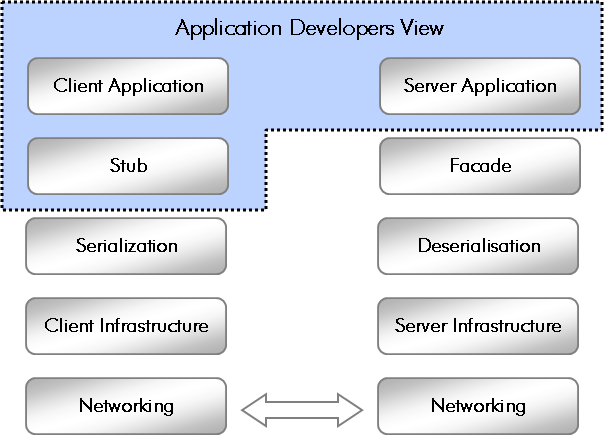

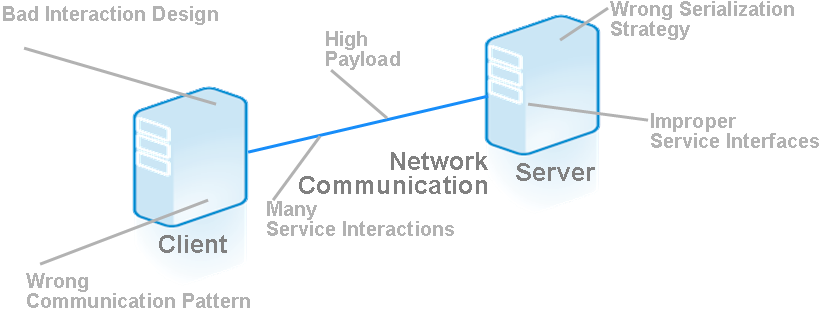

Modern remoting technologies have made the implementation of distributed applications much easier. Details of the underlying communication as well as the server- and client-side infrastructure are often transparent to the developer. Today, exposing a Java class as a service can in many cases be achieved by simply adding a single annotation to the class. Services can also be easily accessed via tool-generated proxies. As the figure below shows, however, this is only the tip of the iceberg.

The core building blocks of remoting stacks are the serialization of the object representation and the transport format. Normally the application developer does not need to care about them. However, this is where a lot of performance problems originate. Inefficient serialization means that more data is sent over the network than necessary. Complex object representations and large amounts of data result in high CPU and memory usage during serialization and deserialization.

The underlying infrastructure and its configuration have a significant impact on the performance of an application. On the client side the major aspects are connection management and the underlying threading model. The guideline for using connections in distributed applications is similar to that for database connections. Establishing a connection takes significant time, although how much depends on the protocol. Establishing an HTTPS connection, for example, is more costly than a plain TCP/IP connection. At the same time, connections are a valuable system resource. Therefore the use of connection pools is central. The right configuration is key here, as a wrong configuration can do more harm than good.
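To make the pooling idea concrete, here is a minimal sketch of a bounded connection pool. Everything in it is hypothetical: `FakeConnection` merely counts how often a connection is "opened" and stands in for a real, expensive connection, and `SimpleConnectionPool` is an illustration rather than a replacement for the pool that your remoting stack or application server already provides.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical stand-in for a connection that is expensive to establish.
class FakeConnection {
    static final AtomicInteger OPENED = new AtomicInteger();
    final int id;

    FakeConnection() { id = OPENED.incrementAndGet(); } // counts simulated handshakes

    String call(String request) { return "response to " + request + " via connection " + id; }
}

// Minimal bounded pool: connections are opened once up front and then reused.
class SimpleConnectionPool {
    private final BlockingQueue<FakeConnection> idle;

    SimpleConnectionPool(int size) {
        idle = new ArrayBlockingQueue<>(size);
        for (int i = 0; i < size; i++) idle.add(new FakeConnection());
    }

    // Waits up to timeoutMillis for a free connection instead of opening a new one.
    FakeConnection borrow(long timeoutMillis) {
        try {
            FakeConnection c = idle.poll(timeoutMillis, TimeUnit.MILLISECONDS);
            if (c == null) {
                throw new IllegalStateException("no free connection within " + timeoutMillis + " ms");
            }
            return c;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for a connection", e);
        }
    }

    void release(FakeConnection c) { idle.offer(c); }
}

class ConnectionPoolDemo {
    // Sends the given number of requests through a pool and returns how many connections were opened.
    static int run(int poolSize, int calls) {
        int before = FakeConnection.OPENED.get();
        SimpleConnectionPool pool = new SimpleConnectionPool(poolSize);
        for (int i = 0; i < calls; i++) {
            FakeConnection c = pool.borrow(100);
            try {
                c.call("request-" + i);
            } finally {
                pool.release(c); // always hand the connection back
            }
        }
        return FakeConnection.OPENED.get() - before;
    }

    public static void main(String[] args) {
        System.out.println("connections opened for 100 calls: " + run(2, 100));
    }
}
```

The pool size and the borrow timeout are exactly the kind of configuration that can help or hurt: a pool that is too small makes callers queue up or fail, while a pool that is too large ties up connections that nobody uses.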

The threading model relates to how requests are processed. A central aspect is whether requests are processed synchronously or asynchronously. Synchronous communication blocks a thread until the response is received. In asynchronous communication a callback is invoked when the response is received, which allows the thread to be used by other transactions in the meantime. On the server side, the number of available worker threads is a major configuration item to look at, as it defines the maximum number of service requests processed in parallel.
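The difference between the two styles can be sketched with plain `java.util.concurrent` classes. This is a simulation under stated assumptions, not a real remoting stack: `remoteCall` is a hypothetical method that fakes network latency with a short sleep, and the fixed thread pool plays the role of a bounded set of worker threads.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class AsyncCallDemo {
    // Hypothetical remote call; the sleep simulates network latency.
    static String remoteCall(String request) {
        try {
            Thread.sleep(50);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "reply:" + request;
    }

    // Synchronous style: the calling thread is blocked until each reply has arrived.
    static List<String> callSynchronously(List<String> requests) {
        List<String> replies = new ArrayList<>();
        for (String request : requests) {
            replies.add(remoteCall(request));
        }
        return replies;
    }

    // Asynchronous style: requests are handed to a bounded worker pool and a
    // callback processes each reply once it is available.
    static List<String> callAsynchronously(List<String> requests, int workerThreads) {
        ExecutorService workers = Executors.newFixedThreadPool(workerThreads);
        try {
            List<CompletableFuture<String>> pending = new ArrayList<>();
            for (String request : requests) {
                pending.add(CompletableFuture
                        .supplyAsync(() -> remoteCall(request), workers)
                        .thenApply(reply -> reply.toUpperCase())); // callback on completion
            }
            // The caller is free to do other work here; this demo simply collects the results.
            List<String> replies = new ArrayList<>();
            for (CompletableFuture<String> future : pending) {
                replies.add(future.join());
            }
            return replies;
        } finally {
            workers.shutdown();
        }
    }

    public static void main(String[] args) {
        List<String> requests = List.of("a", "b", "c", "d");
        System.out.println(callSynchronously(requests));
        System.out.println(callAsynchronously(requests, 4));
    }
}
```

The `workerThreads` parameter mirrors the worker-thread setting discussed above: it caps how many calls are in flight at once, so a value that is too low serializes the requests again.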

Last but not least, the network itself is a central component of distributed applications. The network is a highly critical bottleneck resource, and it limits scalability even more than it affects performance. This area is often ignored during development, as no real networking is involved there.

The beauty of remoting technologies is …

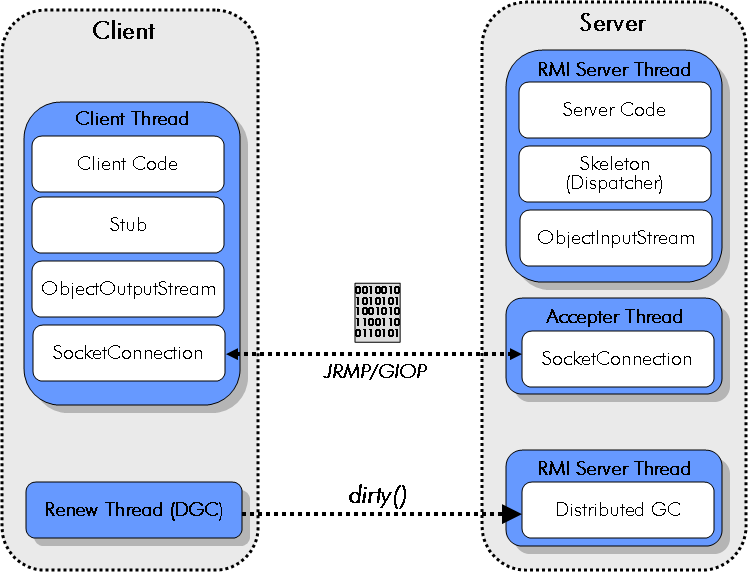

… that there are so many to choose from ;-). Java offers a huge variety of options and technologies for implementing distributed applications. The choice of a remoting technology already significantly influences the architecture as well as the performance and scalability of an application. The “oldest” and presumably most widely used remoting protocol is RMI (see figure below). RMI is the standard protocol for Java EE applications. As the name implies, it is designed for invoking methods of objects hosted in other JVMs. Objects are exposed on the server side and can then be invoked from clients via proxies. The same server object is used by multiple threads, and the thread pool is managed by the RMI infrastructure. Communication is handled via TCP/IP; the protocol used is JRMP or, for RMI over IIOP, GIOP (the CORBA protocol). Application server vendors also offer their own proprietary protocols which are optimized for performance.

As references to the server side have to be managed as well, the RMI infrastructure also provides a specific garbage collector to manage remote references. The distributed garbage collector (DGC) itself makes use of the RMI protocol for managing the lifecycle of server-side objects. Besides the strong coupling of client and server, RMI comes with a number of further implications. RMI only supports synchronous communication, with all the disadvantages discussed above. Additionally, no lower-level caching can be used for data-driven services, as RMI is based on a binary protocol. Developers and system architects can influence the configuration parameters of the infrastructure to optimize performance.

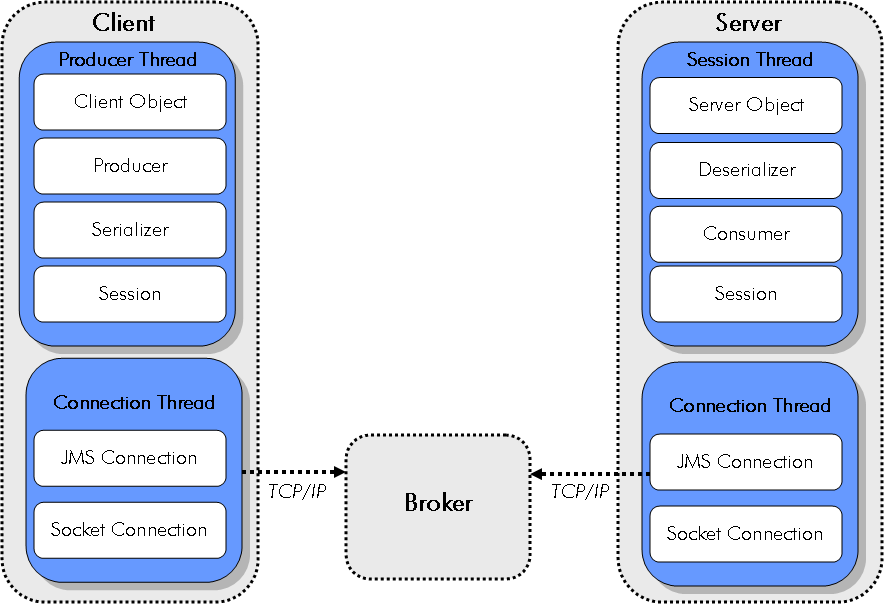

JMS (Java Message Service) is the second widely used protocol in the Java EE space (see figure below). In contrast to RMI, JMS is an asynchronous protocol. The communication is based on queues or topics, where listeners are used to react to messages. JMS is not a classical remote procedure call protocol but still fits well for service-to-service interactions. In many ESB implementations, which often act as the central point in SOAs, a JMS-based middleware is used to exchange messages between services. Due to its asynchronicity, the typical problems of synchronous processing can be avoided. In many systems a central aspect of scalability is to free up resources (like threads) very quickly. Asynchronous processing approaches are in many cases the only suitable way to do that.

JMS offers a number of different transport formats. XML is the most commonly used message format, but binary formats are also possible. The design of the message structure must be a central part of the application architecture, as it directly influences performance and scalability.

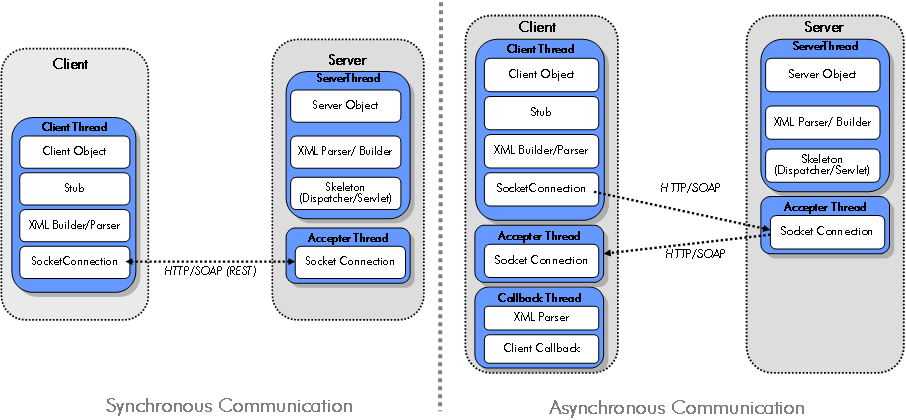

Web Services via SOAP (see figure below) and the related WS-* specifications are continuously gaining importance in the Java enterprise space. SOAP was designed to provide an alternative to CORBA and had strong industry support from the beginning. Because of the interoperability effort around WS-I it is possible to (more or less) easily connect different platforms to each other. SOAP is an XML-based RPC protocol which is often criticized for consuming lots of bandwidth.

More and more often, REST-based services come into play as an alternative to SOAP. REST services in Java are specified by JSR 311 and are based on the basic operations supported by HTTP. REST, however, is not designed to be used as an RPC protocol. It is rather resource oriented and designed for accessing and manipulating (web) resources. Both protocols support synchronous communication, which is also mandated by the underlying HTTP protocol. The WS-Addressing extension for SOAP, however, also allows the implementation of asynchronous services. A big advantage of REST is the ability to easily implement caching by using HTTP proxies, since REST relies on mechanisms that are available anyway via the underlying HTTP protocol.

What can go wrong

Potential problems can arise at various points in a distributed application, as shown in the figure below. On the client side the major problem areas are bad interaction design, meaning too many service calls, and choosing the wrong communication pattern. Long-running synchronous transactions can easily lead to performance problems. On the communication level, high network load caused by large amounts of data and a high number of service invocations is the main problem. On the server side, inadequately designed service interfaces and improper serialization strategies lead to performance and scalability problems. We will now take a closer look at those problem areas.

Anti Pattern: Wrong Protocol

The choice of the right communication protocol strongly depends on the overall system architecture as well as the underlying requirements. If you are working in a heterogeneous environment where mainframe, Java and .NET components have to interact with each other, there is hardly any way around SOAP today. In a pure Java environment, RMI over JRMP is still the best-performing and most scalable solution you can get with out-of-the-box programming support. In many SOA implementations, however, SOA is used synonymously with a Web-Service-based implementation. So there are more and more cases where plain Java applications use SOAP as an RPC protocol although there are no advantages in following this approach. Measurements have shown that the overhead of SOAP compared to RMI-JRMP is significant. Performance degradation by a factor of ten and significantly higher CPU and memory consumption occur quite often.

Besides the standard protocols described above, a number of other XML-based and binary protocols are used in applications. Hessian is a well-performing alternative, and implementations of it also exist for other programming languages. If Spring is used to export POJOs for remote invocation, it is relatively easy to switch between different protocols without having to change the implementation. Spring supports RMI, HTTP, Hessian, Burlap, JAX-RPC, JAX-WS and JMS.

Anti Pattern: Chatty Application

One of the core principles when building distributed applications is to make as few remote calls as possible. Every remote call implies serialization overhead, connection establishment overhead and additional load on the network. Additionally, the resource consumption in terms of CPU, memory and network restricts scalability. Therefore it is essential to design the interfaces of remote applications in a way that ensures only a minimum number of service interactions is necessary. Applications which were originally built to be used locally and are then distributed for scalability reasons suffer especially from a high number of service interactions. In most cases these problems surface in load testing or production; during local testing in development, everything seems to be working fine. By using proper performance management approaches, remote behavior can already be analyzed during development to avoid these problems.
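One lightweight way to see chattiness during development is to count the calls that cross a service interface. The following sketch does this with a JDK dynamic proxy. `PriceService`, `CallCounter` and `ChattyDemo` are hypothetical names made up for illustration, and a dedicated performance management tool will of course capture far more than a call count.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical service interface; in production each call would be a remote round trip.
interface PriceService {
    double priceOf(String articleId);
}

class CallCounter {
    // Wraps a service so that every invocation made through the interface is counted.
    static <T> T counting(Class<T> type, T target, AtomicInteger counter) {
        InvocationHandler handler = (proxy, method, args) -> {
            counter.incrementAndGet();
            return method.invoke(target, args);
        };
        return type.cast(Proxy.newProxyInstance(
                type.getClassLoader(), new Class<?>[] { type }, handler));
    }
}

class ChattyDemo {
    // Prices a shopping cart article by article and returns the number of service calls made.
    static int callsForCart(String... articleIds) {
        AtomicInteger counter = new AtomicInteger();
        PriceService real = articleId -> 9.99; // stand-in for the remote implementation
        PriceService service = CallCounter.counting(PriceService.class, real, counter);

        double total = 0;
        for (String articleId : articleIds) {
            total += service.priceOf(articleId); // one (simulated) remote call per article
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println("service calls for a 3-article cart: " + callsForCart("a", "b", "c"));
    }
}
```

A count that grows linearly with the size of the input, as it does here, is usually the first hint that an interface will not behave well once the calls really go over the network.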

Based on this analysis, interfaces can be refactored and the application logic can be redesigned to reduce the number of remote calls. Possible approaches are to combine the logic of several methods, or to use data containers where several calls were previously required for passing objects around. Creating data locality also helps to reduce remote calls, as data is available where it is needed. Especially when data is read, the use of caches can massively improve performance and scalability. It is essential to consider service distribution and the implied communication early in software design if it is, or will become, a requirement.
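Two of these refactorings, a coarse-grained method that returns a data container and a client-side read cache, can be sketched as follows. `CatalogService` and `CachingCatalogClient` are hypothetical classes, the `remoteCalls` field only simulates round trips, and the cache deliberately ignores invalidation, which a real application would have to handle.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical remote catalog; remoteCalls counts the simulated round trips.
class CatalogService {
    int remoteCalls = 0;

    // Fine-grained method: one round trip per article.
    double priceOf(String articleId) {
        remoteCalls++;
        return articleId.length() * 1.5; // dummy price
    }

    // Coarse-grained method: one round trip returns a data container with all prices.
    Map<String, Double> pricesOf(List<String> articleIds) {
        remoteCalls++;
        Map<String, Double> prices = new HashMap<>();
        for (String articleId : articleIds) {
            prices.put(articleId, articleId.length() * 1.5);
        }
        return prices;
    }
}

// Client-side read cache: repeated reads of the same article trigger no further remote call.
class CachingCatalogClient {
    private final CatalogService remote;
    private final Map<String, Double> cache = new HashMap<>();

    CachingCatalogClient(CatalogService remote) {
        this.remote = remote;
    }

    double priceOf(String articleId) {
        // Only a cache miss results in a call to the remote service.
        return cache.computeIfAbsent(articleId, remote::priceOf);
    }
}

class FewerCallsDemo {
    public static void main(String[] args) {
        CatalogService bulk = new CatalogService();
        bulk.pricesOf(Arrays.asList("a", "bb", "ccc"));
        System.out.println("round trips with data container: " + bulk.remoteCalls);

        CatalogService cached = new CatalogService();
        CachingCatalogClient client = new CachingCatalogClient(cached);
        client.priceOf("a");
        client.priceOf("a");
        client.priceOf("bb");
        System.out.println("round trips with cache: " + cached.remoteCalls);
    }
}
```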

Anti Pattern: Big Messages

Invoking remote services always implies that data is exchanged via some protocol. In the case of SOAP this is XML; in the case of RMI it is binary data. With most technologies, the data of objects or the objects themselves are exchanged. In most cases this serialization happens “under the hood”, deep in the remoting implementation. The overhead of serialization is proportional to the size of the transferred objects.

In one real-world case we ran into a serialization overhead of 98 percent. How come? The interface of an authentication service required a user object for authorization. This object contained not only the required username and password information, but also many other fields with references to data relevant for other use cases. Standard SOAP serialization consequently created messages with multiple kilobytes of data. This data had to be parsed by the service and mapped to the user object structure, resulting in massive CPU and memory usage. The solution was more than obvious: the interface was refactored to require only the user ID and the password. So besides choosing the right remoting technology, message content design is essential for building well-performing and scalable applications. Very often the nice generic object that fits perfectly into the design comes with a high performance penalty.
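The effect is easy to reproduce in miniature. The sketch below uses standard Java serialization instead of SOAP (an assumption made to keep the example self-contained) and compares the serialized size of a hypothetical "generic" user object with that of a lean credentials object. The classes `FullUser` and `Credentials` and their contents are invented for illustration and are not taken from the project described above.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical "generic" user object carrying data for many unrelated use cases.
class FullUser implements Serializable {
    private static final long serialVersionUID = 1L;
    String userId = "jdoe";
    String password = "secret";
    List<String> orderHistory = new ArrayList<>();
    List<String> addresses = new ArrayList<>();

    FullUser() {
        for (int i = 0; i < 200; i++) orderHistory.add("order-" + i);
        for (int i = 0; i < 5; i++) addresses.add(i + " Example Street, Example City");
    }
}

// Lean request object containing only what authentication actually needs.
class Credentials implements Serializable {
    private static final long serialVersionUID = 1L;
    final String userId;
    final String password;

    Credentials(String userId, String password) {
        this.userId = userId;
        this.password = password;
    }
}

class MessageSizeDemo {
    // Returns the number of bytes standard Java serialization produces for the object.
    static int serializedSize(Serializable object) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(object);
            }
            return bytes.size();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("full user object: " + serializedSize(new FullUser()) + " bytes");
        System.out.println("credentials only: " + serializedSize(new Credentials("jdoe", "secret")) + " bytes");
    }
}
```

Running a comparison like this against your own message types is a cheap way to spot objects that drag along far more data than the service on the other side will ever read.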

Anti Pattern: Distributed Deployment

Distributed Java EE often results from the segmentation of an application into services and a number of deployment units, which then get deployed on a number of application servers. This distribution has advantages such as looser coupling of components, so that a new release of one deployment package does not necessarily require the redeployment of other components. Another advantage is better scalability, as heavily used services can be deployed on separate hardware or be deployed multiple times.

In complex application landscapes with a high number of deployment units, the interaction of services becomes more and more difficult to understand. This can lead to a situation where two heavily interacting services are deployed on different hardware, resulting in a high number of remote calls. In large-scale applications it is therefore essential to analyze the frequency of interactions as well as the data volume, and to structure the deployment accordingly. In many cases moving from a distributed to a local deployment leads to significant performance improvements without losing any flexibility or scalability options. Especially for stateless services it is a reasonable approach to deploy them on several nodes to improve locality. In a real-world example, multiple installations of an insurance system led to enormous resource savings and improved performance. Before the change, each request had involved multiple remote calls.
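A very simple form of such an analysis is to tally how often services on different nodes call each other. The sketch below assumes that you already have a list of recorded calls (caller and callee) and a mapping from services to nodes. Both inputs, like the class name `DeploymentAnalysis`, are hypothetical, and data volume is left out for brevity.

```java
import java.util.HashMap;
import java.util.Map;

class DeploymentAnalysis {
    // Counts recorded calls between services that are deployed on different nodes.
    // calls: each entry is {caller, callee}; nodeOf: service name -> node name.
    static Map<String, Integer> crossNodeCalls(String[][] calls, Map<String, String> nodeOf) {
        Map<String, Integer> counts = new HashMap<>();
        for (String[] call : calls) {
            String caller = call[0];
            String callee = call[1];
            if (!nodeOf.get(caller).equals(nodeOf.get(callee))) {
                counts.merge(caller + " -> " + callee, 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, String> nodeOf = new HashMap<>();
        nodeOf.put("orders", "node1");
        nodeOf.put("billing", "node1");
        nodeOf.put("pricing", "node2");

        String[][] calls = {
            {"orders", "pricing"}, {"orders", "pricing"}, {"orders", "billing"}
        };
        // Pairs with a high count are candidates for co-location on the same node.
        System.out.println(crossNodeCalls(calls, nodeOf));
    }
}
```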

Conclusion

The different anti-patterns show that it is essential to consider scalability in the early design phase of an application. It is a key driver for the application architecture; improving performance and scalability later on results in much harder work in many, or even most, cases. A detailed analysis of the application in production is indispensable in order to identify frequent remote calls or large data volumes and to optimize the application accordingly. If you run across similar or different problems, please let me know, so I can extend my anti-pattern catalog.

Credits

The contents of this article were originally created together with Mirko Novakovic of codecentric for our performance series in the German JavaMagazin.
