Modern enterprise and consumer applications increasingly depend on third-party services to deliver a fast, seamless, and highly available experience for end users. As applications grow more complex, it has become both critical and difficult for IT staff to make sure these services are running and communicating as intended. As a result, API monitoring has become a must for DevOps teams.

So what is API monitoring? To answer that, it helps to understand what an API is. An application programming interface (API) is a set of definitions and protocols for building and integrating application software, enabling your product to communicate with other products and services. A web API exposes one or more web addresses, known as API endpoints, which serve as the channel through which a consuming application and the API communicate.

What is API monitoring?

API monitoring is the process of collecting and analyzing data about the performance of an API to identify problems that impact users. If an application is running slowly, you need to understand the cause before you can correct it. Modern applications use many independent microservices instead of a few large ones, and one poorly performing service can degrade the overall performance of an application. In addition, isolating a single poorly performing service among hundreds is difficult without proper monitoring. This makes API monitoring, and measuring API performance, a crucial practice in modern multicloud environments.

What is an API?

An application programming interface (API) serves as a bridge between different software components, allowing them to communicate and exchange data, features, and functionality. Think of it as a set of rules or protocols that enable seamless interaction between software applications. APIs empower developers to create robust, interconnected software systems by providing a standardized way to access functionality across different components.

The need for API monitoring

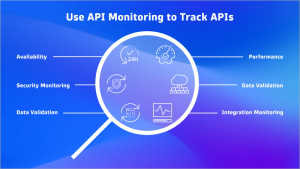

API monitoring captures and analyzes metrics that describe the vital aspects of an application’s performance, which helps developers better understand the health and efficiency of the APIs they use.

To understand why API monitoring matters, consider a website that provides weather information using APIs from a weather forecasting service. If that API is slow, the web pages that display weather information will load slowly or even fail, frustrating users.

In addition to performance monitoring, your team may want insights into how developers are using APIs, such as which functions they call most often. This shows which areas need improvement or updating. For example, some developers may still be using an old version of an API that will soon be deprecated; in that case, IT teams need to plan a migration to the newest available version of that API.

API monitoring is especially important when you rely on components you don’t control, such as third-party payment services, customer relationship management (CRM) services like Salesforce, and internal services that other teams in your organization provide for specialized processes. The growing popularity of these highly modular services drives the need for better monitoring solutions.

In addition, API monitoring can provide information on the extent to which business functions depend on APIs as well as the impact of using those APIs on business objectives and key performance indicators (KPIs).

Benefits of API monitoring

- Early detection of issues

APIs are the backbone of modern applications, enabling seamless communication between services. However, they can be prone to latency, errors, or unexpected behavior. API monitoring allows you to detect these problems early, preventing them from affecting end users. You can proactively address issues before they escalate by monitoring key metrics like response time, error rates, and throughput.

- Performance optimization

Monitoring APIs provides insights into their performance. You can track response times, identify bottlenecks, and optimize resource usage. For example:

Response time: Monitoring helps you ensure that APIs respond within acceptable time limits. Slow APIs can impact user experience and overall system performance.

Throughput: Monitoring throughput shows how many requests your APIs handle over time. Combined with response times and error rates, it guides capacity planning and scaling decisions.

Error rates: High error rates indicate issues that need immediate attention. Monitoring helps you pinpoint the root cause and fix it promptly.
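The three metrics above can be computed from the requests observed in a time window. The following is a minimal sketch, assuming each record carries a latency in milliseconds and an HTTP status code, and counting only 5xx responses as errors (your definition of an error may differ). `RequestRecord` and `summarize` are hypothetical names used for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    latency_ms: float  # time the API took to respond
    status: int        # HTTP status code returned


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(records, window_s):
    """Response time, throughput, and error rate for one time window."""
    latencies = [r.latency_ms for r in records]
    server_errors = sum(1 for r in records if r.status >= 500)
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "throughput_rps": len(records) / window_s,
        "error_rate": server_errors / len(records),
    }
```

Percentiles such as p95 are a common choice for response time because they describe the slowest requests directly instead of blending them into an average.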

- Security and compliance

APIs are vulnerable to security threats such as unauthorized access, injection attacks, or data leaks. Monitoring helps you:

Detect anomalies: Monitor for unusual patterns in API traffic, which could indicate security breaches.

Rate limiting: Verify that rate limits are being enforced and identify clients that hit them, which helps prevent abuse and denial-of-service (DoS) attacks.

Compliance: Ensure APIs adhere to security standards (for example, OAuth, JWT) and regulatory requirements (for example, GDPR).
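Monitoring can flag clients whose request volume looks abusive, whether or not a gateway is already rejecting them. A minimal sketch using a per-client sliding window, assuming a hypothetical stream of (client ID, timestamp) events with non-decreasing timestamps per client. `ClientRateMonitor` is illustrative; it only observes traffic and leaves enforcement to your API gateway.

```python
from collections import defaultdict, deque


class ClientRateMonitor:
    """Flags clients whose request count in a sliding window exceeds a limit.

    This only observes traffic; enforcing the limit is left to the gateway.
    """

    def __init__(self, limit, window_s):
        self.limit = limit
        self.window_s = window_s
        self._events = defaultdict(deque)  # client_id -> recent request timestamps

    def record(self, client_id, ts):
        """Record one request; return True if the client is now over the limit."""
        events = self._events[client_id]
        events.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while events and events[0] <= ts - self.window_s:
            events.popleft()
        return len(events) > self.limit
```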

- Dependency management

Modern applications rely on internal and external APIs. Monitoring helps you manage dependencies effectively. For example:

Dependency health: Monitor third-party APIs to ensure they’re available and performing well.

Version compatibility: Track API versions and deprecations to avoid breaking changes.
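Tracking which API versions your traffic still targets shows how much work a deprecation will cause. A minimal sketch, assuming versions appear as a `/vN/` path prefix and that the hypothetical `DEPRECATED_VERSIONS` set holds the versions a provider has announced it will retire. Some APIs version through headers or query parameters instead, so the parsing would need to change.

```python
import re
from collections import Counter

# Hypothetical deprecation list; replace with your providers' announced schedules.
DEPRECATED_VERSIONS = {"v1"}
VERSION_RE = re.compile(r"^/(v\d+)/")


def version_usage(paths):
    """Count calls per API version, assuming a /vN/ path prefix."""
    counts = Counter()
    for path in paths:
        match = VERSION_RE.match(path)
        counts[match.group(1) if match else "unversioned"] += 1
    return counts


def deprecated_share(counts):
    """Fraction of calls that still target a deprecated version."""
    total = sum(counts.values())
    old = sum(n for version, n in counts.items() if version in DEPRECATED_VERSIONS)
    return old / total if total else 0.0
```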

- Business insights

API monitoring provides valuable business insights, such as:

Usage patterns: Understand which APIs are heavily used and which are underutilized.

Billing and cost optimization: Monitor API usage to optimize costs and prevent unexpected bills.
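Monitoring usage during the month lets you project a bill before it arrives. A minimal sketch, assuming a hypothetical pricing model (a flat price per 1,000 calls beyond a free allowance) and straight-line extrapolation of month-to-date usage; real provider pricing is often tiered.

```python
def project_monthly_cost(calls_so_far, days_elapsed, days_in_month,
                         price_per_1k, free_calls=0):
    """Extrapolate month-to-date API usage linearly to a projected bill.

    Pricing model (an assumption): a flat price per 1,000 calls beyond a
    free allowance. Returns (projected_calls, projected_cost).
    """
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    projected_calls = calls_so_far / days_elapsed * days_in_month
    billable_calls = max(0.0, projected_calls - free_calls)
    return projected_calls, billable_calls / 1000 * price_per_1k


# Hypothetical numbers: 300,000 calls in the first 10 days of a 30-day month.
calls, cost = project_monthly_cost(300_000, 10, 30, price_per_1k=0.50, free_calls=100_000)
print(f"projected calls: {calls:,.0f}, projected cost: ${cost:.2f}")
# prints: projected calls: 900,000, projected cost: $400.00
```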

- SLA adherence

Service-level agreements (SLAs) define acceptable performance levels for APIs. Monitoring helps you track adherence to SLAs and take corrective actions if needed.
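An availability target translates directly into an error budget: a 99.9% target over 30 days (43,200 minutes) allows about 43.2 minutes of downtime. A minimal sketch that compares availability measured by periodic synthetic checks with such a target, assuming each failed check stands for one full check interval of downtime. `sla_report` is a hypothetical name.

```python
def sla_report(total_checks, failed_checks, sla_target, check_interval_min=1.0):
    """Compare availability measured by periodic checks with an SLA target.

    Assumes each failed check represents one full interval of downtime.
    """
    measured = (total_checks - failed_checks) / total_checks
    budget_min = (1 - sla_target) * total_checks * check_interval_min
    used_min = failed_checks * check_interval_min
    return {
        "availability": measured,
        "meets_sla": measured >= sla_target,
        "error_budget_min": budget_min,
        "budget_remaining_min": budget_min - used_min,
    }


# Hypothetical month: one check per minute for 30 days, 30 failed checks.
report = sla_report(total_checks=30 * 24 * 60, failed_checks=30, sla_target=0.999)
```

Tracking the remaining budget, rather than only a pass/fail flag, shows how close you are to breaching the SLA before it happens.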

- Alerting and incident response

Set up alerts based on predefined thresholds (for example, high error rates and prolonged response times). When anomalies occur, receive notifications and take immediate action.
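Requiring several consecutive breaches before alerting separates a prolonged slowdown from a single slow request and reduces alert noise; Prometheus alerting rules express a similar idea with their `for` clause. A minimal sketch; `SustainedThresholdAlert` and the threshold values are hypothetical.

```python
class SustainedThresholdAlert:
    """Fires once a metric stays above a threshold for N consecutive samples."""

    def __init__(self, threshold, consecutive):
        self.threshold = threshold
        self.consecutive = consecutive
        self._breaches = 0
        self.firing = False

    def observe(self, value):
        """Feed one sample; return True only on the sample that starts an alert."""
        if value > self.threshold:
            self._breaches += 1
        else:
            self._breaches = 0
            self.firing = False  # the alert resolves once the metric recovers
        if self._breaches >= self.consecutive and not self.firing:
            self.firing = True
            return True
        return False


# Hypothetical: alert when response time exceeds 500 ms for 3 samples in a row.
alert = SustainedThresholdAlert(threshold=500, consecutive=3)
```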

Challenges of API monitoring

Diverse ecosystems: Modern applications rely on a mix of internal and external APIs, which might be built using different technologies, protocols, and standards. Monitoring this diverse ecosystem requires tools that handle various formats and communication patterns.

Dynamic environments: APIs operate in dynamic environments where services scale up or down based on demand. Monitoring tools must adapt to these changes and provide real-time insights without causing performance overhead.

Latency and performance: Instrumentation and monitoring agents can add latency and resource overhead to the APIs they observe. Balancing the need for detailed monitoring against minimal impact on API performance is challenging, so tools must collect relevant metrics efficiently.

Rate limiting and throttling: APIs often impose rate limits to prevent abuse. Monitoring tools must account for these limits and avoid exceeding them during monitoring. Otherwise, they risk disrupting the very APIs they’re monitoring.
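One way a synthetic monitor can avoid adding to throttling is to back off when the API says so. HTTP defines the `Retry-After` response header, whose value is either a number of seconds or an HTTP-date. A minimal sketch, assuming the monitor reschedules itself after every check; `next_check_delay` is a hypothetical name.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def next_check_delay(status, retry_after, base_interval_s, now=None):
    """Seconds a synthetic monitor should wait before its next request.

    On 429 (Too Many Requests) or 503 (Service Unavailable), honor the
    Retry-After header, which is either delay-seconds or an HTTP-date.
    Never poll faster than the normal interval.
    """
    if status in (429, 503) and retry_after:
        value = retry_after.strip()
        if value.isdigit():
            wait = float(value)
        else:
            if now is None:
                now = datetime.now(timezone.utc)
            wait = (parsedate_to_datetime(value) - now).total_seconds()
        return max(float(base_interval_s), wait)
    return float(base_interval_s)
```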

Security and authentication: Monitoring APIs requires proper authentication and authorization. Tools must handle API keys, tokens, and security protocols (for example, OAuth) to access protected endpoints.

Data volume and retention: APIs generate substantial data, including request/response payloads, logs, and metrics. Managing this data volume efficiently while retaining historical information for analysis is challenging.
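A common way to keep long histories affordable is to roll raw samples up into coarser aggregates. A minimal sketch, assuming raw samples arrive as (timestamp in seconds, latency in milliseconds) pairs; `rollup_per_minute` is a hypothetical name.

```python
from collections import defaultdict


def rollup_per_minute(samples):
    """Collapse raw (timestamp_s, latency_ms) samples into per-minute aggregates.

    Keeping count, sum, and max instead of every sample shrinks storage while
    still allowing averages and worst cases to be reported later.
    """
    buckets = defaultdict(lambda: {"count": 0, "sum_ms": 0.0, "max_ms": 0.0})
    for ts, latency_ms in samples:
        bucket = buckets[int(ts // 60) * 60]  # key: start of the minute
        bucket["count"] += 1
        bucket["sum_ms"] += latency_ms
        bucket["max_ms"] = max(bucket["max_ms"], latency_ms)
    return dict(sorted(buckets.items()))
```

Count, sum, and max cannot reproduce percentiles after the fact; if you need p95 over long periods, storing a latency histogram per bucket is a common alternative.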

Complex dependencies: APIs interact with other services, databases, and third-party APIs. Monitoring tools must trace these dependencies to identify bottlenecks or failures accurately.
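Tracing a request across services relies on passing a trace identifier along with every downstream call. One widely used standard for this is the W3C Trace Context `traceparent` header. The sketch below only illustrates the header format; in practice, instrumentation libraries such as OpenTelemetry create and propagate it for you. `make_traceparent` is a hypothetical name.

```python
import secrets


def make_traceparent(trace_id=None, sampled=True):
    """Build a W3C Trace Context `traceparent` header value.

    Format: version-traceid-parentid-flags, e.g. 00-<32 hex>-<16 hex>-01.
    Reuse the incoming trace_id when calling a dependency so both calls
    land in the same trace; each hop gets a fresh parent ID.
    """
    trace_id = trace_id or secrets.token_hex(16)  # 16 random bytes -> 32 hex chars
    parent_id = secrets.token_hex(8)              # 8 random bytes -> 16 hex chars
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{parent_id}-{flags}"
```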

Error handling and alerts: Monitoring tools should detect anomalies, errors, and performance degradation. Configuring meaningful alerts and integrating them with incident response workflows is crucial.

Versioning and compatibility: APIs evolve. Monitoring tools must handle version changes, deprecations, and backward compatibility. Tracking which versions are in use and when to migrate is essential.

Multi-protocol support: APIs use various protocols and architectural styles (REST, SOAP, GraphQL, etc.). Monitoring tools must understand and interpret each of them to extract relevant information.
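GraphQL shows why protocol awareness matters: many GraphQL servers report failures in an `errors` array of the JSON body while still returning HTTP 200, so a status-code check alone would miss them. A minimal sketch of a protocol-aware success check; `graphql_call_failed` is a hypothetical name.

```python
import json


def graphql_call_failed(status, body):
    """Classify a GraphQL-over-HTTP response for monitoring purposes.

    Errors are often reported in an "errors" array alongside HTTP 200,
    so the body has to be inspected, not just the status code.
    """
    if status >= 400:
        return True
    try:
        payload = json.loads(body)
    except ValueError:  # json.JSONDecodeError subclasses ValueError
        return True     # an unparseable body counts as a failure
    if not isinstance(payload, dict):
        return True
    return bool(payload.get("errors"))
```

This sketch treats partial successes (both `data` and `errors` present) as failures; you may prefer to track them as a separate category.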

Geographical distribution: APIs may be distributed across multiple regions or data centers. Monitoring tools must account for latency variations and regional differences.

Business context: Monitoring isn’t just about technical metrics; it’s about understanding the business impact. Tools should correlate API performance with user experience, revenue, and business goals.

Ways to monitor APIs

When monitoring, it’s important to track metrics that describe the vital aspects of an application’s performance. When it comes to APIs, some important metrics include the number of calls to API functions, the time to respond to API function calls, and the amount of data returned.

There are two predominant methods of API monitoring: synthetic monitoring and real user monitoring (RUM).

Synthetic monitoring is an application performance monitoring practice that emulates the paths users might take when engaging with an application. It uses scripts to simulate user behavior across scenarios, geographic locations, device types, and other variables, so it can automatically track application uptime and show how your application responds to typical user behavior. Once this data has been collected and analyzed, a synthetic monitoring solution can give you crucial insights into how well your app is performing.
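At its core, a synthetic API check is a scripted request run on a schedule, with its status and latency recorded. A minimal single-step sketch using only Python’s standard library; the function name `synthetic_check`, the 1,000 ms latency budget, and the optional bearer token are assumptions for illustration, not features of any particular product. Multi-step checks chain several such requests.

```python
import time
import urllib.error
import urllib.request


def synthetic_check(url, timeout_s=5.0, token=None, max_latency_ms=1000.0):
    """Run one scripted GET request and report status, latency, and pass/fail."""
    request = urllib.request.Request(url)
    if token:
        # Protected endpoints often expect an OAuth 2.0 bearer token.
        request.add_header("Authorization", f"Bearer {token}")
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(request, timeout=timeout_s) as response:
            status = response.status
            response.read()  # include the body transfer in the measured time
    except urllib.error.HTTPError as err:
        status = err.code  # 4xx/5xx responses are raised as HTTPError
    except OSError:
        status = None  # DNS failure, refused connection, or timeout
    latency_ms = (time.perf_counter() - start) * 1000
    ok = status is not None and 200 <= status < 300 and latency_ms <= max_latency_ms
    return {"status": status, "latency_ms": latency_ms, "ok": ok}


# Hypothetical usage, run on a schedule from several locations:
# result = synthetic_check("https://api.example.com/health")
```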

RUM also provides valuable insights into an application’s usability and performance, but it does so by observing the actual experiences of end users. Rather than relying on a data sample, RUM captures every aspect of a customer’s interaction or transaction, allowing developers to visualize the customer’s entire journey within the app and resolve issues quickly with real-time data. This monitoring method provides full-stack observability for the end-user experience, eliminating blind spots that impact business performance and supporting better-informed decisions throughout development.

While synthetic monitoring and RUM gather their insights in different ways, they both facilitate a smoother application development process and end-user experience. When used together for API monitoring, they can provide more complete visibility of your API performance.

API testing complements monitoring

While API monitoring helps you understand the performance of APIs in a production environment, you’ll also want to be aware of any problems associated with your APIs before the application is released. This is done through testing.

With agile methodologies, developers constantly update code and integrate it into production services. Because every change risks introducing new bugs, developers routinely test their own code before it is released to production, and the same practice should extend to the third-party APIs they depend on. If a problem appears during testing, developers can quickly identify the root cause by comparing the code of the last stable release with the release that produced the issue.
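A contract check is one simple form of API test: it confirms that a response still contains the fields and types your code relies on, which can catch a breaking change from a third-party provider before it reaches production. A minimal sketch; `check_contract` and the weather fields below are hypothetical, and a JSON Schema validator is a more complete option for real contracts.

```python
def check_contract(payload, required):
    """Return a list of contract violations for a JSON-decoded response.

    `required` maps each field name to the Python type the caller expects.
    """
    problems = []
    for field, expected_type in required.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


# Hypothetical contract for a weather forecasting API response.
WEATHER_CONTRACT = {"city": str, "temperature_c": float, "humidity": int}
```

Run against a provider’s sandbox or staging endpoint in CI, an empty result means the contract still holds.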

Both testing and monitoring play vital roles in the development of quality applications. Testing helps prevent bugs from being released into production code while monitoring helps to identify failures or performance issues when code is running in production.

Choosing an API monitoring tool

When choosing an API monitoring tool, keep in mind that not all have the same breadth of functionality or depth of analytic capabilities. Look for key features, including:

- Comprehensive analysis of all data, not just samples of monitoring data

- Support for both RUM and synthetic monitoring

- Ability to identify third-party APIs that are adversely affecting application performance

The best way to understand how your customers are using and experiencing APIs is to collect data about and track every user-API interaction, automatically. If you’re interested in aggregate measures, such as the average response time to an API function call, then missing a small number of outliers in a large sample may not materially affect the results. However, if you want to trigger an alert based on an outlier, such as a sudden spike in latency in one region or for a single customer, sampling may not give the alerting system the data it needs.
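A small simulation makes the difference concrete. The traffic below is hypothetical: 1,000 requests at 100 ms, one of which spikes to 5 seconds. A deterministic 1-in-10 sample barely changes the average but drops the spike entirely, so an outlier-based alert never fires.

```python
def mean(values):
    return sum(values) / len(values)


def alert_fires(latencies, threshold_ms):
    """An outlier-based alert: fire if any single request exceeds the threshold."""
    return any(ms > threshold_ms for ms in latencies)


# Hypothetical traffic: 1,000 requests at 100 ms, one of which spikes to 5 s.
full = [100.0] * 1000
full[537] = 5000.0

# Deterministic 1-in-10 sampling keeps indices 0, 10, 20, ... and skips 537.
sampled = full[::10]

print(f"mean:  full={mean(full):.1f} ms, sampled={mean(sampled):.1f} ms")
print(f"alert: full={alert_fires(full, 1000)}, sampled={alert_fires(sampled, 1000)}")
# mean:  full=104.9 ms, sampled=100.0 ms
# alert: full=True, sampled=False
```

Random sampling behaves similarly: at a 10% rate, any single outlier is missed 90% of the time.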

RUM, which collects and analyzes every interaction in real time rather than a sample, shows what customers are actually experiencing. This is needed to identify and remediate failures and slowdowns as soon as they occur. Synthetic monitoring is helpful for establishing a performance baseline. For example, API calls in one geographic region may be consistently slower than calls in another. This may stem from the API provider’s implementation choices and is unlikely to change soon. In that case, you can plan accordingly and limit your use of API services in that region, or adjust your alerting thresholds to account for the longer latency in regions with poorer performance.
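Adjusting alert thresholds per region can be as simple as deriving each threshold from that region’s synthetic baseline. A minimal sketch, assuming a hypothetical 1.5x headroom over the baseline p95 and hypothetical region names and samples; real baselines would contain far more samples.

```python
import math


def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    return ordered[math.ceil(95 * len(ordered) / 100) - 1]


def regional_thresholds(baselines, headroom=1.5):
    """Per-region latency alert thresholds derived from synthetic baselines.

    `baselines` maps a region name to latency samples in milliseconds;
    `headroom` is the multiple of the baseline p95 that triggers an alert.
    """
    return {region: p95(samples) * headroom for region, samples in baselines.items()}


# Hypothetical baselines: one region is consistently 120 ms slower.
baselines = {
    "eu-west": list(range(81, 101)),    # 81..100 ms
    "ap-south": list(range(201, 221)),  # 201..220 ms
}
thresholds = regional_thresholds(baselines)
```

With these numbers, a 200 ms response would trigger an alert in the faster region but count as normal in the slower one, instead of one global threshold either paging constantly for the slow region or missing regressions in the fast one.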

With its AI-to-everything approach, the Dynatrace Software Intelligence Platform provides API monitoring capabilities, including multi-request HTTP monitors and detection of impacting third-party API calls with RUM. This approach delivers a comprehensive view into the state of your API usage that’s essential to ensure the high availability and consistent performance your customers expect.

To learn more about performance monitoring in your organization’s hybrid multicloud, check out our on-demand webinar Network & infrastructure performance monitoring of your hybrid multi-cloud, and begin your journey to full-environment observability today.
