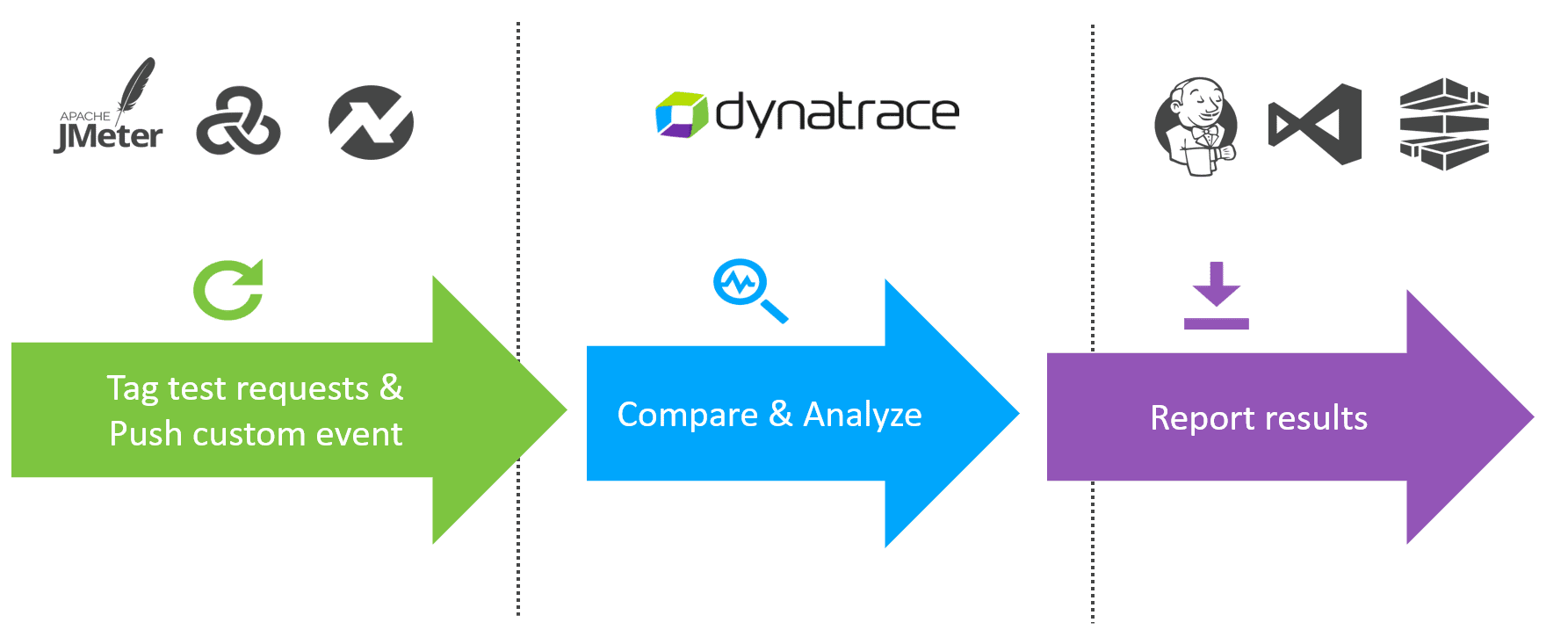

Dynatrace and load testing tools integration

By integrating Dynatrace into your existing load testing process, you can stop broken builds in your delivery pipeline earlier.

Available integrations

Dynatrace offers several out-of-the-box integrations with test automation frameworks.

Test automation involves the use of special software (separate from the software being tested) to control the execution of tests and the comparison of actual outcomes with predicted outcomes. Test automation can automate some repetitive tasks in a formalized testing process already in place or perform additional testing that would otherwise be difficult to do manually. Test automation is important for continuous delivery and continuous testing.

Tag test requests and push custom events

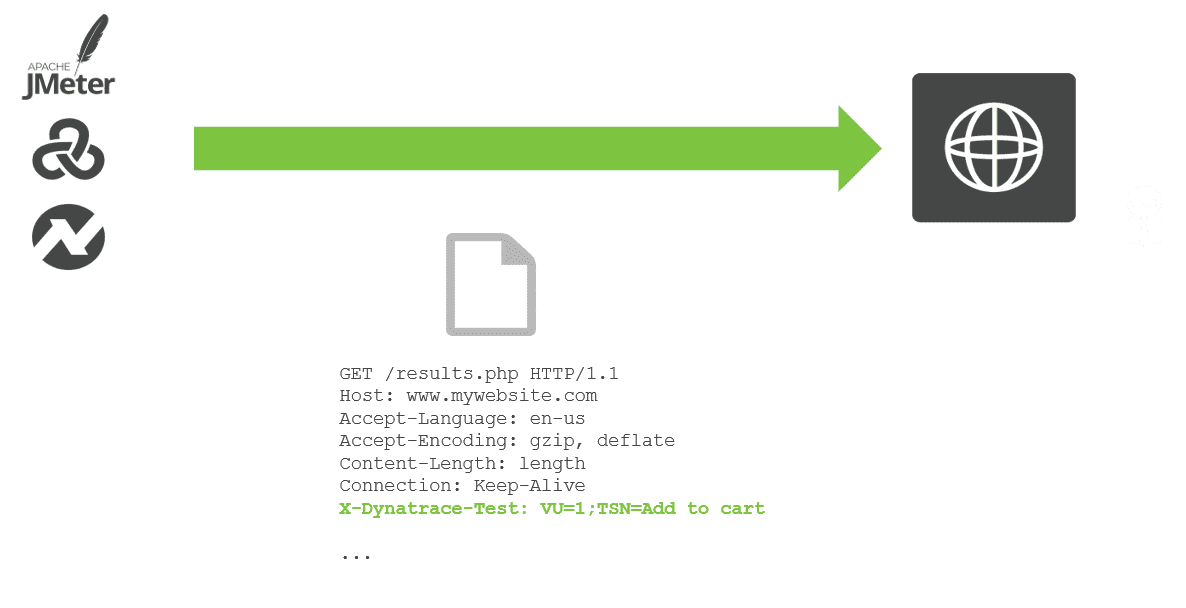

Tag tests with HTTP headers

While executing a load test from your load testing tool of choice (JMeter, Neotys, LoadRunner, etc.), each simulated HTTP request can be tagged with additional HTTP headers that contain test-transaction information (for example, script name, test step name, and virtual user ID). Dynatrace can analyze incoming HTTP headers, extract this contextual information from the header values, and tag the captured requests with request attributes. Request attributes enable you to filter your monitoring data based on defined tags.

You can use any (or multiple) HTTP headers or HTTP parameters to pass context information. The extraction rules can be configured via Settings > Server-side service monitoring > Request attributes.

The following examples use the header x-dynatrace-test with this set of key/value pairs:

| Key | Description |
| --- | --- |
| VU | Virtual User ID of the unique user who sent the request. |
| SI | Source ID identifies the product that triggered the request (JMeter, LoadRunner, Neotys, or other). |
| TSN | Test Step Name is a logical test step within your load testing script (for example, |
| LSN | Load Script Name is the name of the load testing script. This groups a set of test steps that make up a multistep transaction (for example, an online purchase). |
| LTN | The Load Test Name uniquely identifies a test execution (for example, |
| PC | Page Context provides information about the document that is loaded in the currently processed page. |
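As an illustration of how a load testing script might assemble this header, here is a minimal sketch. The `key=value;key=value` layout, the helper names `build_test_header` and `parse_test_header`, and the sample values are assumptions made for this example; any layout works as long as your request attribute extraction rules match it.

```python
# Keys come from the table above; the "key=value;key=value" layout is an assumption.
ALLOWED_KEYS = ("VU", "SI", "TSN", "LSN", "LTN", "PC")


def build_test_header(**fields: str) -> str:
    """Serialize test context into a single header value (hypothetical helper)."""
    unknown = set(fields) - set(ALLOWED_KEYS)
    if unknown:
        raise ValueError(f"unsupported keys: {sorted(unknown)}")
    parts = []
    for key in ALLOWED_KEYS:  # fixed order keeps the header value deterministic
        if key in fields:
            value = str(fields[key])
            if ";" in value or "=" in value:
                raise ValueError(f"value for {key} must not contain ';' or '='")
            parts.append(f"{key}={value}")
    return ";".join(parts)


def parse_test_header(value: str) -> dict:
    """Split a header value back into its key/value pairs."""
    result = {}
    for pair in value.split(";"):
        if not pair:
            continue
        key, _, val = pair.partition("=")
        result[key.strip()] = val.strip()
    return result


# Sample values are made up for illustration.
header_value = build_test_header(
    VU="7", SI="JMeter", TSN="Add to cart", LSN="Online purchase", LTN="nightly-run"
)
headers = {"x-dynatrace-test": header_value}
```

The resulting `headers` dictionary can be passed to whichever HTTP client your load script uses, so that every simulated request carries the same test context.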

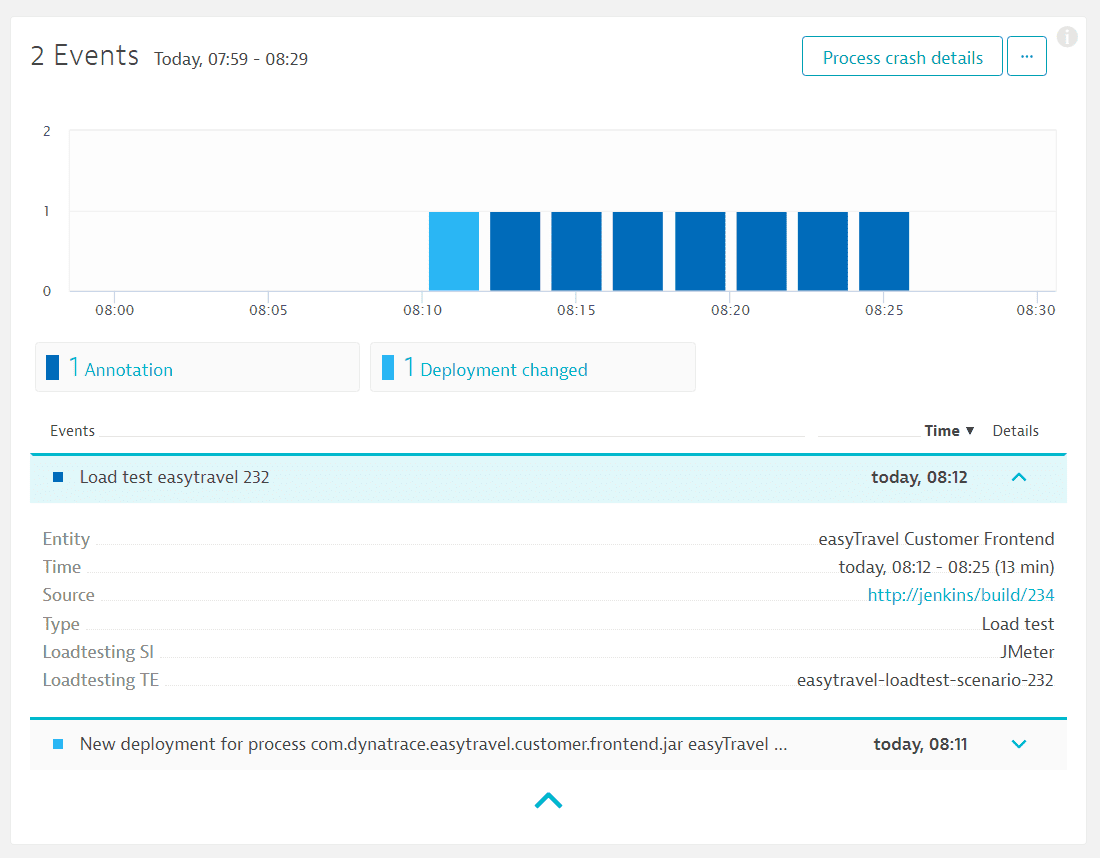

Push custom events

When running a load test, you can push additional context information to Dynatrace using the custom event API. A custom annotation then appears in the Events section on all overview pages of the entities that are defined in the API call (see example below).

Load test events are also displayed on associated services pages (see example below).
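The sketch below shows one way a test runner could prepare such an event. It assumes the Events API v1 endpoint (`/api/v1/events`), a `CUSTOM_ANNOTATION` event type, and an `Api-Token` authorization header; the payload field names reflect my understanding of that API and should be checked against the API reference for your environment. The function name, URL, token, and tag are hypothetical.

```python
import json
import urllib.request


def build_load_test_event(base_url, api_token, test_name, start_ms, end_ms, tag):
    """Prepare (but do not send) a custom annotation event for tagged services."""
    payload = {
        "eventType": "CUSTOM_ANNOTATION",
        "start": start_ms,  # epoch milliseconds
        "end": end_ms,
        "source": "load-test-runner",  # hypothetical source label
        "annotationType": "Load test",
        "annotationDescription": test_name,
        # Attach the event to every service that carries the given tag (assumed schema).
        "attachRules": {
            "tagRule": [
                {
                    "meTypes": ["SERVICE"],
                    "tags": [{"context": "CONTEXTLESS", "key": tag}],
                }
            ]
        },
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/v1/events",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Api-Token {api_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder environment URL and token; replace with your own.
request = build_load_test_event(
    "https://example.invalid", "dummy-token", "nightly-run",
    1700000000000, 1700000600000, "loadtest",
)
```

To actually send the event, pass `request` to `urllib.request.urlopen` from a step that runs at the start or end of the load test.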

Push load testing metrics to Dynatrace

You can also push specific metrics from your load testing tool (throughput, user load, etc.) to Dynatrace via the custom metrics API.

For JMeter, there is a new open-source plugin you can use to push the metrics directly to Dynatrace via the Metrics API.
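If your tool has no ready-made plugin, metrics can be formatted by hand. The sketch below assumes the plain-text line format accepted by the Metrics API v2 ingest endpoint (`metric.key,dimension=value value timestamp`) and deliberately supports only a conservative subset of it: unquoted dimension values made of letters, digits, dots, underscores, and hyphens. The metric key, dimension name, and function name are hypothetical.

```python
import re

# Conservative subset: only simple, unquoted tokens are accepted (an assumption).
_SAFE_TOKEN = re.compile(r"^[A-Za-z0-9._-]+$")


def to_metric_line(key, value, dimensions=None, timestamp_ms=None):
    """Format one data point as 'key,dim=value value [timestamp]'."""
    if not _SAFE_TOKEN.match(key):
        raise ValueError(f"unsupported metric key: {key!r}")
    parts = [key]
    for name, dim_value in sorted((dimensions or {}).items()):
        if not _SAFE_TOKEN.match(name) or not _SAFE_TOKEN.match(str(dim_value)):
            raise ValueError(f"unsupported dimension: {name!r}={dim_value!r}")
        parts.append(f"{name}={dim_value}")
    line = f"{','.join(parts)} {value}"
    if timestamp_ms is not None:
        line += f" {timestamp_ms}"  # epoch milliseconds
    return line


# Several data points are sent as newline-separated lines in one request body.
body = "\n".join(
    [
        to_metric_line("loadtest.throughput", 120.5, {"ltn": "nightly-run"}, 1700000000000),
        to_metric_line("loadtest.users", 50, {"ltn": "nightly-run"}, 1700000000000),
    ]
)
```

The `body` string would then be posted as plain text to the ingest endpoint with an API token that has permission to ingest metrics.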

Compare & analyze

There are different ways to analyze the data. Your approach should be based on the type of performance analysis you want to do (for example, crashes, resource and performance hotspots, or scalability issues). The following is an overview of some useful approaches for analyzing your load tests. Any other Dynatrace analysis and diagnostic function can be used as well.

Filter


Via request attributes

Once you've tagged requests with relevant HTTP headers, you can use the request attributes you've defined to filter your monitoring data.

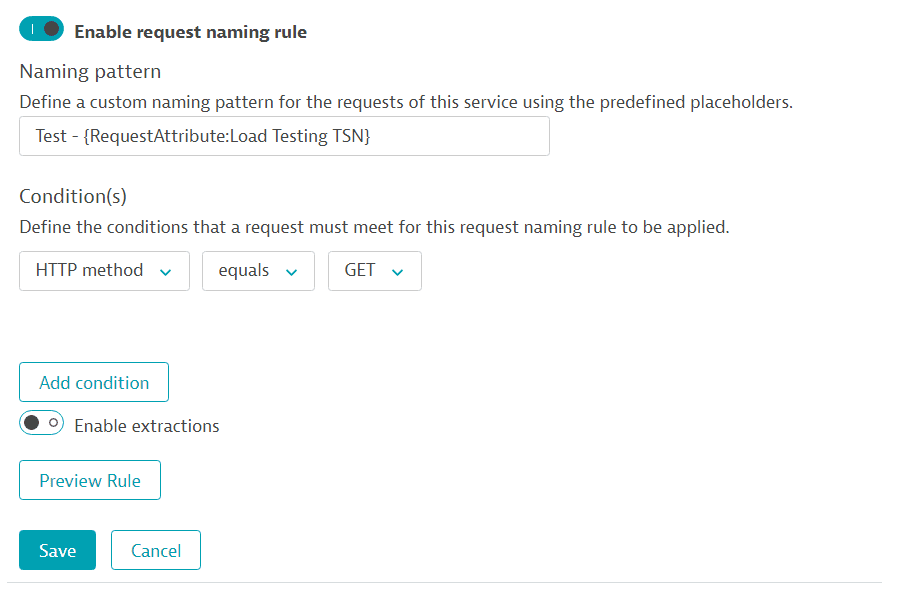

Via request naming rules

You can define a web-request naming rule based on request attributes to easily access the monitoring data of the load tests. For example, you can define a naming rule on the test step name (such as TSN in our example below).

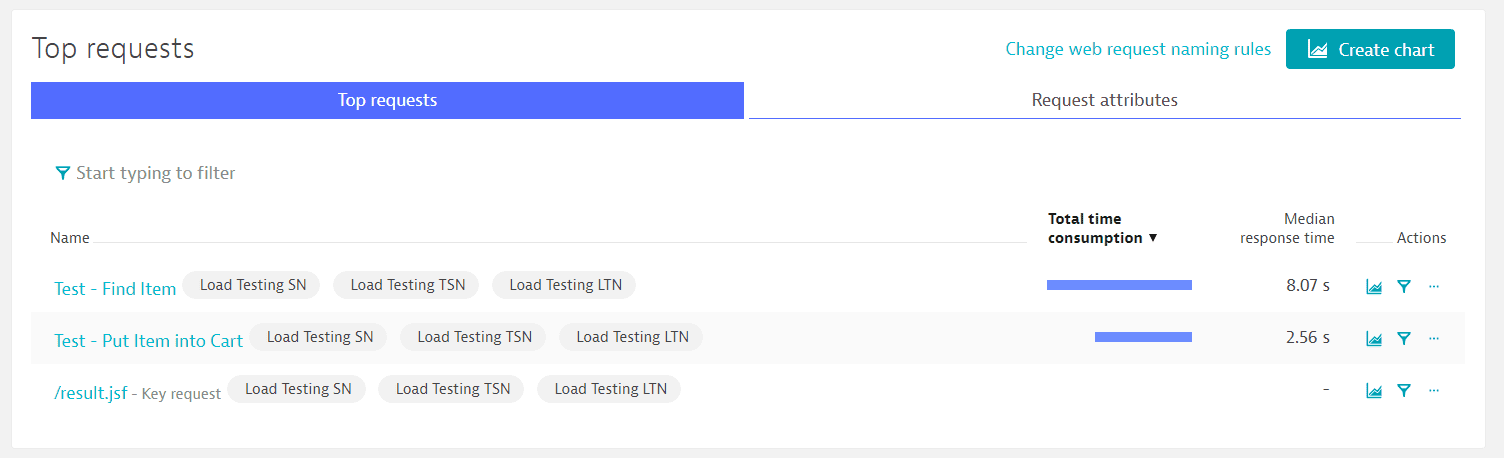

As a result, this rule creates a separate trackable request for each test step. Because request naming rules produce distinct service requests, each request is independently baselined and monitored for performance anomalies.


Via key requests

If you've marked requests as key requests, these requests will be separately accessible via the Dynatrace Metrics API v1 endpoint.

Multidimensional analysis

You can use data captured via request attributes to build your own multidimensional analysis. Multidimensional analysis views are useful for monitoring the evolution of your load tests over time.

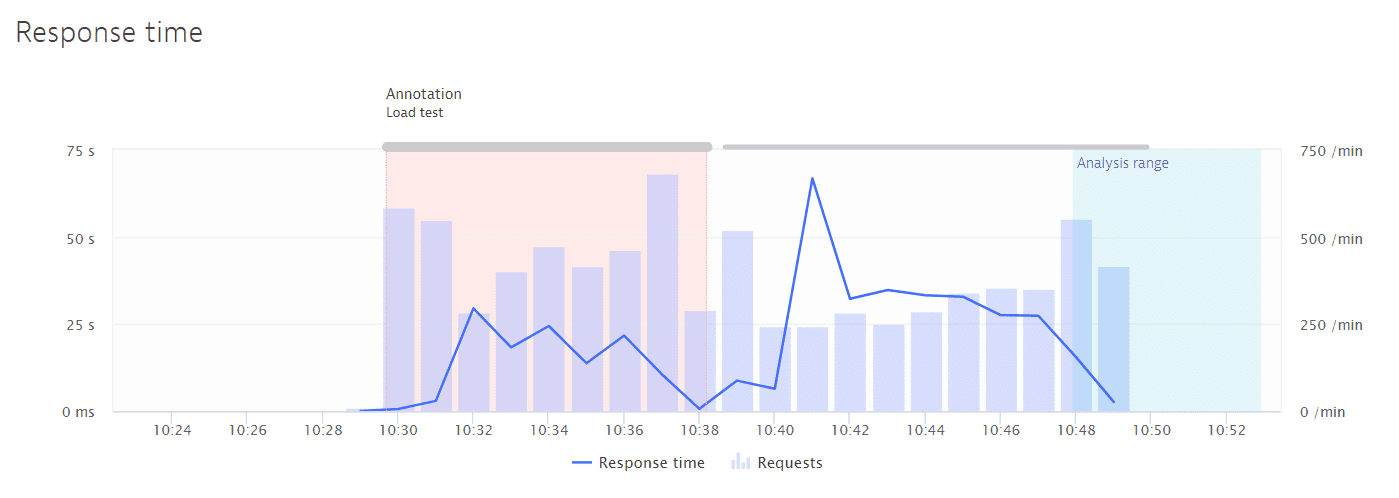

Comparison


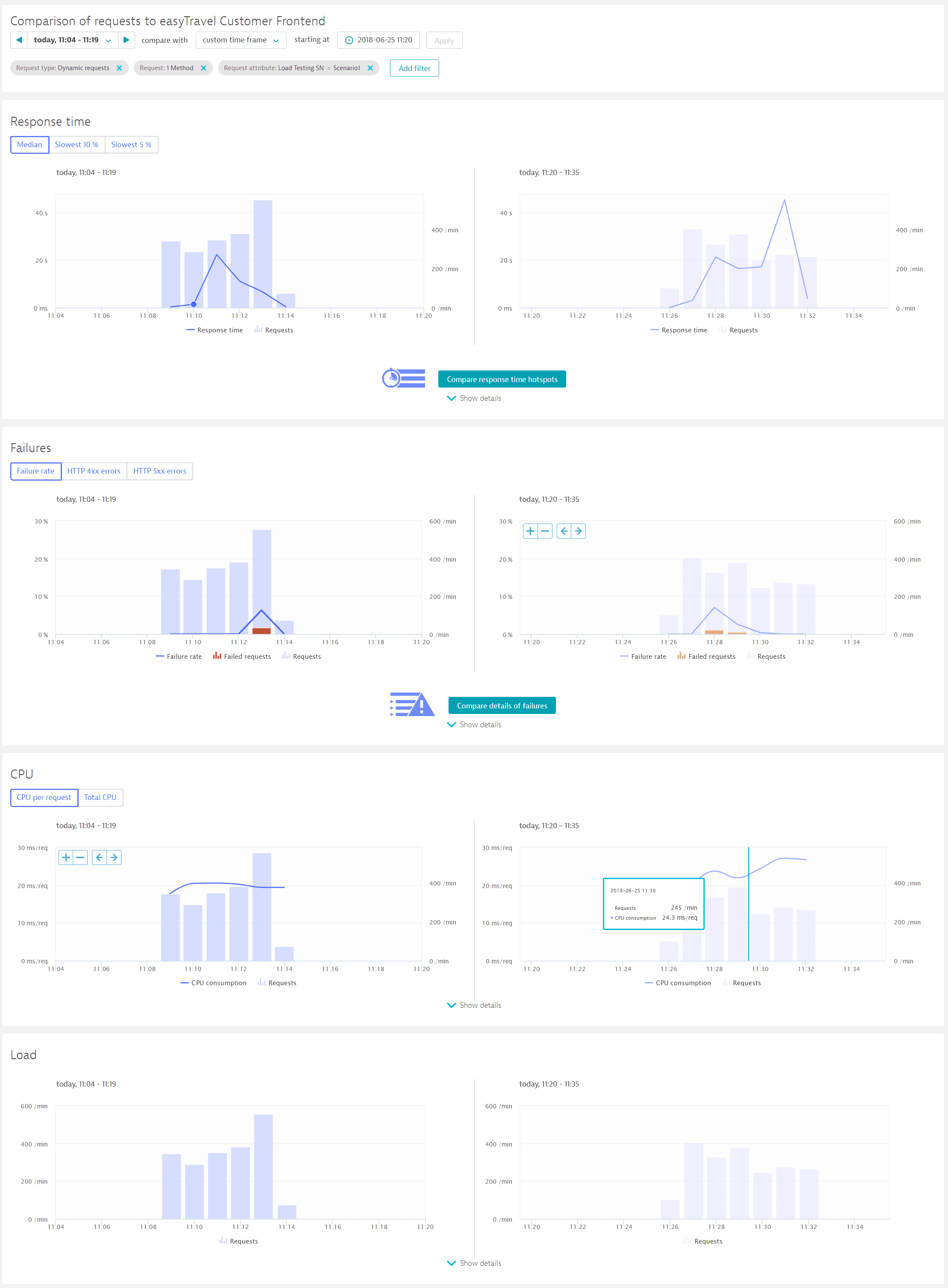

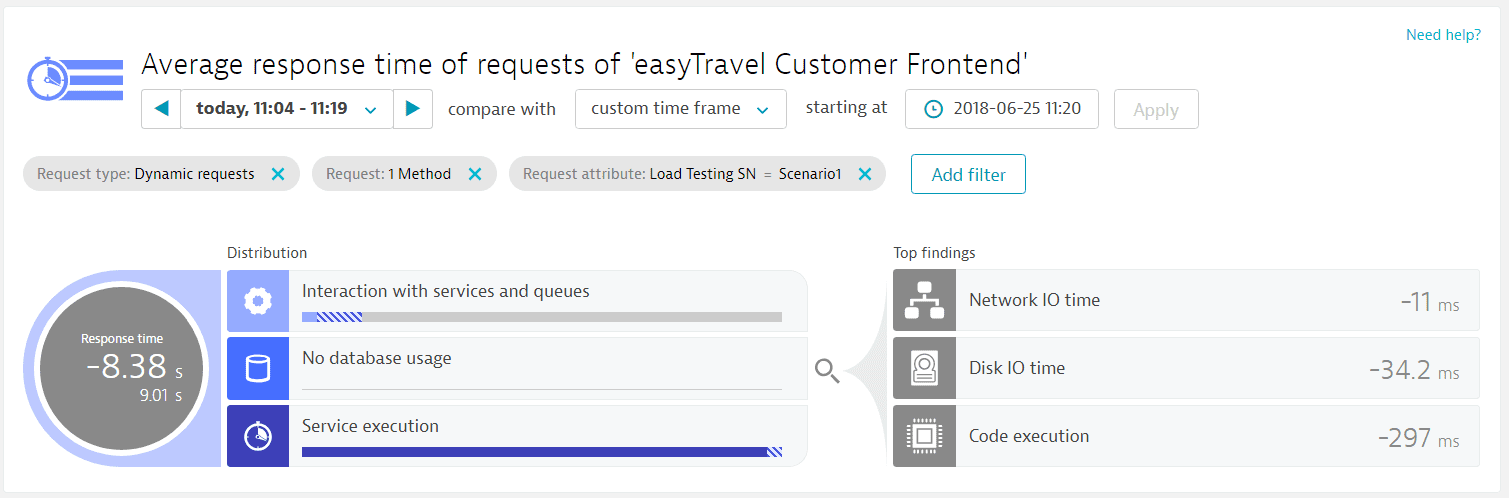

The Compare view enables you to compare critical service-request metrics (Response time, Failures, CPU, and Load) between two load tests.


The Response time analysis and Failure analysis views can be used to understand performance changes in detail.

Diagnostics

The top web requests diagnostic tool can be used to analyze the top web requests across all services. Use the request attributes you've defined to filter the load test requests.

Report results

The Metrics API v1 enables you to pull data for specific entities (processes, services, service methods, etc.) and feed it into the tools that you use to determine when a build pipeline should be stopped.
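What "stopping the pipeline" means depends on your own criteria. As a minimal sketch, the function below compares the mean response time of the current test against a baseline run and flags a regression above a tolerance. The function name, the 10% default tolerance, and the sample numbers are all hypothetical; the input lists stand in for data points you would have pulled from the API.

```python
from statistics import mean


def should_stop_pipeline(current_ms, baseline_ms, tolerance=0.10):
    """Return True when the current mean response time exceeds the
    baseline mean by more than the given relative tolerance."""
    if not current_ms or not baseline_ms:
        raise ValueError("both series need at least one data point")
    return mean(current_ms) > mean(baseline_ms) * (1 + tolerance)


# Made-up response times in milliseconds.
regressed = should_stop_pipeline([120, 130, 125], [100, 105, 95])
stable = should_stop_pipeline([102, 104], [100, 100])
```

A CI step could exit with a non-zero status when the function returns `True`, which most build servers treat as a failed stage.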

The Problem API delivers metrics and details about problems that Dynatrace detects during load tests.
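Once problems have been fetched, a pipeline usually only cares about those that overlap the test run. The sketch below filters a list of problem records by time window. It assumes each record is a dictionary with `startTime` and `endTime` in epoch milliseconds and that an `endTime` of `-1` marks a problem that is still open; verify both against the response format of the Problem API version you use. The sample records are made up.

```python
def problems_during_test(problems, test_start_ms, test_end_ms):
    """Return the problems whose time span overlaps the test window."""
    hits = []
    for problem in problems:
        start = problem["startTime"]
        end = problem.get("endTime", -1)
        if end == -1:  # assumed convention for a problem that is still open
            end = float("inf")
        if start <= test_end_ms and end >= test_start_ms:
            hits.append(problem)
    return hits


# Made-up records for illustration.
sample = [
    {"title": "Response time degradation", "startTime": 1500, "endTime": 1800},
    {"title": "Old incident", "startTime": 100, "endTime": 200},
    {"title": "Still open", "startTime": 1900, "endTime": -1},
]
overlapping = problems_during_test(sample, test_start_ms=1000, test_end_ms=2000)
```

An empty result suggests that no problem was detected during the run; a non-empty one can be logged or used to fail the build.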

Additional considerations

Maintenance windows

If you run your load test in a production environment and don't want to negatively influence your overall service and application baselines, it's a good idea to define your maintenance windows before performing any load testing. Using maintenance windows during load testing ensures that any load spikes, longer-than-usual response times, or increased error rates won’t negatively influence your overall baselining.

Alternatively, if you have a dedicated load testing environment and want to leverage the problem detection during load tests, you shouldn't use maintenance windows during load test execution.