I wanted to share a story that most of the non-technical among us can relate to: the website goes down, so it must be the hosting provider. This post is an account of a conversation with a friend who had a website outage, and of the performance testing I did for her afterwards, which showed that her hosting provider was not to blame for her website issues.

I believe this serves as a perfect example of why marketers, designers, and some developers should pay more attention to the complexities within a website, and not be so quick to play the blame game.

This post originally appeared on MarketingMag.com.au but has been reworked for this audience.

It begins with a phone call… (warning: it contained profanities)

I got a phone call yesterday from an old colleague who was having major website performance issues that were hurting user experience. She now works as a digital marketing manager at a global employment resourcing firm, and to say she was frantic is an understatement.

The conversation went like this:

“Dave I need help. Our website has been hacked continuously, it’s gone down twice and both times for extended periods. It’s a major cluster [obscenity] because our contractors can’t submit time sheets, so everything is being done manually and everyone is pointing fingers at me!”

She then went on to dump the hosting provider in it…

“Our hosting provider is rubbish. Can you recommend a good one? The site is constantly down and it’s slow”

Right. Website is down and it’s slow. Must be the host. Easy to assume, but not necessarily correct.

How about we performance test the site and diagnose the issue?

My suggestion, rather than simply shipping the same problems over to another provider, was to performance test the site using Dynatrace Synthetic Monitoring.

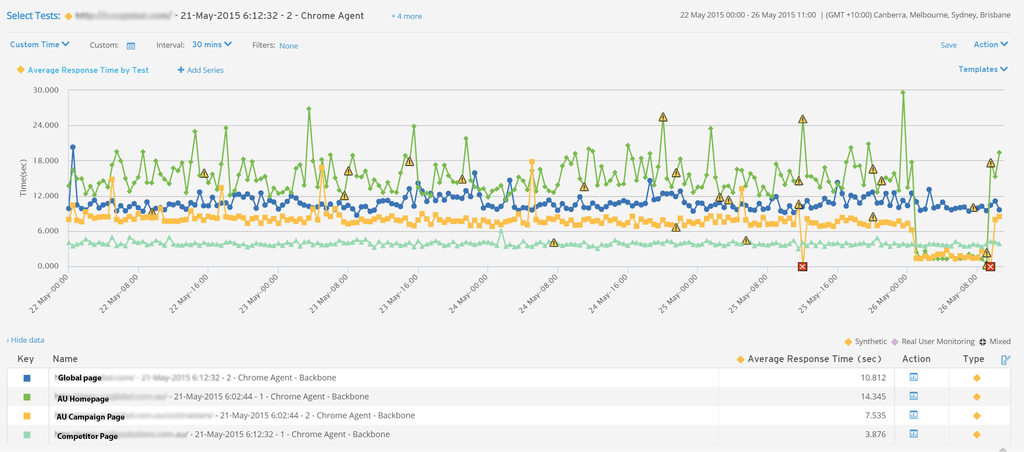

I set up a few basic tests, from backbone locations in Melbourne and Sydney, using Chrome. Specifically I was looking for:

- Response time and availability

- Variance in performance (i.e. is the response time fluctuating or is it consistent?), and

- If there is an issue, what is causing it? (e.g. a third-party hosted plugin, image sizes, failing objects).
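To make the first two checks concrete, here is a minimal sketch of how they could be computed from raw test results. It is not the Dynatrace API; the `samples` list and the `summarise` helper are hypothetical, and I'm assuming each test run yields either a response time in seconds or `None` when the check failed.

```python
from statistics import mean, pstdev

# Hypothetical test results: response time in seconds, or None if the check failed.
samples = [4.0, 6.0, None, 8.0, 6.0]

def summarise(samples):
    """Return availability (%), mean response time and its spread for one test."""
    if not samples:
        raise ValueError("no samples to summarise")
    successes = [s for s in samples if s is not None]
    availability = 100.0 * len(successes) / len(samples)
    # With no successful runs there is no response time to report.
    avg = mean(successes) if successes else None
    spread = pstdev(successes) if successes else None
    return {"availability_pct": availability, "mean_s": avg, "stdev_s": spread}

summary = summarise(samples)
print(summary)
```

A large standard deviation relative to the mean is the signal that response time is fluctuating rather than consistently slow.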

I tested the following pages:

- Homepage

- Another landing page (I wanted to understand whether it was a site-wide issue or an isolated page issue),

- Competitor homepage (it’s important to benchmark your site continually, irrespective of whether you’re having problems with your website performance or not), and

- Their global site (this is another way to benchmark performance against a relevant metric).

In the space of 12 hours I was able to see the problem pretty clearly:

- Homepage response time was averaging 16 seconds. (Ouch.)

- A major outage occurred at 10pm for at least an hour.

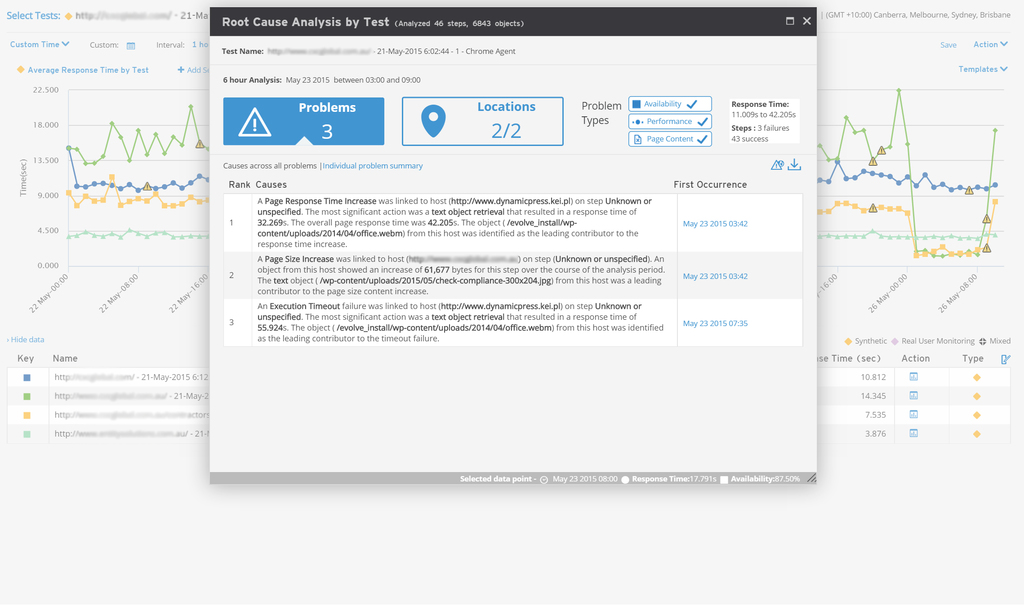

So what’s causing the issues?

The performance issues stemmed from one or more third-party plugins, not the host.

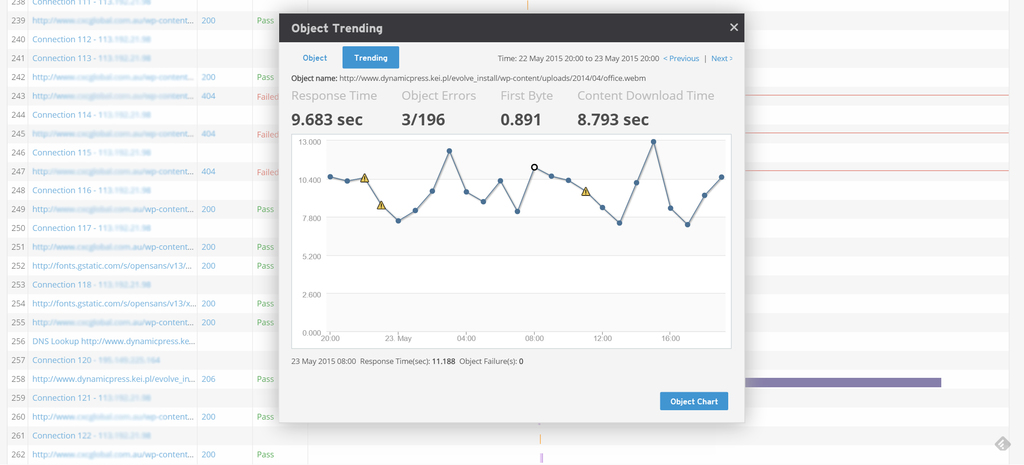

Using object trending analysis, I was also able to track that object over a period of time and see just how much the third-party plugin was dragging down the overall site.
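If you only have a list of page objects and their load times, the same idea can be roughed out by hand. The sketch below is an illustration under my own assumptions, not what the monitoring tool does internally: the URLs, the `FIRST_PARTY_HOST` value and the `time_by_host` helper are all made up. Note that objects load in parallel, so these totals show which host is costing the most, not the page load time itself.

```python
from collections import defaultdict
from urllib.parse import urlparse

FIRST_PARTY_HOST = "www.example.com"  # hypothetical: the site's own host

# Hypothetical waterfall entries: (object URL, load time in seconds).
objects = [
    ("https://www.example.com/", 1.0),
    ("https://www.example.com/logo.png", 0.5),
    ("https://plugin.example.net/widget.js", 12.0),
    ("https://cdn.example.org/font.woff", 0.5),
]

def time_by_host(objects):
    """Sum the load time of every object, grouped by the host that served it."""
    totals = defaultdict(float)
    for url, seconds in objects:
        totals[urlparse(url).hostname] += seconds
    return dict(totals)

totals = time_by_host(objects)
# Anything not served from the site's own host counts as third party.
third_party = {h: t for h, t in totals.items() if h != FIRST_PARTY_HOST}
slowest = max(third_party, key=third_party.get)
print(slowest, third_party[slowest])
```

Running this over several test runs and comparing the totals gives a crude version of the trend view.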

But where to from here?

In a short time I was able to draw some conclusions that pinpointed the issue. I’m now helping her utilise this data to rebuild the site.

The main learnings were:

- The site is poorly constructed and has ongoing response time issues.

- Slow websites face high abandonment rates and user complaints. Our data suggests 40% of users will abandon a site after 3 seconds.

- The main problems stem from third-party plugins. It's too easy in WordPress to add features to the site by adding plugins, but you should always understand the impact each one has on performance.

- Don’t make assumptions when there is data available that can pinpoint exact problems.

- Performance monitoring helps you understand what the performance issues are so you can make informed decisions that will actually solve the problem.

- Set benchmarks for how you want your website to perform day in, day out. The average Australian retail load time is 5.9 seconds, so use data like this to keep your marketing team and your developers engaged and constantly monitoring performance. As soon as the numbers start to slip, you will know you have a problem creeping up on you.
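The benchmark idea in the last point can be sketched in a few lines. This is one possible approach, assuming you record a load time per test run; the `breaches` helper, the window size and the `history` values are hypothetical, and only the 5.9 second figure comes from the data quoted above.

```python
from statistics import mean

BENCHMARK_S = 5.9  # average Australian retail load time quoted above

def breaches(load_times, window=3, benchmark=BENCHMARK_S):
    """Return the start index of each window whose average exceeds the benchmark."""
    return [
        i
        for i in range(len(load_times) - window + 1)
        if mean(load_times[i:i + window]) > benchmark
    ]

# Hypothetical load times in seconds, oldest first.
history = [4.0, 5.0, 6.0, 7.0, 8.0]
print(breaches(history))
```

Averaging over a window rather than alerting on a single slow run means one bad sample won't trigger a false alarm, while a steady slide past the benchmark will.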
